A theoretical physicist's detailed assessment of Anthropic's Claude 4.5 Opus model reveals surprising capabilities in scientific research assistance, with performance comparable to a second-year graduate student and workflow acceleration approaching 10x.

What the Physicist Found

Matthew Schwartz, a theoretical physicist at Harvard University, conducted an extensive evaluation of Claude 4.5 Opus for physics research tasks. In a guest post highlighted by AI researcher Alex Albert, Schwartz reported that the AI model performed at "roughly the level of a second-year grad student" across various physics research activities.

The most significant finding was the acceleration factor: Schwartz claims the AI helped him accelerate his research workflow by approximately 10x. The gain appears to come from the model's ability to handle routine computational tasks, literature review, and preliminary analysis, work that typically consumes significant researcher time, although the assessment does not break the figure down by task.

Context and Significance

This assessment comes from an established physicist rather than an AI company's marketing team, lending credibility to the performance claims. Schwartz's evaluation focused on practical research applications rather than standardized benchmarks, providing insight into real-world utility for scientific work.

The "second-year grad student" comparison is particularly meaningful in academic contexts: it suggests the model can handle intermediate-level research tasks when supervised and guided by senior researchers. This is a significant advance over earlier AI systems, which struggled with the nuanced reasoning required in theoretical physics.

Limitations and Caveats

While Schwartz reported substantial acceleration, the assessment doesn't specify which research tasks benefited most from AI assistance. Theoretical physics encompasses diverse activities, including mathematical derivations, literature synthesis, code development, and conceptual reasoning, and each is suited to AI assistance to a different degree.

The 10x acceleration claim, while dramatic, likely represents best-case scenarios for well-defined tasks rather than across-the-board productivity gains. As with any AI tool, effectiveness depends heavily on the researcher's ability to formulate appropriate prompts and validate outputs.
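The gap between a best-case task speedup and an overall productivity gain can be made concrete with Amdahl's law, a standard formula for the total speedup when only part of a workload gets faster. The sketch below is illustrative only: the fractions of accelerated work (50%, 80%, 100%) are hypothetical values chosen for the example, not figures from Schwartz's assessment, and `overall_speedup` is a name introduced here.

```python
def overall_speedup(accelerated_fraction: float, task_speedup: float) -> float:
    """Amdahl's law: overall speedup when only part of the work gets faster.

    accelerated_fraction: share of total research time spent on tasks the AI
        speeds up (hypothetical input, between 0 and 1).
    task_speedup: how much faster those tasks become (e.g. 10.0 for 10x).
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if task_speedup <= 0:
        raise ValueError("task_speedup must be positive")
    # Time left after acceleration, as a fraction of the original total time:
    # the untouched share plus the accelerated share divided by its speedup.
    remaining_time = (1.0 - accelerated_fraction) + accelerated_fraction / task_speedup
    return 1.0 / remaining_time


if __name__ == "__main__":
    # Illustrative fractions only; none of these come from the assessment.
    for fraction in (0.5, 0.8, 1.0):
        gain = overall_speedup(fraction, 10.0)
        print(f"{fraction:.0%} of work accelerated 10x -> {gain:.2f}x overall")
```

Under these assumptions, a 10x speedup on half of a researcher's time yields less than a 2x gain overall, and the full 10x is reached only if every task is accelerated equally.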

Broader Implications for Scientific Research

Schwartz's experience suggests that current frontier models like Claude 4.5 Opus have reached a threshold where they can meaningfully contribute to advanced scientific research. This development could reshape how theoretical work is conducted, particularly in fields requiring extensive literature review and preliminary calculations.

The acceleration factor, if replicable across research domains, could significantly impact scientific productivity and discovery timelines. However, the assessment doesn't address whether AI assistance leads to fundamentally new insights versus simply accelerating existing workflows.

gentic.news Analysis

Schwartz's assessment represents one of the first credible, domain-expert evaluations of frontier AI models for advanced scientific research. The "second-year grad student" benchmark is particularly telling—it suggests these models have moved beyond simple pattern matching to genuine reasoning capabilities within constrained domains. What's most significant here isn't the absolute performance level, but the acceleration factor: 10x improvements in research velocity are transformative if sustainable across tasks.

This development points toward an emerging paradigm where AI doesn't replace researchers but dramatically amplifies their capabilities. The comparison to graduate students is apt—these models appear to function as tireless, instantly accessible research assistants who can handle routine tasks while human researchers focus on high-level conceptual work. However, we should be cautious about extrapolating from a single physicist's experience to broader scientific domains. Theoretical physics, with its heavy mathematical formalism, may be particularly well-suited to current AI capabilities compared to experimental sciences requiring physical intuition or observational skills.

Looking forward, the most interesting question is whether this acceleration leads to qualitatively different research approaches or simply faster iteration of existing methodologies. If researchers can test 10x more hypotheses in the same time, we might see more exploratory, high-risk research directions becoming feasible. The next frontier will be AI systems that don't just accelerate existing workflows but suggest novel approaches human researchers might not consider—true co-discovery rather than mere acceleration.

Frequently Asked Questions

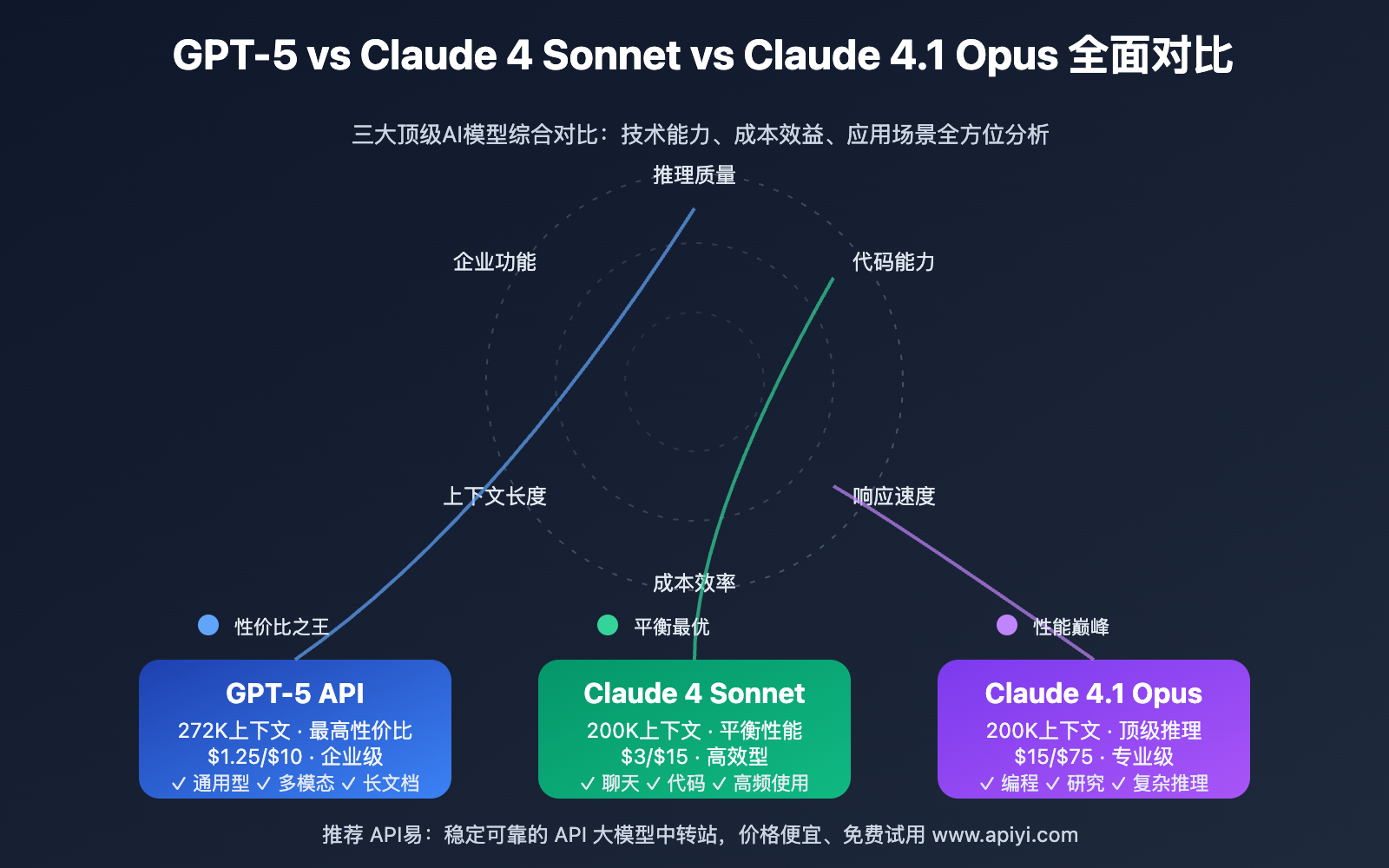

How does Claude 4.5 Opus compare to other AI models for scientific research?

Schwartz's assessment provides no direct comparative benchmarks for physics research, so it cannot rank Claude 4.5 Opus against other models. Other frontier models such as GPT-4 and Gemini Ultra may have comparable capabilities, but the evaluation covered only Claude's performance in theoretical physics contexts. The key finding is that at least one current frontier model has reached sufficient capability to meaningfully accelerate research workflows.

What types of physics research tasks is AI most helpful for?

The assessment does not break results down by task, but AI appears most valuable for routine mathematical derivations, literature review and synthesis, code development for simulations or calculations, and preliminary analysis of results. These are typically time-consuming activities that benefit from systematic execution more than from deep conceptual insight. The AI likely struggles with truly novel conceptual breakthroughs or with developing entirely new theoretical frameworks.

Can AI really accelerate research by 10x, and is this sustainable?

The 10x acceleration likely represents best-case scenarios for well-defined, repetitive tasks rather than across-the-board productivity gains. For certain workflow components like literature review or standard calculations, such acceleration is plausible. However, the most creative and conceptually challenging aspects of research probably see less dramatic improvements. The sustainability of these gains depends on researchers developing effective prompting strategies and maintaining critical evaluation of AI outputs.

Should physics graduate students be concerned about AI replacing them?

Not according to Schwartz's assessment—he compares the AI to a second-year graduate student, suggesting it complements rather than replaces human researchers. The AI appears best suited as a tool that handles routine tasks, allowing human researchers (including graduate students) to focus on higher-level conceptual work. If anything, this development might change the skill set required for successful research, emphasizing AI collaboration and prompt engineering alongside traditional physics expertise.