Key Takeaways

- This article provides a practical overview of building real-time recommendation systems, covering core components like data ingestion, feature stores, and model serving.

- It matters because real-time personalization is becoming a baseline expectation in digital commerce.

What Happened

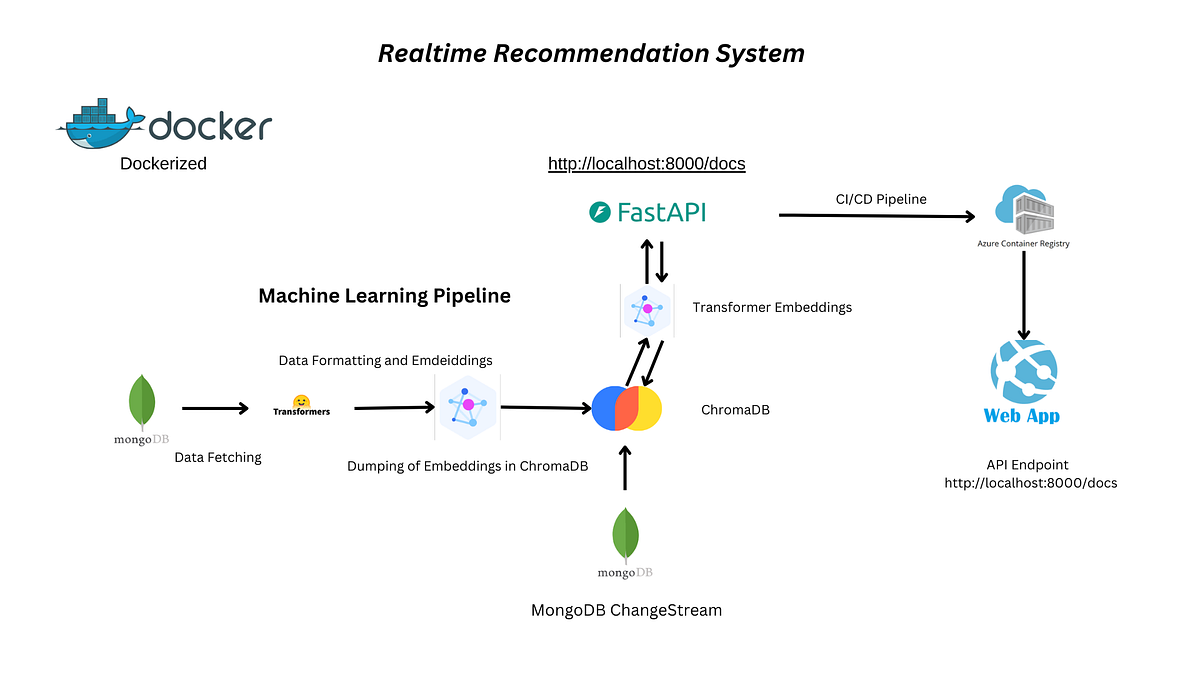

A new technical guide, published on Medium, provides a practical overview of the architecture and components required to build a real-time recommendation system. The source explicitly positions these systems as a cornerstone for personalized experiences in e-commerce and streaming, making its relevance to retail direct and unambiguous. The guide moves beyond high-level concepts to discuss the tangible infrastructure needed to shift from batch-based to real-time personalization.

Technical Details: The Real-Time Stack

The core argument is that modern user expectations demand immediacy. A recommendation should reflect a user's most recent click, view, or purchase, not their behavior from hours or days ago. The guide breaks down the key pillars of a real-time system:

- Data Ingestion & Stream Processing: The foundation is a low-latency pipeline (using tools like Apache Kafka, Flink, or Spark Streaming) that continuously consumes user interaction events (clicks, adds-to-cart, dwell time).

- Real-Time Feature Store: This is critical. The system must compute and serve fresh features—like a user's last five viewed product IDs or a session-level intent score—within milliseconds for model inference. This contrasts with a batch feature store updated only periodically.

- Model Serving & Inference: The recommendation model itself must be deployed in a low-latency serving environment (e.g., TensorFlow Serving, TorchServe, or cloud-native solutions) capable of making predictions using the freshly computed real-time features.

- Orchestration & Ranking: The final step often involves a ranking layer that blends the real-time model's output with other business rules (profitability, inventory) to produce the final ordered list of recommendations.
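The four pillars above can be sketched end to end in a few lines. This is a minimal in-memory illustration under stated assumptions, not the guide's implementation: `Event`, `SessionFeatureStore`, `score_candidates`, `rank_with_business_rules`, the co-view table, and the margin weight are all hypothetical. A production system would replace the in-process deque with a streaming job and a low-latency store, and the toy scorer with a served model.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user_id: str
    product_id: str
    kind: str  # e.g. "view" or "add_to_cart"


class SessionFeatureStore:
    """Toy real-time feature store: keeps each user's last N viewed product IDs."""

    def __init__(self, max_items: int = 5) -> None:
        self._max_items = max_items
        self._recent: dict[str, deque] = {}

    def ingest(self, event: Event) -> None:
        # In production this would be called by a stream consumer, not directly.
        if event.kind != "view":
            return
        views = self._recent.setdefault(event.user_id, deque(maxlen=self._max_items))
        views.append(event.product_id)

    def last_viewed(self, user_id: str) -> list[str]:
        return list(self._recent.get(user_id, ()))


def score_candidates(recent: list[str], co_views: dict[str, dict[str, float]]) -> dict[str, float]:
    """Stand-in for model inference: sums co-view affinities, weighting recent views more."""
    scores: dict[str, float] = defaultdict(float)
    for age, product_id in enumerate(reversed(recent)):
        weight = 1.0 / (1 + age)  # the most recent view gets weight 1.0
        for candidate, affinity in co_views.get(product_id, {}).items():
            if candidate not in recent:
                scores[candidate] += weight * affinity
    return dict(scores)


def rank_with_business_rules(
    scores: dict[str, float],
    margin: dict[str, float],
    in_stock: set[str],
    margin_weight: float = 0.2,  # assumed blend weight, to be tuned by experiment
) -> list[str]:
    """Ranking layer: drops out-of-stock items and blends model score with margin."""
    blended = [
        (score + margin_weight * margin.get(pid, 0.0), pid)
        for pid, score in scores.items()
        if pid in in_stock
    ]
    return [pid for _, pid in sorted(blended, key=lambda item: (-item[0], item[1]))]


if __name__ == "__main__":
    store = SessionFeatureStore()
    store.ingest(Event("u1", "bag-1", "view"))
    recent = store.last_viewed("u1")
    scores = score_candidates(recent, {"bag-1": {"wallet-1": 0.9, "strap-1": 0.5}})
    print(rank_with_business_rules(scores, {"strap-1": 0.9}, {"wallet-1", "strap-1"}))
```

Keeping feature lookup, scoring, and business-rule ranking as separate functions mirrors the separation of concerns in the list above, so each stage can be swapped out independently.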

The guide implicitly highlights the complexity trade-off: real-time systems offer superior relevance and agility but require significant investment in streaming infrastructure and operational monitoring to handle stateful, continuous data processing.

Retail & Luxury Implications

For luxury and retail leaders, this is not speculative future tech—it's the operational backbone of competitive personalization. The implications are concrete:

- Abandonment Recovery: A user browsing high-value handbags could be shown a complementary wallet or strap in real time within the same session, giving them a reason to keep shopping before they leave and directly influencing conversion.

- Cross-Channel Cohesion: In-store clienteling apps can update a sales associate's tablet with real-time recommendations based on a client's recent online browsing, creating a seamless omnichannel experience.

- Event-Driven Marketing: A surge in views for a new sneaker collection can trigger real-time adjustments to homepage placements and email campaign content for user segments showing intent.

- Dynamic Lookbooks: Digital lookbooks or styling advice can adapt in real time as a user interacts with different items, building a personalized outfit.
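The event-driven marketing case above depends on detecting a surge in views as it happens. One simple way to do that, offered here only as an illustrative sketch, is to compare the view rate in a short recent window against the rate over a longer baseline. The class name `ViewSurgeDetector` and all thresholds are assumptions, and timestamps are assumed to arrive in non-decreasing order.

```python
from collections import deque


class ViewSurgeDetector:
    """Flags a surge when the recent view rate exceeds a multiple of the baseline rate."""

    def __init__(
        self,
        window_s: float = 60.0,     # recent window (assumed value)
        baseline_s: float = 600.0,  # total history kept, must exceed window_s
        ratio: float = 3.0,         # how many times the baseline rate counts as a surge
        min_views: int = 5,         # ignore tiny samples
    ) -> None:
        if baseline_s <= window_s:
            raise ValueError("baseline_s must be larger than window_s")
        self.window_s = window_s
        self.baseline_s = baseline_s
        self.ratio = ratio
        self.min_views = min_views
        self._timestamps: deque = deque()

    def record_view(self, ts: float) -> bool:
        """Record one view at time ts (seconds) and return True if a surge is detected."""
        self._timestamps.append(ts)
        # Drop views that have aged out of the baseline horizon.
        while self._timestamps and self._timestamps[0] <= ts - self.baseline_s:
            self._timestamps.popleft()

        recent = sum(1 for t in self._timestamps if t > ts - self.window_s)
        older = len(self._timestamps) - recent
        if recent < self.min_views:
            return False

        recent_rate = recent / self.window_s
        baseline_rate = older / (self.baseline_s - self.window_s)
        # With no older traffic at all, any burst above min_views counts as a surge.
        return recent_rate > self.ratio * baseline_rate
```

A detector like this would typically run per product or per collection inside the stream processor, with a positive result triggering the homepage or campaign adjustment.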

The business case is clear: real-time recommendations can directly increase average order value, conversion rate, and customer engagement by making every interaction contextually relevant. The gap for most organizations is not in the machine learning models themselves—many already use effective collaborative filtering or embedding-based techniques—but in building the robust, scalable data pipelines required to feed those models with live signals.

Implementation Approach & Complexity


Implementing this is a major platform engineering undertaking, not just an ML project. It requires:

- Mature Data Infrastructure: A reliable event-streaming backbone is non-negotiable.

- MLOps Evolution: Teams must adopt practices for continuous training and deployment of models that consume real-time features.

- Cross-Functional Teams: Close collaboration between data engineers, ML engineers, and platform teams is essential.

For a luxury brand, a pragmatic path might start with a "near-real-time" system (latency of seconds to a minute) for key user journeys before investing in full sub-second real-time capabilities.
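A near-real-time starting point is often implemented as micro-batching: events are buffered briefly and flushed together, trading a few seconds of latency for simpler infrastructure. The sketch below illustrates that idea under stated assumptions; `MicroBatcher`, its parameters, and the `flush` callback are hypothetical names, and the clock is injectable only to make the behavior easy to test.

```python
import time
from typing import Callable, List, Optional


class MicroBatcher:
    """Buffers events and flushes them when the batch is full or too old."""

    def __init__(
        self,
        flush: Callable[[List[dict]], None],
        max_size: int = 500,      # assumed batch size limit
        max_age_s: float = 5.0,   # assumed latency budget in seconds
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._flush = flush
        self._max_size = max_size
        self._max_age_s = max_age_s
        self._clock = clock
        self._buffer: List[dict] = []
        self._oldest: Optional[float] = None

    def add(self, event: dict) -> None:
        now = self._clock()
        if not self._buffer:
            self._oldest = now  # age is measured from the first buffered event
        self._buffer.append(event)
        # Note: age is only checked when an event arrives. A real deployment
        # would also flush from a timer so a quiet stream does not stall.
        if len(self._buffer) >= self._max_size or now - self._oldest >= self._max_age_s:
            self.flush_now()

    def flush_now(self) -> None:
        if self._buffer:
            batch, self._buffer = self._buffer, []
            self._flush(batch)
```

Tightening `max_age_s` moves the same code along the spectrum from near-real-time toward sub-second behavior, which is one way to stage the investment described above.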

Governance & Risk Assessment

Real-time systems amplify both opportunity and risk:

- Privacy & Data Sensitivity: Processing clickstream data in real time requires stringent governance. Anonymization and compliance with data protection and residency rules (such as GDPR) must be engineered into the streaming pipeline itself.

- Bias & Feedback Loops: A real-time system can rapidly amplify biases (e.g., over-recommending already popular items). Robust A/B testing and continuous monitoring for fairness are crucial.

- System Resilience: A failure in the real-time pipeline can directly degrade the customer experience. Investment in high availability, fallback strategies (to a batch model), and comprehensive monitoring is a business imperative.
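The fallback strategy mentioned under system resilience can be sketched as a latency-bounded call that degrades to precomputed batch results. This is a minimal illustration, not a prescribed design: `recommend_with_fallback`, the 50 ms default timeout, and the `batch_recs` lookup table are all assumptions.

```python
import concurrent.futures
from typing import Callable, Dict, List, Sequence, Tuple

# Shared worker pool for real-time calls (pool size is an assumed value).
_executor = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def recommend_with_fallback(
    user_id: str,
    realtime_fn: Callable[[str], Sequence[str]],
    batch_recs: Dict[str, Sequence[str]],
    timeout_s: float = 0.05,
    default: Sequence[str] = (),
) -> Tuple[List[str], str]:
    """Return (recommendations, source), where source is "realtime" or "batch"."""
    future = _executor.submit(realtime_fn, user_id)
    try:
        return list(future.result(timeout=timeout_s)), "realtime"
    except concurrent.futures.TimeoutError:
        # Best effort only: a call that is already running cannot be interrupted.
        future.cancel()
    except Exception:
        # Any failure in the real-time path degrades to batch instead of erroring.
        pass
    return list(batch_recs.get(user_id, default)), "batch"
```

Returning the source alongside the results makes it straightforward to monitor how often the system is running in degraded mode.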

gentic.news Analysis

This guide fits squarely into an ongoing strategic shift we are tracking. Recommender Systems is a core research topic we've covered in 12 prior articles, indicating its sustained importance to our audience. The focus here on real-time execution is the logical next step following recent research into more powerful modeling techniques. For instance, our coverage of the "IAT: Instance-As-Token Compression" paper (2026-04-13) addressed efficient modeling of long user sequences—a key capability for understanding context. The findings from the "No Saturation Point for Data" study (2026-04-09) reinforce that better data, including fresher real-time signals, continues to improve model performance.

The practical guide bridges the gap between that advancing academic research and production reality. It underscores that the competitive edge in luxury retail personalization will increasingly be determined by engineering excellence in data infrastructure: the ability to operationalize research insights at speed and scale. Git, a tool that appears in our knowledge graph and in 6 prior articles, is a subtle reminder that building these complex systems is fundamentally a software engineering challenge requiring robust version control and collaboration, which aligns with the guide's practical, implementation-focused tone. The trend is clear: the battlefield is moving from model algorithms to model infrastructure.