The Missing Piece in Claude Code Learning Systems

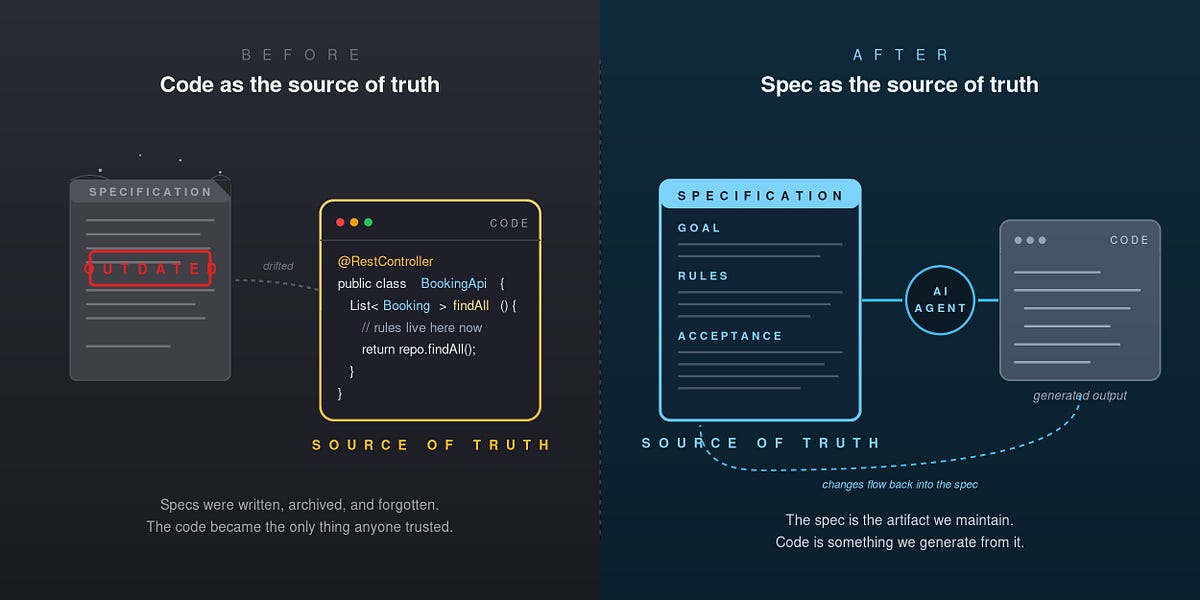

If you're using terraphim-agent to capture Claude Code's failed commands and build a learning database, you've solved half the problem. The system captures mistakes, stores corrections, and lets you query them. But until now, there was no way to prove Claude was actually following those corrections.

This changed when the terraphim team implemented verification sweeps inspired by Meta Alchemist's viral guide on self-evolving Claude Code systems. The key insight: rules without verification are just suggestions. Rules with verification are guardrails.

What Verification Sweeps Actually Do

Verification sweeps add machine-checkable enforcement to your learned rules. Instead of hoping Claude remembers "always use uv instead of pip," you add a grep pattern that gets checked at session start:

# In .claude/memory/learned-rules.md

- Never use pip, pip3, or pipx; always use uv instead.

verify: Grep("pip install|pip3 install|pipx install", path="automation/") -> 0 matches

[source: CLAUDE.md convention, terraphim-agent learning #4, 2026-03-30]

The verification sweep runs at the start of a Claude Code session, before any significant work, and checks every rule that has a verify: pattern. If grep finds violations, you get a report showing which rules failed and in which path.
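To make the mechanism concrete, here is a minimal sketch of what a single verify: check reduces to: a recursive grep whose match count is compared with the expected number. The temporary directory, the `setup.sh` file, and the variable names are made up for this demo and are not part of terraphim-agent.

```shell
#!/usr/bin/env bash
# Sketch of what a single verify: check reduces to.
# The demo directory and file names are assumptions made for illustration.

demo_dir="$(mktemp -d)"
mkdir -p "$demo_dir/automation"
printf 'uv sync\npip install requests\n' > "$demo_dir/automation/setup.sh"

pattern='pip install|pip3 install|pipx install'

# -r recurses, -n prints line numbers, -E makes "|" mean "or".
violations="$(grep -rnE -- "$pattern" "$demo_dir/automation")"

# Count non-empty result lines; an empty result counts as 0.
count="$(printf '%s' "$violations" | grep -c .)"

echo "matches: $count (rule expects 0)"
echo "$violations"

rm -rf "$demo_dir"
```

One caveat worth checking in your own rules: a plain `pip install` pattern also matches `uv pip install`, which is a valid uv command, so a pattern like this may need tightening before it is graduated.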

How terraphim-agent's Approach Differs

While Meta Alchemist's guide builds everything from scratch with JSONL files, terraphim-agent integrates verification into its existing Rust CLI infrastructure. This avoids the split-brain problem of having two learning systems.

Here's what they added:

- learned-rules.md with verify patterns - Graduated rules live in .claude/memory/learned-rules.md with machine-checkable grep patterns

- Verification shell script - A thin shell layer that runs grep checks against your codebase

- Session scorecard generation - Quantitative tracking of rules checked, passed, and violated

Set Up Verification in 3 Steps

If you already have terraphim-agent configured with Claude Code hooks:

Step 1: Create learned-rules.md

mkdir -p .claude/memory

cat > .claude/memory/learned-rules.md << 'EOF'

# Learned Rules

Rules graduated from terraphim-agent corrections and CLAUDE.md conventions.

Each rule has a `verify:` pattern checked by the /boot verification sweep.

---

- Never use pip, pip3, or pipx; always use uv instead.

verify: Grep("pip install|pip3 install|pipx install", path="automation/") -> 0 matches

[source: CLAUDE.md convention, terraphim-agent learning #4, 2026-03-30]

- Never use npm, yarn, or pnpm; always use bun instead.

verify: Grep("npm install|yarn add|pnpm add", path="automation/") -> 0 matches

[source: CLAUDE.md convention, terraphim KG hook replacement, 2026-03-30]

EOF

Step 2: Add the verification sweep to CLAUDE.md

Add this section to CLAUDE.md:

````markdown
## Verification Sweep

Before starting any significant work, run the verification sweep:

```bash
./scripts/verify-rules.sh
```

This checks all learned rules with verify: patterns and reports violations.
````

Step 3: Create the verification script

```bash
mkdir -p scripts

cat > scripts/verify-rules.sh << 'EOF'
#!/bin/bash
# Verification sweep for learned rules

RULES_FILE=".claude/memory/learned-rules.md"
TOTAL=0
PASSED=0
FAILED=0

# Keep the regex in a variable: bash matches quoted text after =~ literally,
# so an inline pattern containing double quotes would never match a rule line.
VERIFY_RE='verify: Grep\("(.*)", path="(.*)"\) -> ([0-9]+) matches'

echo "Running verification sweep..."
echo ""

while IFS= read -r line; do
  if [[ "$line" =~ $VERIFY_RE ]]; then
    pattern="${BASH_REMATCH[1]}"
    path="${BASH_REMATCH[2]}"
    expected="${BASH_REMATCH[3]}"
    TOTAL=$((TOTAL + 1))

    # -E enables extended regex so that "|" in a pattern means alternation;
    # without it, grep looks for a literal "|" and every rule passes.
    matches=$(grep -rE -- "$pattern" "$path" 2>/dev/null | wc -l | tr -d ' ')

    if [[ "$matches" -eq "$expected" ]]; then
      echo "✅ PASS: '$pattern' in $path (found $matches, expected $expected)"
      PASSED=$((PASSED + 1))
    else
      echo "❌ FAIL: '$pattern' in $path (found $matches, expected $expected)"
      FAILED=$((FAILED + 1))
    fi
  fi
done < "$RULES_FILE"

echo ""
echo "Scorecard: $PASSED/$TOTAL passed, $FAILED violations"
EOF

chmod +x scripts/verify-rules.sh
```
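The line-matching step is the subtle part of a script like this. In bash, quoted text on the right-hand side of `=~` is matched as a literal string rather than as a regex, so a pattern that contains double quotes is easier to get right when it is stored in a variable first. The sketch below isolates that step; `parse_verify_line` is a hypothetical helper name used only for illustration.

```shell
#!/usr/bin/env bash
# Sketch: split one verify: line into pattern, path, and expected count.
# parse_verify_line is a hypothetical helper, not part of terraphim-agent.

parse_verify_line() {
  local line="$1"
  # Stored in a variable so the double quotes are part of the regex itself.
  local re='verify: Grep\("(.*)", path="(.*)"\) -> ([0-9]+) matches'
  if [[ "$line" =~ $re ]]; then
    printf '%s\n%s\n%s\n' \
      "${BASH_REMATCH[1]}" "${BASH_REMATCH[2]}" "${BASH_REMATCH[3]}"
  else
    return 1
  fi
}

sample='verify: Grep("npm install|yarn add|pnpm add", path="automation/") -> 0 matches'
parsed="$(parse_verify_line "$sample")"
echo "$parsed"
```

The three printed lines are the grep pattern, the search path, and the expected match count. A line that is not a verify: rule makes the function return a non-zero status, which lets a caller skip it.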

Why This Beats Manual Rule Enforcement

The verification layer solves three critical problems:

- Context window limitations - Claude might forget rules when context fills up

- Inconsistent application - Different Claude instances might interpret rules differently

- No audit trail - You can't prove rules are being followed

With verification sweeps, you get machine-enforced consistency. The grep patterns run against your actual codebase, not Claude's memory.

Graduation Process: From Capture to Enforcement

Here's the complete workflow:

- Capture: terraphim-agent logs failed commands from Claude Code

- Correct: You add corrections via terraphim-agent learn correct

- Verify: Add grep patterns to graduated rules

- Enforce: Verification sweeps run at session start

- Audit: Scorecards track compliance over time
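The graduation step in this workflow is manual, but the mechanical part of it can be sketched. The example below appends a rule in the same three-line format used in learned-rules.md. Note that `graduate_rule` is a hypothetical helper written for this illustration, not a terraphim-agent command, and the temporary file path is only for the demo.

```shell
#!/usr/bin/env bash
# Sketch: append a graduated rule in the learned-rules.md format.
# graduate_rule is a hypothetical helper, not a terraphim-agent command.

graduate_rule() {
  local rules_file="$1" rule="$2" pattern="$3" path="$4" source="$5"
  mkdir -p "$(dirname "$rules_file")"
  {
    printf -- '- %s\n' "$rule"
    printf 'verify: Grep("%s", path="%s") -> 0 matches\n' "$pattern" "$path"
    printf '[source: %s]\n\n' "$source"
  } >> "$rules_file"
}

# Demo against a throwaway file instead of the real .claude/memory/ location.
rules_file="$(mktemp -d)/learned-rules.md"
graduate_rule "$rules_file" \
  "Never use npm, yarn, or pnpm; always use bun instead." \
  "npm install|yarn add|pnpm add" "automation/" \
  "CLAUDE.md convention, 2026-03-30"

cat "$rules_file"
```

Keeping this as an explicit call, rather than something triggered automatically, matches the idea that a person decides when a correction is ready to become an enforced rule.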

This fits how Anthropic positions Claude Code: an agentic tool meant to be extended from the outside, for example through the Model Context Protocol (MCP) ecosystem. As described here, terraphim-agent's verification layer is a shell script plus a CLAUDE.md instruction rather than an MCP server, but it plays a similar role by adding machine-checkable guardrails around the agent.

What They Deliberately Didn't Copy

The terraphim team rejected auto-promotion (where corrections automatically become rules after two occurrences). Why? Context matters. A correction that's right for one project might be wrong for another. Instead, they require explicit graduation with CTO approval.

They also avoided the all-JSONL approach, sticking with terraphim-agent's structured file storage with frontmatter. This maintains a single source of truth instead of creating parallel learning systems.

Start Small, Verify Everything

Begin with 2-3 critical rules:

- Package manager preferences (uv over pip, bun over npm)

- Security patterns (no hardcoded secrets)

- Code style violations
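For the security item, it helps to try a candidate pattern on sample input before graduating it. The sketch below does that for a "no hardcoded secrets" rule. The pattern is a rough heuristic chosen for this demo, not a vetted secret scanner, and the `src/config.py` file is invented for the example.

```shell
#!/usr/bin/env bash
# Sketch: try out a candidate "no hardcoded secrets" pattern.
# The pattern and the sample file are assumptions made for this demo.

demo_dir="$(mktemp -d)"
mkdir -p "$demo_dir/src"
cat > "$demo_dir/src/config.py" << 'EOF'
import os
api_key = "sk-test-123"
password = os.environ["DB_PASSWORD"]
EOF

# Flags a secret-like name assigned directly to a quoted string literal.
secret_pattern='(api_key|secret|password)[[:space:]]*=[[:space:]]*"[^"]+"'

secret_hits="$(grep -rnE -- "$secret_pattern" "$demo_dir/src" | wc -l)"
echo "hardcoded-secret matches: $((secret_hits))"

rm -rf "$demo_dir"
```

Only the literal assignment is flagged; the line that reads the password from the environment is not. Expect to tune a pattern like this against your own codebase to keep false positives manageable.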

Run verification sweeps weekly at first, then daily or at every session start. Your scorecard should improve as violations are caught and fixed and the verified rules stay in front of Claude. That loop is what makes the system self-evolving: capture mistakes, add corrections, verify compliance, repeat.
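Tracking improvement over time requires keeping each sweep's numbers somewhere. One simple option, sketched below, is to append a dated row per sweep to a CSV file. The `record_scorecard` function and the `scorecards.csv` file name are assumptions for this example, not features of terraphim-agent.

```shell
#!/usr/bin/env bash
# Sketch: keep a dated history of scorecards so trends are visible.
# record_scorecard and scorecards.csv are hypothetical names.

record_scorecard() {
  local history_file="$1" total="$2" passed="$3" failed="$4"
  # Write the header once, when the file is first created.
  [[ -f "$history_file" ]] || echo "date,total,passed,failed" > "$history_file"
  echo "$(date +%F),$total,$passed,$failed" >> "$history_file"
}

# Demo against a throwaway file with two made-up sweep results.
history_file="$(mktemp -d)/scorecards.csv"
record_scorecard "$history_file" 5 4 1
record_scorecard "$history_file" 5 5 0

cat "$history_file"
```

In practice the three numbers would come from the TOTAL, PASSED, and FAILED counters at the end of the verification script.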