In a significant advancement for artificial intelligence research, a team has developed what they describe as a "minimax optimal strategy" for reinforcement learning (RL) with delayed state observations. Published on arXiv on March 3, 2026, the research addresses a critical limitation that has hampered RL deployment in real-world applications where perfect, instantaneous feedback is impossible.

The Delay Problem in Real-World AI

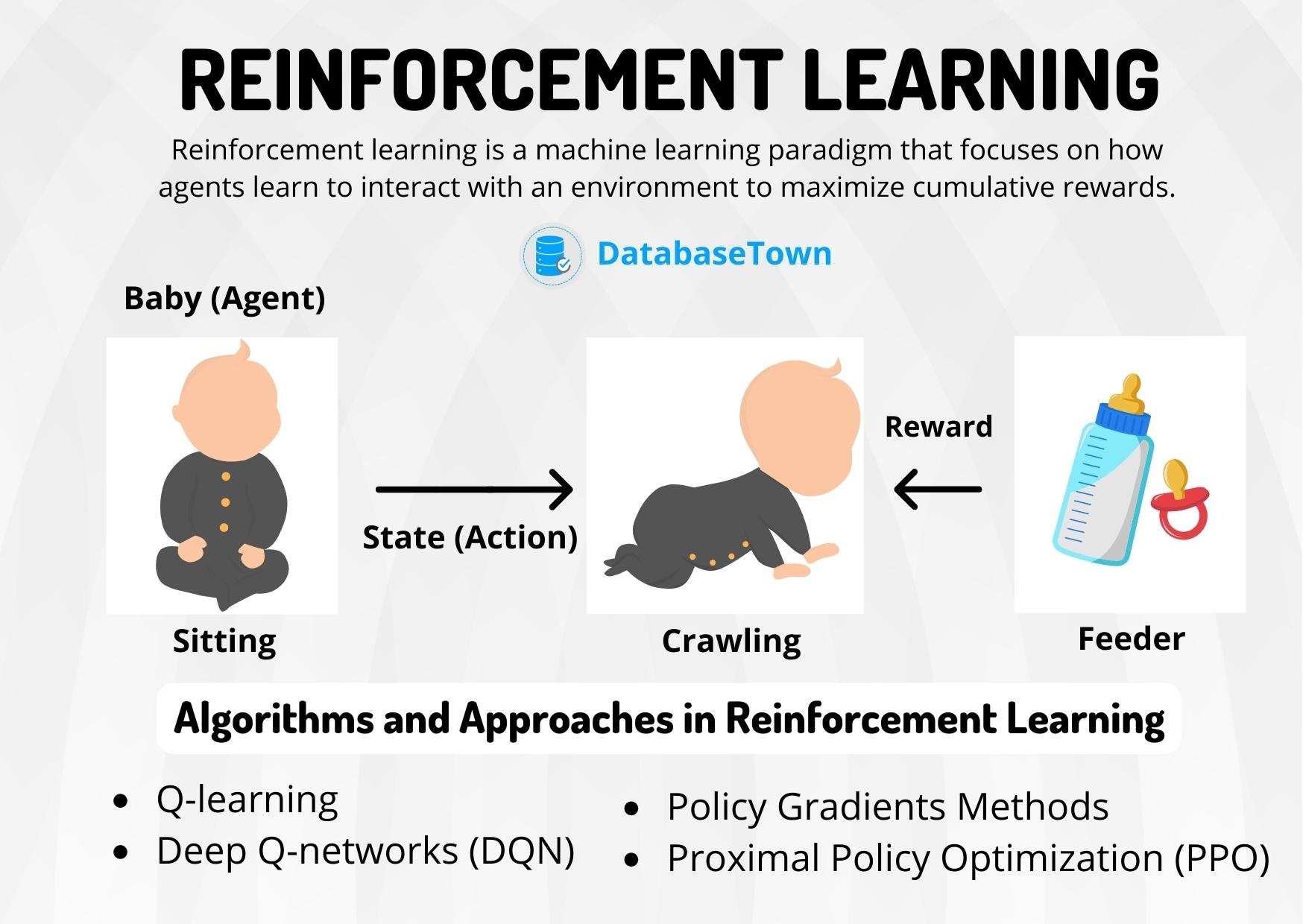

Reinforcement learning, where AI agents learn optimal behaviors through trial-and-error interactions with environments, has achieved remarkable successes in controlled settings like game playing and simulations. However, real-world applications—from autonomous vehicles to industrial robotics—inevitably involve delays between actions and observations. Sensors have processing time, communication networks introduce latency, and computational resources are finite.

"The agent observes the current state after some random number of time steps," the researchers explain in their abstract. This creates a fundamental mismatch between theoretical RL models and practical implementations. Previous approaches either ignored delays (leading to poor performance) or used suboptimal workarounds with significant performance penalties.

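To make the setting concrete, here is a minimal Python sketch of a delayed observation stream. It assumes each state's delay is drawn uniformly from 0 to `d_max` steps; that distribution, the class name `DelayedObserver`, and the helper `run_demo` are illustrative assumptions, not details taken from the paper.

```python
import random
from collections import defaultdict


class DelayedObserver:
    """Hypothetical helper: releases each state after a random, bounded delay."""

    def __init__(self, d_max, seed=0):
        self.d_max = d_max
        self.rng = random.Random(seed)
        # Maps arrival step -> list of (generation step, state) pairs.
        self.pending = defaultdict(list)

    def push(self, t, state):
        """Record the state generated at step t; it becomes visible at t + delay."""
        delay = self.rng.randint(0, self.d_max)  # assumed uniform, inclusive bounds
        self.pending[t + delay].append((t, state))

    def pop(self, t):
        """Return every (generation step, state) pair that arrives at step t."""
        return sorted(self.pending.pop(t, []))


def run_demo(num_steps=6, d_max=2):
    """Generate num_steps states and log what the agent sees at each step."""
    observer = DelayedObserver(d_max)
    log = []
    # Run d_max extra steps so the last generated states can still arrive.
    for t in range(num_steps + d_max + 1):
        if t < num_steps:
            observer.push(t, f"s{t}")
        log.append((t, observer.pop(t)))
    return log


if __name__ == "__main__":
    for step, arrivals in run_demo():
        print(step, arrivals)
```

At some steps the agent sees nothing new and at others several states arrive at once, which is the mismatch with standard RL models that the paper targets.
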
The Algorithmic Breakthrough

The proposed solution combines two established techniques in novel ways: the augmentation method and the upper confidence bound (UCB) approach. The augmentation method transforms the delayed observation problem into a larger Markov Decision Process (MDP) where delays become part of the state representation. The UCB approach then provides the exploration-exploitation balance needed for efficient learning.
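
As a rough illustration of these two ingredients, the Python sketch below assumes a constant delay of `d` steps, which is a simplification of the random delays the paper studies. Under that assumption, the augmented state is the last observed state together with the actions taken since. The function names, the reward range of [0, 1], and the simple count-based bonus are illustrative assumptions, not the authors' implementation.

```python
import math


def make_augmented_state(last_observed_state, pending_actions):
    """Augmented state = last observed state + actions taken since it was observed."""
    return (last_observed_state, tuple(pending_actions))


def step_augmented(aug_state, action, newly_observed_state):
    """With a constant delay, one new state is revealed per step, so the
    action window drops its oldest entry and appends the newest action."""
    _, pending = aug_state
    return make_augmented_state(newly_observed_state, pending[1:] + (action,))


def augmented_state_count(num_states, num_actions, delay):
    """Size of the naive augmented state space for a fixed delay: S * A^delay."""
    return num_states * num_actions ** delay


def ucb_bonus(visit_count, horizon, scale=1.0):
    """Generic count-based exploration bonus that shrinks as 1/sqrt(visits).
    The constants here are placeholders, not the paper's."""
    return scale * horizon / math.sqrt(max(visit_count, 1))


def optimistic_value(estimated_value, visit_count, horizon):
    """Optimism under uncertainty: add the bonus, then clip at the largest
    possible return (horizon, assuming per-step rewards in [0, 1])."""
    return min(horizon, estimated_value + ucb_bonus(visit_count, horizon))


if __name__ == "__main__":
    state = make_augmented_state(0, [1, 0])
    print(step_augmented(state, 1, 3))
    print(augmented_state_count(5, 2, 3))
    print(optimistic_value(3.0, 4, 10))
```

This naive construction has $S \cdot A^{d}$ augmented states, while the regret bound reported for the algorithm depends on the delay only through $\sqrt{D_{\max}}$. That suggests the analysis exploits more structure than treating the augmented problem as a generic MDP.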

For tabular MDPs, a fundamental class of RL problems with discrete states and actions, the team derived a regret bound of $\tilde{\mathcal{O}}(H \sqrt{D_{\max} SAK})$. Regret measures the cumulative shortfall in reward relative to an optimal policy over all episodes, so a smaller bound means faster learning. In this expression:

- $S$ and $A$ are state and action space sizes

- $H$ is the time horizon

- $K$ is the number of learning episodes

- $D_{\max}$ is the maximum delay length

The $\tilde{\mathcal{O}}$ notation hides logarithmic factors. By itself, the expression is an upper bound on regret; the claim that no algorithm can do better rests on the matching lower bound described in the next section.
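
To get a feel for the scaling, the short Python sketch below evaluates only the leading term of the bound, dropping constants and logarithmic factors, so the numbers are not actual regret values. The function name `regret_leading_term` and the example parameter values are illustrative.

```python
import math


def regret_leading_term(H, D_max, S, A, K):
    """Leading term H * sqrt(D_max * S * A * K) of the reported bound.
    Constants and logarithmic factors are deliberately omitted."""
    return H * math.sqrt(D_max * S * A * K)


if __name__ == "__main__":
    for K in (1000, 4000):
        total = regret_leading_term(H=10, D_max=4, S=5, A=2, K=K)
        print(K, total, total / K)
```

Quadrupling the number of episodes doubles the leading term, so the average per episode is halved: the bound grows with $\sqrt{K}$ rather than linearly in $K$.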

Proving Optimality

Perhaps most significantly, the researchers provided a matching lower bound: no algorithm can guarantee lower worst-case regret than theirs, up to logarithmic factors. This "minimax optimality" proof means the achievable regret rate for delayed observations in tabular MDPs is settled, up to those factors.

"We establish general results for this abstract setting, which may be of independent interest," the authors note, suggesting their analytical framework could apply to other RL problems beyond just delayed observations.

Broader Implications for AI Development

The research arrives at a critical moment for reinforcement learning. Recent arXiv preprints have highlighted growing concerns about AI benchmark saturation and safety limitations. Shortly before this paper appeared, studies posted on February 20 reported that "nearly half of major AI benchmarks are saturated" and revealed "critical flaws in AI safety where text safety doesn't translate to action safety."

This delay-robust RL approach speaks to both concerns. By designing algorithms with performance guarantees under realistic constraints, researchers move beyond artificial benchmarks toward practical deployment. The safety implications are also significant: systems that properly account for observation delays are less likely to make catastrophic errors in time-sensitive applications.

Technical Innovation and Future Directions

The algorithm's architecture represents a sophisticated balance between theoretical elegance and practical implementability. By formulating delayed observation RL as "a special case of a broader class of MDPs where their transition dynamics decompose into a known component and an unknown but structured component," the researchers created a framework that others can build upon.
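
One way to picture this decomposition is sketched below in Python, under the simplifying assumption of a constant delay (the paper itself handles random delays). In this reading, the shifting of the pending-action window is the known component, and the transition kernel of the underlying MDP is the unknown component the agent must learn. The function `augmented_transition` and the dictionary `base_kernel` are hypothetical names introduced for illustration.

```python
import random


def augmented_transition(aug_state, action, base_kernel, rng):
    """One step of the augmented process for a constant delay of at least 1.

    aug_state   : (last observed state, tuple of actions taken since)
    base_kernel : dict mapping (state, action) -> list of next-state probabilities;
                  this is the UNKNOWN part a learner would have to estimate
    """
    last_state, pending = aug_state
    oldest_action = pending[0]

    # Unknown component: the next revealed state follows the base MDP dynamics,
    # driven by the oldest action that has not yet taken visible effect.
    probs = base_kernel[(last_state, oldest_action)]
    next_state = rng.choices(range(len(probs)), weights=probs)[0]

    # Known component: the action window shifts deterministically.
    return (next_state, pending[1:] + (action,))


if __name__ == "__main__":
    # Toy two-state, two-action base MDP with deterministic transitions.
    kernel = {
        (0, 0): [0.0, 1.0],
        (0, 1): [1.0, 0.0],
        (1, 0): [1.0, 0.0],
        (1, 1): [0.0, 1.0],
    }
    rng = random.Random(0)
    print(augmented_transition((0, (1, 0)), 1, kernel, rng))
```

Only `base_kernel` needs to be estimated from data here; the bookkeeping around it is fixed in advance, which is the kind of structure a learner can exploit.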

This work connects to other recent arXiv publications, including a March 3 study on "Novel RL approach provides probabilistic stability guarantees with finite data samples" and a February 26 paper showing "structured reasoning frameworks dramatically improve AI performance on complex reasoning tasks." Together, these represent a growing trend toward theoretically grounded, practically robust AI systems.

Real-World Applications

The implications span numerous domains:

Autonomous Systems: Self-driving cars must account for sensor processing delays when making split-second decisions. This algorithm provides provable guarantees about performance under such conditions.

Industrial Robotics: Manufacturing robots with communication delays between sensors and controllers can still learn near-optimal behavior despite the lag, at least in settings the analysis covers.

Healthcare AI: Diagnostic systems that integrate delayed lab results or imaging data can learn optimal decision policies despite temporal gaps in information.

Financial Trading: Algorithmic trading systems must account for market data latency while making rapid decisions.

Challenges and Limitations

While theoretically optimal for tabular MDPs, real-world applications often involve continuous or extremely large state spaces. The researchers acknowledge that extending their approach to function approximation settings (like deep reinforcement learning) remains an important direction for future work.

Additionally, the current analysis assumes delays are bounded by $D_{\max}$. Unbounded delays or extremely long delays would require different approaches. The random delay model, while more realistic than fixed delays, still represents a simplification of complex real-world latency patterns.

The Research Context

This publication reflects arXiv's role as a leading venue for rapid dissemination of AI research. Although arXiv preprints are not peer-reviewed, the repository has become essential reading for AI researchers, with recent high-impact preprints on benchmark limitations, safety concerns, and now fundamental algorithmic advances.

The timing is particularly noteworthy given increasing calls for more rigorous theoretical foundations in AI. As benchmarks become saturated and safety concerns grow, mathematically grounded approaches like this delayed observation algorithm provide a path forward toward reliable, deployable AI systems.

Conclusion

The development of a minimax optimal algorithm for delayed observation reinforcement learning represents a significant milestone in making AI systems robust to real-world constraints. By providing both an implementable algorithm and proof of its optimality, the researchers have addressed a long-standing gap between RL theory and practice.

As AI systems move from controlled environments to complex real-world applications, accounting for inevitable delays becomes increasingly critical. This research provides both a specific solution and a broader framework for thinking about structured uncertainty in learning systems—advancing not just delayed observation RL, but the entire field of robust machine learning.

Source: arXiv:2603.03480v1 "Minimax Optimal Strategy for Delayed Observations in Online Reinforcement Learning" (March 3, 2026)