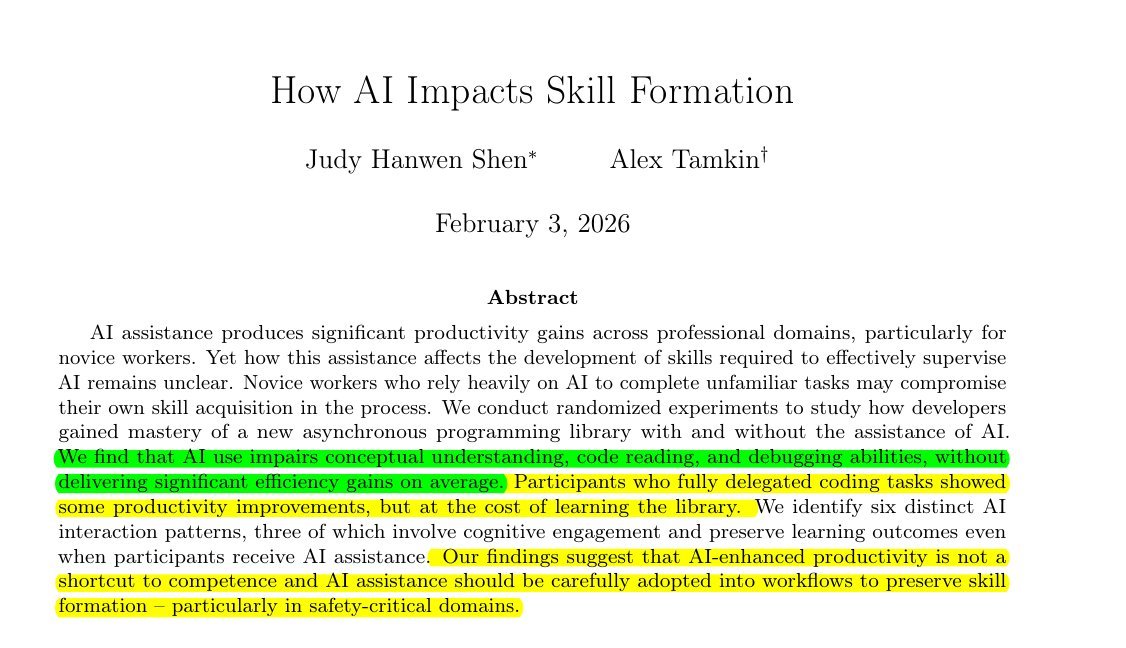

A new study from AI safety company Anthropic finds that relying on AI coding assistants, including the "vibe-coding" style in which developers delegate substantial code generation to AI, can significantly impair the formation of fundamental programming skills. The research, titled "How AI Impacts Skill Formation," suggests these tools may carry hidden costs for developer education and long-term competency.

The Study's Key Findings

The Anthropic research examined how developers learning a new Python library performed with and without AI assistance. The results were striking: developers who used AI scored 17% lower on skill assessments than those who learned without it. This gap suggests that relying on AI for code generation interferes with the learning needed to build programming expertise.

Perhaps most concerning was the finding that participants who let AI write everything scored below 40% on assessments, while those who asked AI only about simple concepts scored above 65%. This suggests that how heavily participants relied on AI tracked closely with how much their skills suffered, with complete delegation producing the worst outcomes.

Efficiency Gains Prove Elusive

Contrary to popular assumptions about AI productivity tools, the study found that using AI did not make programmers statistically significantly faster at completing tasks. Participants often spent time composing prompts rather than writing code, suggesting that the promised efficiency benefits of AI coding assistants may be overstated in many development scenarios.

"AI use impairs conceptual understanding, code reading, and debugging abilities, without delivering significant efficiency gains on average," the researchers concluded. This challenges the narrative that AI tools universally enhance developer productivity, particularly for those still building foundational skills.

The Skill Erosion Problem

The research highlights a fundamental tension in modern software development: delegating code generation to AI may prevent developers from actually understanding the software they're creating. This has serious implications for long-term maintenance and debugging capabilities.

"Forcing top speed means workers lose the ability to debug systems later," the study warns. This finding suggests that pressure to maximize short-term productivity through AI tools could create technical debt in the form of underdeveloped developer skills, potentially compromising software quality and maintainability.

Implications for Development Teams and Management

The Anthropic study carries important implications for how development teams integrate AI tools. The researchers caution that "managers should not pressure engineers to use AI for endless productivity," as this approach may ultimately harm both individual developer growth and team capabilities.

Instead, the research suggests more nuanced approaches to AI adoption, where tools are used selectively for specific tasks rather than as wholesale replacements for human coding. The performance difference between developers who used AI minimally (for simple concepts) versus extensively (for complete code generation) indicates that strategic, limited use may preserve learning outcomes while still providing some assistance.

Broader Context in AI-Assisted Development

This research arrives amid growing debate about the long-term effects of AI on professional skills across multiple domains. While previous studies have focused primarily on productivity metrics, Anthropic's work represents one of the first systematic examinations of how AI affects skill formation itself.

The findings align with broader educational research showing that outsourcing cognitive work to tools can interfere with learning. Just as calculators can hinder mathematical understanding if introduced too early, AI coding assistants may disrupt the development of programming intuition and problem-solving abilities.

Future Research Directions

The study, available on arXiv (arxiv.org/abs/2601.20245), opens several important questions for future investigation. Researchers will need to examine whether these effects persist with more experienced developers, how different types of AI assistance affect various programming skills, and whether modified approaches to AI tool design could mitigate these negative impacts.

Additionally, the study raises questions about how AI coding assistants might be designed to support skill development rather than substitute for it, for example through features that encourage understanding rather than mere code generation.

Conclusion

Anthropic's research provides crucial evidence that the rush to adopt AI coding tools requires more careful consideration of their educational impacts. While these tools offer undeniable capabilities, their potential to undermine fundamental skill development suggests organizations should implement them thoughtfully, with attention to preserving learning opportunities and maintaining deep technical understanding among their development teams.

The study serves as a reminder that technological advancement should enhance rather than diminish human capabilities, and that the most valuable tools are those that augment rather than replace essential skills.