What Happened

A new technical guide published on Medium provides a code-first walkthrough for deploying an Azure Machine Learning (Azure ML) workspace using Terraform. The article positions the Azure ML workspace as the foundational hub for all machine learning activities, including running experiments, managing models, deploying endpoints, and orchestrating pipelines. The core premise is that by defining this critical infrastructure as code (IaC) with Terraform, teams can provision their ML platform on Microsoft Azure in a reproducible, version-controlled, and automated way.

The guide likely addresses the initial setup complexity, noting that an Azure ML workspace cannot be created on its own: it requires four dependent Azure resources to be provisioned first. Terraform streamlines this by codifying the dependencies and their configurations, so the same definitions can be applied to development, staging, and production. This approach is a cornerstone of modern MLOps, aiming to reduce manual errors and accelerate the path from experimentation to production.
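One common way to reuse a single configuration across development, staging, and production is to drive it from an environment input. The fragment below is a minimal sketch of that idea, not code from the guide; the variable name, the naming convention, and the tag keys are all assumptions made for illustration.

```hcl
# Hypothetical input: the environment this configuration is applied to.
variable "environment" {
  type        = string
  description = "Deployment environment name."

  # Reject anything other than the three environments named in the text.
  validation {
    condition     = contains(["dev", "staging", "prod"], var.environment)
    error_message = "The environment must be one of: dev, staging, prod."
  }
}

locals {
  # Hypothetical naming convention; adjust to your organization's standard.
  name_suffix = "ml-${var.environment}"

  # Tags that could be attached to every resource in the environment.
  common_tags = {
    environment = var.environment
    managed_by  = "terraform"
  }
}

# Expose the derived resource group name so it can be inspected after apply.
output "resource_group_name" {
  value = "rg-${local.name_suffix}"
}
```

With a layout like this, the environment could be selected at apply time, for example with `terraform apply -var="environment=staging"` or a per-environment file passed through `-var-file`.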

Technical Details

While the full article is behind Medium's paywall, the summary indicates a focus on practical implementation. The key technical components involved are:

- Azure Machine Learning Workspace: The top-level resource that provides a centralized place to manage assets, compute, and data for ML projects.

- Terraform: An open-source infrastructure-as-code tool from HashiCorp. It uses declarative configuration files to manage cloud services, enabling teams to treat infrastructure like software—versioned, reusable, and collaborative.

- Dependent Azure Resources: The guide highlights that provisioning the workspace requires four other Azure services. These typically include:

  - An Azure Resource Group for logical organization.

  - An Azure Storage Account (blob or file) for storing datasets, experiment outputs, and trained models.

  - An Azure Key Vault for managing secrets, such as credentials and connection strings.

  - An Azure Application Insights resource for monitoring and logging the performance of deployed models and pipelines.

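By defining all these interconnected resources in Terraform configuration files (.tf files, optionally grouped into reusable modules), practitioners can initialize the working directory once with terraform init and then run terraform apply to spin up a complete ML foundation that follows the team's approved settings. Terraform infers the creation order from the references between resources, so the workspace is created only after its dependencies exist. This replaces the manual, error-prone process of clicking through the Azure portal and keeps environments built from the same configuration consistent with one another.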
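The full article's code is not available here, so the following is only a minimal sketch of what such a configuration could look like with the `azurerm` provider. All resource names, the region, the SKU choices, and the provider version pin are assumptions for illustration; storage account and Key Vault names in particular must be globally unique, so the placeholders would need to be changed.

```hcl
terraform {
  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 3.0" # assumed version pin
    }
  }
}

provider "azurerm" {
  features {}
}

# Looks up the tenant of the identity running Terraform (needed by Key Vault).
data "azurerm_client_config" "current" {}

resource "azurerm_resource_group" "ml" {
  name     = "rg-ml-example" # placeholder name
  location = "West Europe"   # placeholder region
}

resource "azurerm_storage_account" "ml" {
  name                     = "stmlexample001" # placeholder, must be globally unique
  location                 = azurerm_resource_group.ml.location
  resource_group_name      = azurerm_resource_group.ml.name
  account_tier             = "Standard"
  account_replication_type = "LRS"
}

resource "azurerm_key_vault" "ml" {
  name                = "kv-ml-example-001" # placeholder, must be globally unique
  location            = azurerm_resource_group.ml.location
  resource_group_name = azurerm_resource_group.ml.name
  tenant_id           = data.azurerm_client_config.current.tenant_id
  sku_name            = "standard"
}

resource "azurerm_application_insights" "ml" {
  name                = "appi-ml-example" # placeholder name
  location            = azurerm_resource_group.ml.location
  resource_group_name = azurerm_resource_group.ml.name
  application_type    = "web"
}

# The workspace references the IDs of its dependencies, which is how
# Terraform learns that they must be created first.
resource "azurerm_machine_learning_workspace" "ml" {
  name                    = "mlw-example" # placeholder name
  location                = azurerm_resource_group.ml.location
  resource_group_name     = azurerm_resource_group.ml.name
  storage_account_id      = azurerm_storage_account.ml.id
  key_vault_id            = azurerm_key_vault.ml.id
  application_insights_id = azurerm_application_insights.ml.id

  identity {
    type = "SystemAssigned"
  }
}
```

Running `terraform plan` before `terraform apply` shows the resources that would be created, which is a useful review step before anything is provisioned.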

Retail & Luxury Implications

For retail and luxury AI teams, the implications are about operational maturity and scalability, not a specific AI model. The ability to reliably stand up and tear down ML platforms is a prerequisite for executing the sophisticated use cases the industry demands.

- Rapid Experimentation & A/B Testing: Teams working on dynamic pricing algorithms, visual search models, or next-generation recommendation systems need isolated, identical environments to test new ideas. Terraform-managed workspaces allow data scientists to self-serve a sandbox in minutes, not days.

- Governance and Compliance: Luxury brands handling sensitive customer data (e.g., for hyper-personalization) require strict controls. Infrastructure as code supports compliance by baking security settings (like private network endpoints for the workspace) directly into the approved Terraform templates. Because the templates are the source of truth, running terraform plan surfaces any drift between the deployed environment and the approved configuration.

- Cost Control and Efficiency: ML compute (like GPU clusters for training vision models on product imagery) is expensive. Terraform lets teams define cost-related settings directly in code, for example configuring training clusters to scale down to zero nodes after a period of idleness, so that costly resources are not left running when not in use.

- Team Scalability: As AI initiatives grow from a single team to a center of excellence, a standardized, automated platform setup is critical. New team members can deploy a fully configured workspace with all necessary permissions and connections on their first day, dramatically reducing onboarding friction.
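The governance and cost points above can be sketched in Terraform as follows. This is an illustrative fragment rather than the guide's code: it assumes an already configured `azurerm` provider, and the variables, resource names, VM size, and node limits are hypothetical values chosen for the example.

```hcl
# Hypothetical inputs: values for a workspace and subnet defined elsewhere.
variable "workspace_id" {
  type        = string
  description = "Resource ID of an existing Azure ML workspace."
}

variable "subnet_id" {
  type        = string
  description = "Resource ID of the subnet that hosts the private endpoint."
}

variable "resource_group_name" {
  type = string
}

variable "location" {
  type = string
}

# Cost control: a GPU training cluster that scales to zero when idle.
resource "azurerm_machine_learning_compute_cluster" "gpu_training" {
  name                          = "gpu-training" # placeholder name
  location                      = var.location
  machine_learning_workspace_id = var.workspace_id
  vm_priority                   = "LowPriority"      # cheaper, preemptible nodes
  vm_size                       = "Standard_NC6s_v3" # example GPU size

  scale_settings {
    min_node_count                       = 0       # no nodes billed while idle
    max_node_count                       = 4       # example upper bound
    scale_down_nodes_after_idle_duration = "PT15M" # ISO 8601: 15 minutes
  }
}

# Governance: reach the workspace over a private endpoint in the team's VNet.
resource "azurerm_private_endpoint" "workspace" {
  name                = "pe-mlw-example" # placeholder name
  location            = var.location
  resource_group_name = var.resource_group_name
  subnet_id           = var.subnet_id

  private_service_connection {
    name                           = "psc-mlw-example" # placeholder name
    private_connection_resource_id = var.workspace_id
    subresource_names              = ["amlworkspace"]
    is_manual_connection           = false
  }
}
```

Keeping settings like these in a shared, reviewed template is what allows a sandbox requested by a data scientist to inherit the same network and cost guardrails as production. A private endpoint normally also needs matching private DNS configuration, which is omitted here for brevity.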

The gap between this guide and production is minimal for infrastructure setup—it's a proven pattern. The real challenge for retail lies in what you build on top of this platform: curating high-quality data pipelines, developing domain-specific models, and implementing robust monitoring for models in production.

gentic.news Analysis

This article is part of a clear and valuable trend on Medium: the publication of dense, practical technical guides aimed at AI and MLOps practitioners. This follows Medium's recent publication of guides on RAG deployment bottlenecks, a decision framework for LLM customization, and a code-first walkthrough for fine-tuning with Direct Preference Optimization (DPO)—a technology that has appeared in 3 articles this week alone, indicating high practitioner interest. The platform is establishing itself as a key source for implementation knowledge, moving beyond theoretical discussion.

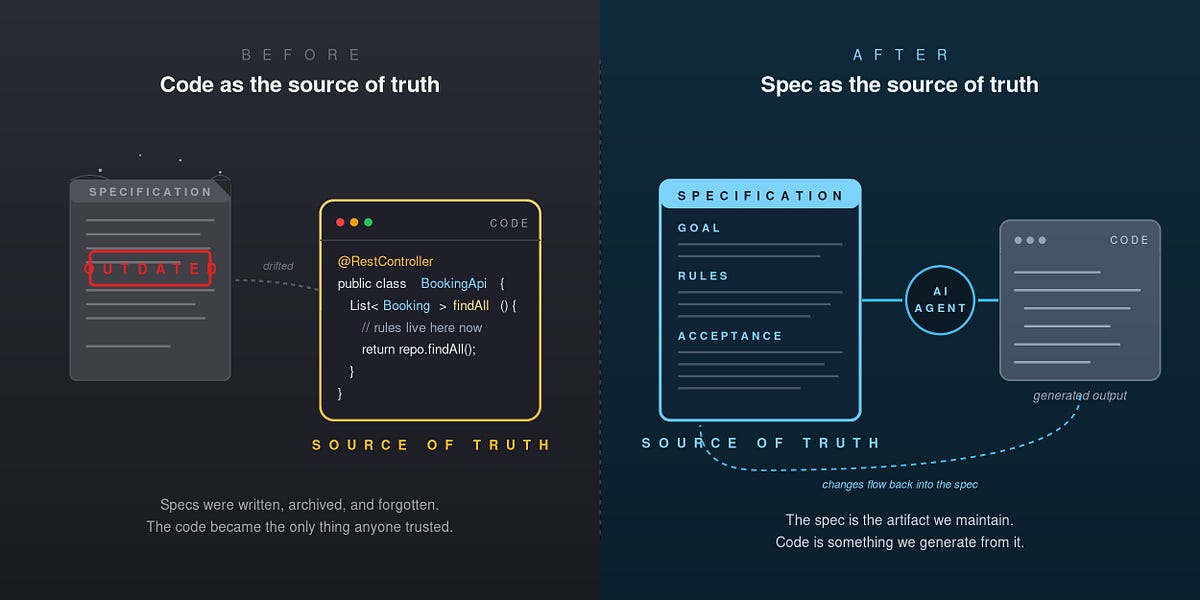

The guide's focus on Terraform aligns with the industry's shift towards treating ML infrastructure as software. We've covered related themes in our analysis of the [AI agent production gap](slug: the-ai-agent-production-gap-why-86), where a lack of robust engineering practices was cited as a major reason pilot projects fail. Automating foundational platform setup with IaC is a direct remedy for one of those engineering shortcomings.

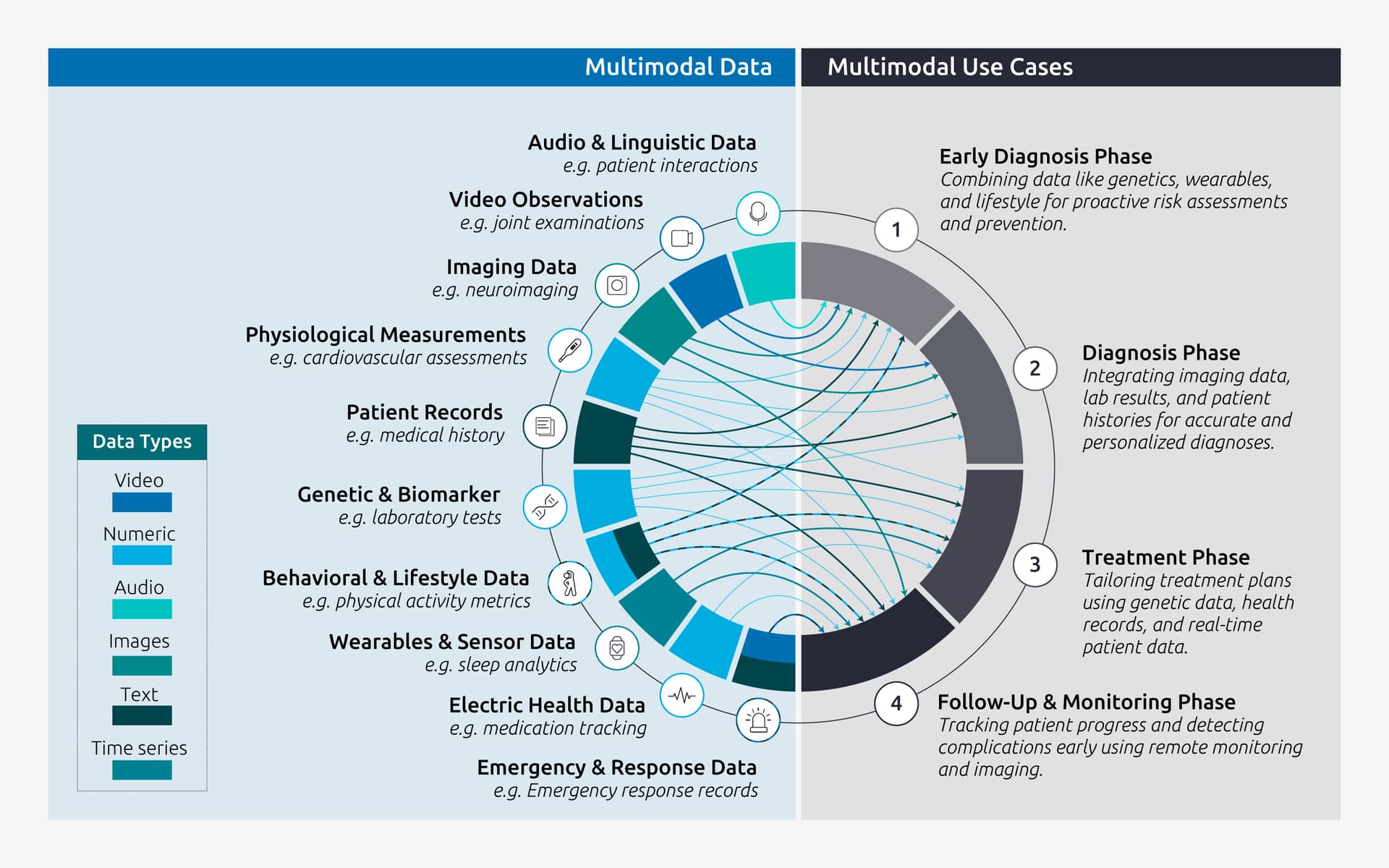

For a luxury brand's AI director, the value isn't in this specific Azure tutorial, but in recognizing that the tools and practices for industrial-grade AI are now well-documented and accessible. The next step is to apply this infrastructure-as-code discipline to the unique data assets and model workflows of the luxury domain, perhaps building upon platforms like Azure ML to deploy the sophisticated [multimodal sequential recommendation systems](slug: robust-dpo-with-stochastic) or fine-tuned LLMs for customer service that we've previously analyzed. The foundation must be solid before the bespoke AI applications can be reliably built.