For years, Retrieval-Augmented Generation (RAG) has been the standard way to give large language models access to external knowledge. By pairing a retrieval system with a generative model, RAG lets models draw on current information beyond their training data. As a recent Towards AI article details, the approach is now changing in a fundamental way. In the next generation, Agentic RAG, the retrieval pipeline itself becomes an agent that decides what, when, and how to retrieve.

What Makes RAG "Agentic"?

Traditional RAG systems follow a predictable, linear flow: receive a query, retrieve relevant documents, generate a response. Agentic RAG breaks this mold by introducing decision-making capabilities at the retrieval stage. Instead of passively fetching documents based on simple similarity metrics, these systems can:

- Dynamically determine whether retrieval is even necessary for a given query

- Choose between different retrieval strategies based on query complexity

- Iteratively refine searches based on initial results

- Decide when to stop retrieving and begin generation
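
The four capabilities above can be sketched as a small control loop. This is a minimal illustration in plain Python, not the source article's implementation: the names `needs_retrieval`, `choose_strategy`, `run_agent`, and `keyword_retriever` are hypothetical, and the decision rules are deliberately naive placeholders where a real system would likely call a language model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical retriever signature: query in, list of passages out.
Retriever = Callable[[str], List[str]]


@dataclass
class AgentTrace:
    """Records each decision so the run can be inspected or evaluated later."""
    retrieved: bool = False
    strategy: str = "none"
    rounds: int = 0
    passages: List[str] = field(default_factory=list)


def needs_retrieval(query: str) -> bool:
    # Placeholder rule (assumption): small talk is answered without retrieval.
    return query.strip().lower() not in {"hi", "hello", "thanks"}


def choose_strategy(query: str) -> str:
    # Placeholder rule (assumption): long queries are treated as complex.
    return "multi_hop" if len(query.split()) > 8 else "single_shot"


def run_agent(query: str, retrievers: Dict[str, Retriever],
              max_rounds: int = 3, enough: int = 2) -> AgentTrace:
    trace = AgentTrace()
    if not needs_retrieval(query):           # decision 1: retrieve at all?
        return trace
    trace.retrieved = True
    trace.strategy = choose_strategy(query)  # decision 2: which strategy?
    retrieve = retrievers[trace.strategy]
    current = query
    # decision 4: stop once enough passages are collected or the budget is spent
    while trace.rounds < max_rounds and len(trace.passages) < enough:
        trace.rounds += 1
        new = [p for p in retrieve(current) if p not in trace.passages]
        if not new:
            break  # the search has stalled, so stop early
        trace.passages.extend(new)
        # decision 3: refine the next search using what was just found
        current = f"{query} {new[-1]}"
    return trace


def keyword_retriever(corpus: List[str]) -> Retriever:
    """Toy retriever: returns documents sharing at least one word with the query."""
    def retrieve(query: str) -> List[str]:
        words = set(query.lower().split())
        return [doc for doc in corpus if words & set(doc.lower().split())]
    return retrieve


if __name__ == "__main__":
    search = keyword_retriever(["Ollama runs local models",
                                "LangGraph orchestrates agent steps"])
    retrievers = {"single_shot": search, "multi_hop": search}
    print(run_agent("hello", retrievers))
    print(run_agent("What does Ollama do", retrievers))
```

Returning a trace rather than only the passages is a deliberate choice here: the recorded decisions are what an evaluation of agentic behavior would later need to inspect.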

As the source article explains, this evolution addresses fundamental limitations of conventional RAG. Basic RAG gained prominence between 2020 and 2023 but is increasingly seen as limited, which has pushed development toward agentic pipelines and more sophisticated agent memory systems.

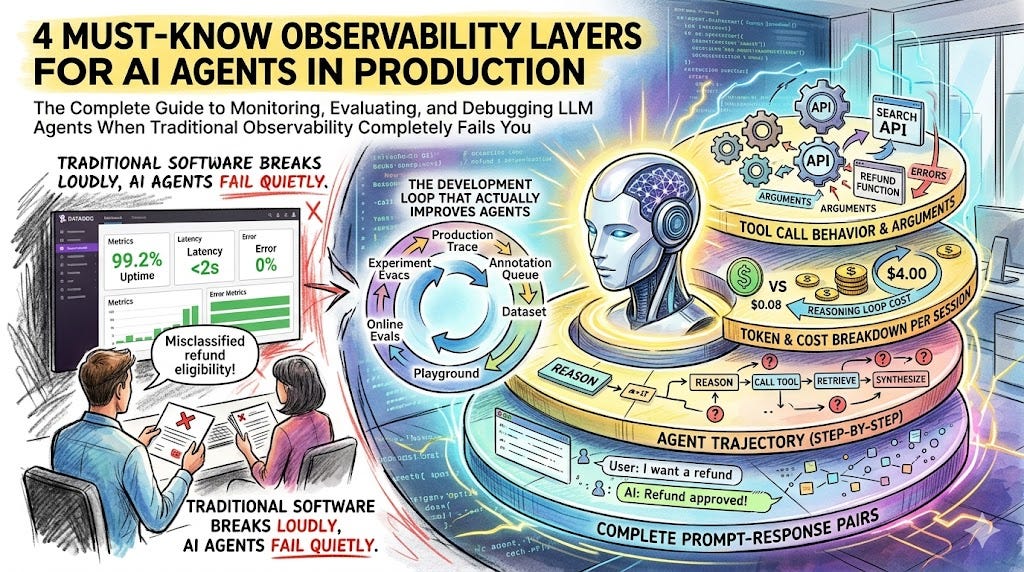

The 5-Layer Evaluation Framework

The core contribution highlighted in the source material is a comprehensive 5-layer evaluation framework specifically designed for Agentic RAG systems. Built from scratch using LangGraph and Ollama, this framework provides developers with structured metrics to assess:

- Agent Decision Quality: How well does the agent determine when to retrieve?

- Retrieval Strategy Selection: Does the agent choose appropriate retrieval methods?

- Information Synthesis: How effectively does the agent combine retrieved knowledge?

- Response Relevance: Does the final output address the original query?

- System Efficiency: What computational costs accompany the agent's decisions?
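
One way to hold the five layers together is a single scorecard per evaluated run. The sketch below is an assumption about how such a record could look, not the framework's actual code: the field names, the [0, 1] normalization, and the weighted average in `overall_score` are all illustrative choices.

```python
from dataclasses import dataclass, asdict
from typing import Dict, Optional


@dataclass(frozen=True)
class LayerScores:
    """One score per layer, each assumed to be normalized to [0, 1]."""
    decision_quality: float
    strategy_selection: float
    information_synthesis: float
    response_relevance: float
    system_efficiency: float

    def __post_init__(self) -> None:
        # Reject out-of-range scores early so aggregates stay meaningful.
        for name, value in asdict(self).items():
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0, 1], got {value}")


def overall_score(scores: LayerScores,
                  weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted mean of the five layers; equal weights unless specified."""
    values = asdict(scores)
    weights = weights or {name: 1.0 for name in values}
    total = sum(weights[name] for name in values)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(values[name] * weights[name] for name in values) / total


def weakest_layer(scores: LayerScores) -> str:
    """Name of the lowest-scoring layer, i.e. where to look first."""
    values = asdict(scores)
    return min(values, key=values.get)
```

Reporting the weakest layer alongside the aggregate keeps a strong final answer from hiding a poor retrieval decision behind it.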

This framework represents a significant advancement because traditional RAG evaluation typically focuses only on retrieval accuracy and response quality, ignoring the decision-making process that defines agentic systems.

Why This Evolution Matters Now

Recent developments in the AI landscape make Agentic RAG particularly timely. Recent analyses note that compute scarcity is making AI increasingly expensive, forcing organizations to prioritize high-value tasks over widespread automation. Agentic RAG addresses this by making retrieval smarter and more efficient, spending computational resources only when a query needs them.

The workplace impacts of AI are also becoming clearer. Some research suggests that AI creates a workplace divide, boosting experienced workers' productivity while potentially reducing the hiring of young talent. Agentic RAG systems, which require sophisticated oversight and integration, may accelerate this trend by demanding more skilled AI operators while automating routine retrieval tasks.

Technical Implementation and Challenges

Building Agentic RAG systems presents unique technical challenges. The source article describes implementation using LangGraph for orchestrating the agent's decision flows and Ollama for running local language models. This combination allows for:

- State management across multiple retrieval steps

- Conditional logic in retrieval pathways

- Local execution reducing API costs and latency

However, evaluating these systems requires new metrics beyond traditional retrieval recall and precision. Developers must now assess decision correctness, strategy appropriateness, and the cost-benefit tradeoffs of agentic behavior.
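
As a rough illustration of what such metrics might look like, the sketch below scores an agent's retrieval decisions against labeled examples. It assumes each logged run carries a human label for whether retrieval was needed and which strategy was expected; the record fields, the metric names, and the use of seconds as the cost unit are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class DecisionRecord:
    retrieved: bool          # what the agent actually did
    retrieval_needed: bool   # labeled ground truth (assumed to exist)
    strategy: str            # strategy the agent picked
    expected_strategy: str   # labeled ground truth (assumed to exist)
    cost_seconds: float      # assumed cost unit for the run


def decision_metrics(records: List[DecisionRecord]) -> Dict[str, float]:
    if not records:
        raise ValueError("at least one record is required")
    n = len(records)
    correct = sum(r.retrieved == r.retrieval_needed for r in records)
    unnecessary = [r for r in records if r.retrieved and not r.retrieval_needed]
    missed = [r for r in records if r.retrieval_needed and not r.retrieved]
    # Strategy choice is only judged when retrieval was both needed and done.
    routed = [r for r in records if r.retrieved and r.retrieval_needed]
    strategy_hits = sum(r.strategy == r.expected_strategy for r in routed)
    return {
        "decision_accuracy": correct / n,
        "unnecessary_retrieval_rate": len(unnecessary) / n,
        "missed_retrieval_rate": len(missed) / n,
        "strategy_accuracy": strategy_hits / len(routed) if routed else 0.0,
        "mean_cost_seconds": sum(r.cost_seconds for r in records) / n,
        # Cost spent on retrievals the labels say were not needed.
        "wasted_cost_seconds": sum(r.cost_seconds for r in unnecessary),
    }
```

Separating unnecessary retrievals from missed ones matters because they fail differently: the first wastes compute, while the second risks an answer with no supporting evidence.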

The Broader Context: RAG's Place in AI Evolution

This development occurs alongside other significant trends in AI infrastructure. Recent studies have validated retrieval metrics as proxies for RAG information coverage, providing better evaluation tools. Meanwhile, AI is beginning to appear in official productivity statistics, potentially resolving the long-standing productivity paradox where technology investments haven't consistently shown up in economic measurements.

Agentic RAG also fits into the wider landscape of retrieval technologies. RAG sits alongside vector databases, which it commonly uses for similarity search, and draws on techniques like contrastive learning and intent engineering. The move toward agentic systems is an attempt to go beyond simple similarity search toward more intelligent knowledge management.

Practical Implications for Developers and Organizations

For organizations implementing RAG systems, the agentic approach offers several advantages:

- Reduced computational costs through smarter retrieval decisions

- Improved response quality through iterative refinement

- Better handling of complex queries requiring multi-step reasoning

However, these benefits come with increased complexity in development, evaluation, and maintenance. The 5-layer framework provides a starting point, but organizations will need to develop their own evaluation criteria based on specific use cases.

Looking Forward: The Future of Intelligent Retrieval

The evolution from static RAG to Agentic RAG is more than a technical improvement: it signals a shift toward more autonomous, decision-capable AI systems. As these systems mature, we can expect further integration with other AI advances, potentially leading to:

- Self-optimizing retrieval systems that learn from past decisions

- Multi-agent RAG architectures with specialized retrieval agents

- Tighter integration with agent memory systems for persistent knowledge

This progression aligns with broader trends in AI toward systems that don't just execute predefined workflows but actively make decisions about how to accomplish their goals.

Source: Evaluating Agentic RAG: When Your Pipeline Starts Making Decisions on Towards AI