New research from Intuit AI Research challenges the conventional wisdom that AI system performance depends primarily on the capabilities of the AI agent itself. According to findings shared by researcher Omar Sar, agent performance "depends on more than just the agent," and factors in its environment, such as data quality, task complexity, and system architecture, may weigh heavily on outcomes.

The Holistic View of AI Performance

For years, the AI research community has focused heavily on improving individual components—particularly the core AI models themselves. This has led to remarkable advances in model architecture, training techniques, and parameter optimization. However, the Intuit research suggests this approach may be incomplete.

"It also depends on the quality...," Sar notes in his post, pointing to the broader ecosystem in which AI agents operate. Beyond data quality, that ecosystem could include interface design, integration with existing systems, user interaction patterns, and the specific business context in which the AI is deployed.

The Environmental Factors That Matter

While the full research paper hasn't been released publicly, the implications are significant for both researchers and practitioners. Several key environmental factors likely influence AI agent performance:

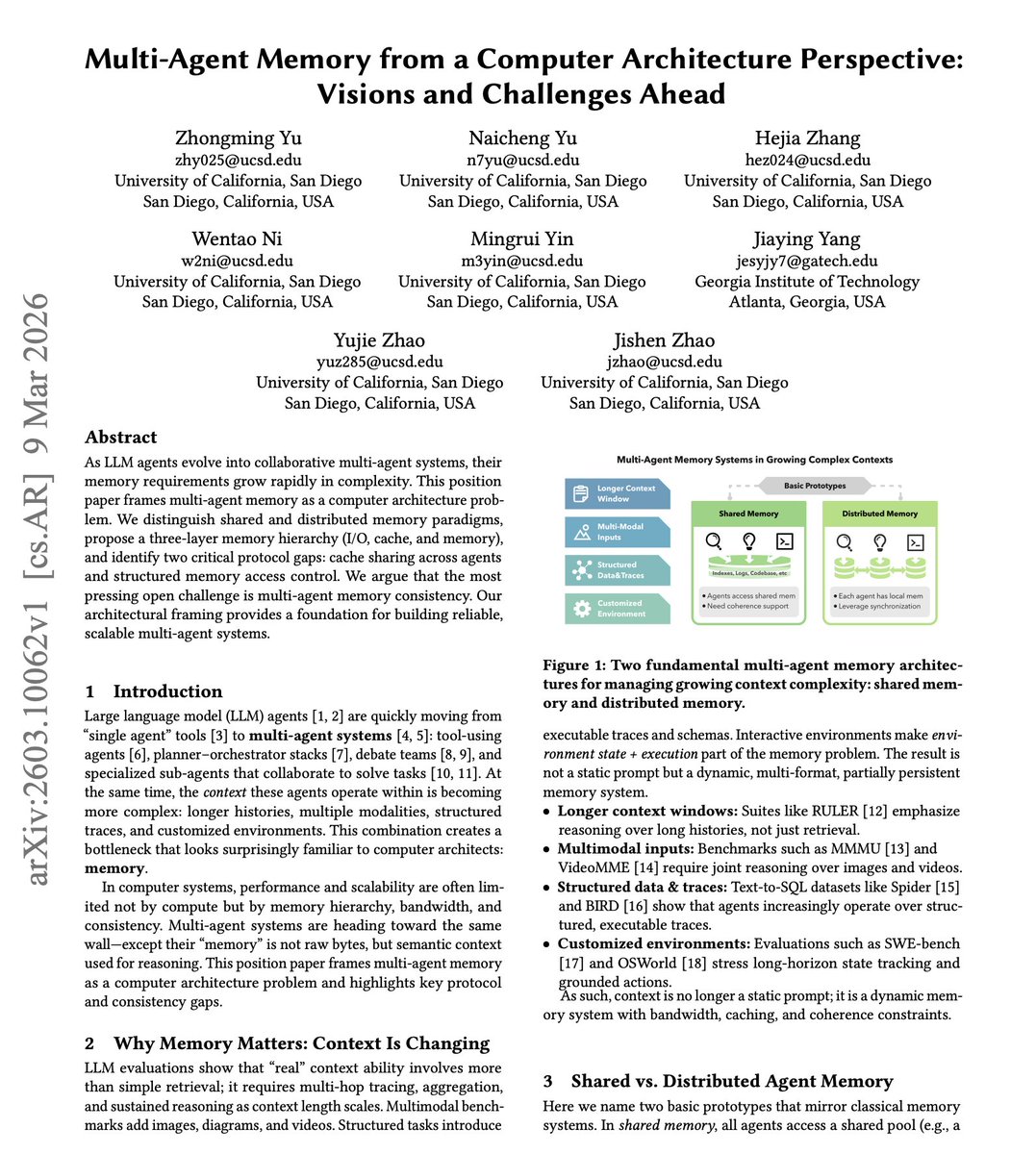

Data Quality and Context: An AI agent trained on pristine datasets may perform poorly when deployed in environments with noisy, incomplete, or biased real-world data. The gap between training data and operational data represents a critical performance determinant.

Task Complexity and Definition: How a task is framed and presented to an AI agent significantly impacts performance. Ambiguous instructions, poorly defined success metrics, or tasks that require significant domain expertise all affect how well an AI system can perform.

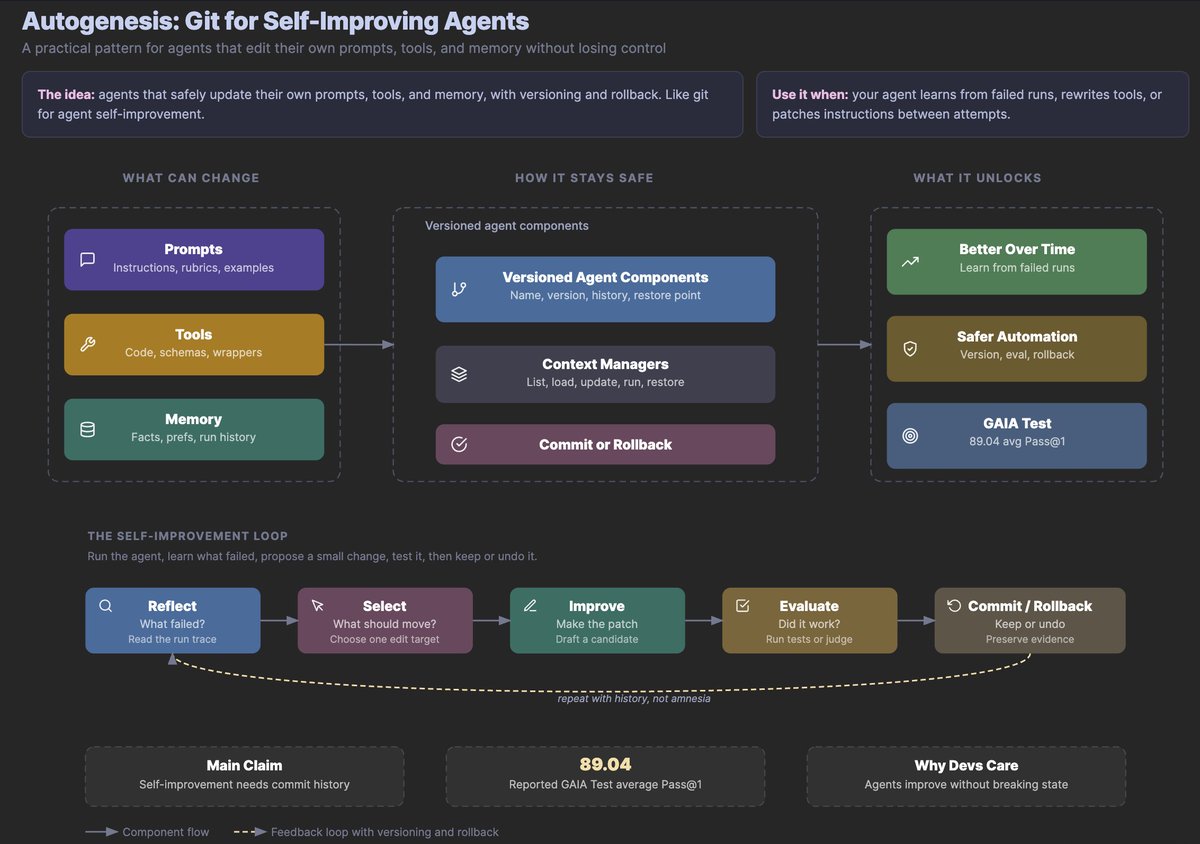

System Architecture and Integration: An AI agent doesn't operate in isolation. Its performance depends on how well it integrates with other systems, the efficiency of data pipelines, and the overall technical infrastructure supporting its operation.

Human-AI Interaction Patterns: The way humans interact with AI systems—their expectations, communication styles, and feedback mechanisms—creates an environmental context that shapes performance outcomes.

Implications for AI Development

This research suggests several important shifts in how we approach AI development:

From Component Optimization to System Design: Rather than focusing exclusively on improving individual AI components, developers need to consider the entire system in which those components operate. This includes data pipelines, user interfaces, and integration points with existing business processes.

Context-Aware Evaluation Metrics: Traditional evaluation metrics that test AI agents in isolation may provide misleading results. New evaluation frameworks need to account for environmental factors and real-world deployment conditions.
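To make the idea concrete, here is a minimal Python sketch of evaluation stratified by environment condition instead of a single aggregate score. Everything in it is hypothetical and illustrative, not Intuit's method: the `EvalCase` type, the `condition` labels, the exact-match scoring, and the lookup-table `toy_agent` that stands in for a real agent.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    expected: str
    condition: str  # hypothetical environment label, e.g. "clean" or "noisy"


def evaluate_by_condition(agent: Callable[[str], str], cases: list[EvalCase]) -> dict[str, float]:
    """Return exact-match accuracy separately for each environment condition."""
    scores: dict[str, list[float]] = defaultdict(list)
    for case in cases:
        output = agent(case.prompt)
        scores[case.condition].append(1.0 if output.strip() == case.expected else 0.0)
    return {condition: mean(values) for condition, values in scores.items()}


# Hypothetical toy "agent": a lookup table standing in for a real model.
CAPITALS = {"France": "Paris", "Japan": "Tokyo", "Italy": "Rome"}


def toy_agent(prompt: str) -> str:
    return CAPITALS.get(prompt.strip(), "unknown")


cases = [
    EvalCase("France", "Paris", "clean"),
    EvalCase("Japan", "Tokyo", "clean"),
    EvalCase("Frnace", "Paris", "noisy"),  # typo simulates noisy operational input
    EvalCase(" Italy ", "Rome", "noisy"),  # stray whitespace is stripped, so this passes
]

per_condition = evaluate_by_condition(toy_agent, cases)
print(per_condition)  # {'clean': 1.0, 'noisy': 0.5}
```

In this toy run the overall accuracy is 0.75, which hides the fact that every failure came from the noisy condition; reporting per-condition scores surfaces that gap.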

Holistic Performance Monitoring: Organizations deploying AI systems should monitor not just the AI agent's performance but the entire ecosystem in which it operates, including data quality trends, user satisfaction, and integration effectiveness.
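As a minimal Python sketch of one piece of such monitoring, assuming incoming records arrive as dictionaries, the class below tracks the share of recent records that are missing required fields and raises a flag when that share crosses a threshold. `MissingFieldMonitor`, the field names, the window size, and the threshold are hypothetical choices for illustration only.

```python
from collections import deque


class MissingFieldMonitor:
    """Track the share of recent records with missing required fields (hypothetical helper)."""

    def __init__(self, required: list[str], window: int = 100, threshold: float = 0.1):
        self.required = required
        self.threshold = threshold
        # Fixed-size window: once full, the oldest flag drops out automatically.
        self._incomplete_flags: deque[bool] = deque(maxlen=window)

    def observe(self, record: dict) -> bool:
        """Record one incoming item and return whether the alert is currently raised."""
        incomplete = any(record.get(field) in (None, "") for field in self.required)
        self._incomplete_flags.append(incomplete)
        return self.alert()

    def missing_rate(self) -> float:
        if not self._incomplete_flags:
            return 0.0
        return sum(self._incomplete_flags) / len(self._incomplete_flags)

    def alert(self) -> bool:
        return self.missing_rate() > self.threshold


monitor = MissingFieldMonitor(["amount", "date"], window=4, threshold=0.25)
records = [
    {"amount": 10, "date": "2024-01-01"},
    {"amount": None, "date": "2024-01-02"},  # missing amount
    {"amount": 5, "date": ""},               # empty date
    {"amount": 7, "date": "2024-01-04"},
]
alerts = [monitor.observe(r) for r in records]
print(alerts, monitor.missing_rate())  # [False, True, True, True] 0.5
```

The bounded `deque` keeps memory constant and lets old records age out, so the rate reflects recent data rather than the entire history.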

Practical Applications and Industry Impact

The findings have particular relevance for enterprise AI applications where systems must operate within complex business environments. Companies like Intuit, which develops financial software, understand that AI tools for tax preparation or accounting don't exist in a vacuum—they must integrate with existing workflows, handle sensitive financial data, and provide reliable results in high-stakes situations.

If the findings hold up, they would support what many practitioners have observed anecdotally: the same AI model can perform dramatically differently depending on how it is implemented, what data it receives, and how users interact with it.

The Future of AI Research

This direction of research represents a maturation of the field. As AI transitions from academic curiosity to practical tool, understanding the environmental factors that influence performance becomes increasingly important. Future research will likely focus on:

- Quantifying the impact of various environmental factors

- Developing frameworks for assessing system readiness for AI deployment

- Creating adaptive AI systems that can recognize and respond to environmental conditions

- Establishing best practices for AI system design that accounts for contextual factors

Conclusion

The Intuit AI Research findings remind us that AI doesn't exist in a vacuum. As we continue to develop increasingly sophisticated AI agents, we must pay equal attention to the environments in which they operate. The most advanced AI model can underperform if deployed in suboptimal conditions, while more modest systems can excel when properly integrated into supportive environments.

This holistic approach to AI system design represents the next frontier in artificial intelligence—one that moves beyond model-centric thinking to consider the complete ecosystem in which AI operates. As the field matures, this perspective will likely become standard practice for both researchers and practitioners seeking to build effective, reliable AI systems.

Source: Research shared by Omar Sar (@omarsar0) referencing work from Intuit AI Research.