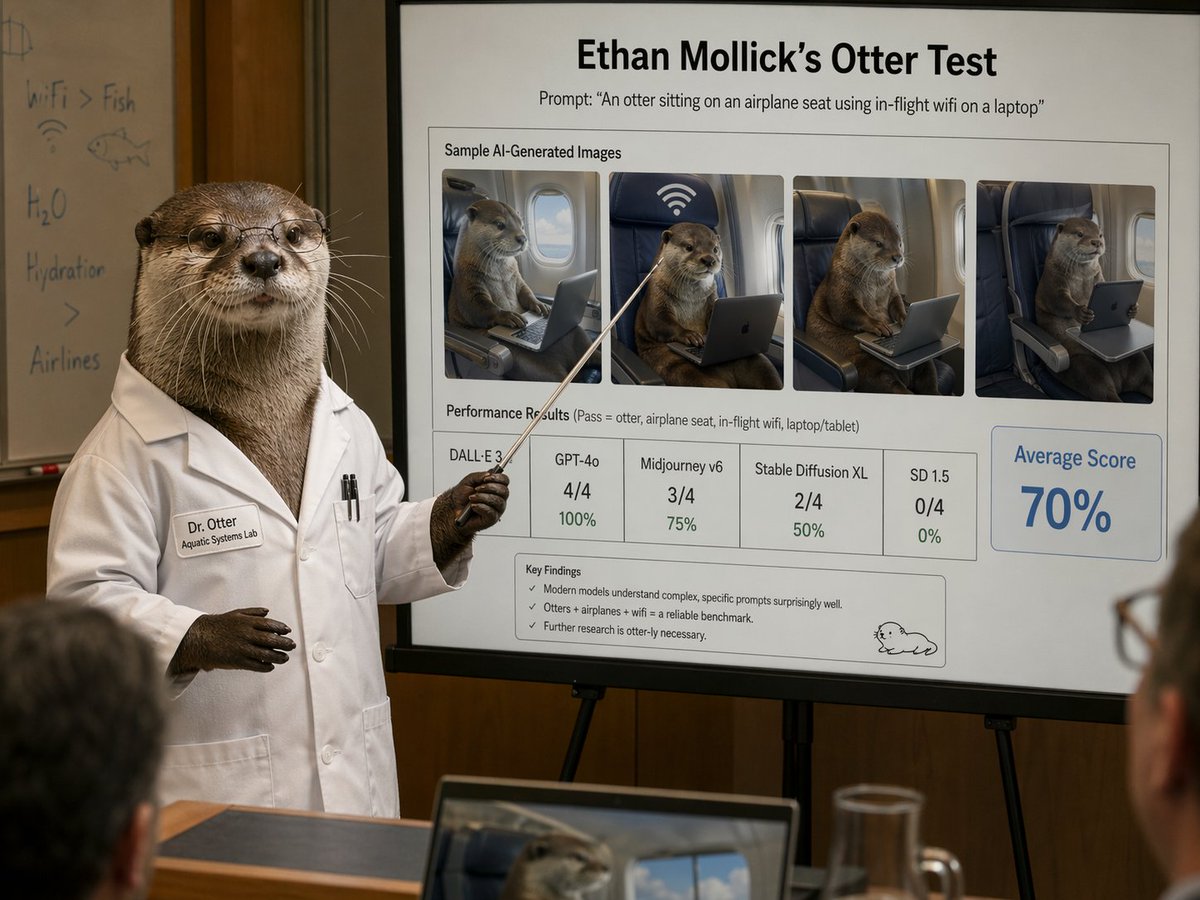

Researcher Ethan Mollick has highlighted a capability of OpenAI's latest flagship model: GPT-5.4 Pro in ChatGPT, particularly when using its "Thinking" harness, is proficient at reading and interpreting scientific papers. The key advance is that it goes beyond text analysis, identifying which figures in a paper matter most and visually inspecting those figures to extract information.

What Happened

Ethan Mollick, a professor at Wharton who extensively tests and writes about AI capabilities, noted on social media that GPT-5.4 Pro in ChatGPT, especially when using its "Thinking" mode or harness, is "quite good at understanding how to read scientific papers." He specified that this capability is not limited to parsing the text but extends to "figuring out which figures are key and inspecting those visually."

The observation points to a maturation of multimodal reasoning in large language models. Previous models could accept image inputs, but Mollick's description suggests a more integrated, agentic process: the model first decides which visual elements are central to a document's argument, then analyzes those elements to inform its overall understanding.

Context: The "Thinking" Harness and GPT-5.4 Pro

The "Thinking" harness refers to a set of techniques or a mode within ChatGPT that allows the model to engage in longer, more chain-of-thought style reasoning before delivering a final answer. It is designed for complex tasks that require planning, step-by-step analysis, or deep research.

GPT-5.4 Pro is the current high-tier offering from OpenAI, released in late 2025. It builds upon the GPT-5 architecture with improvements in reasoning, accuracy, and multimodal processing. A core focus of the GPT-5 series has been enhancing the model's ability to handle long-context, multi-format documents reliably.

What This Means in Practice

For researchers, students, and analysts, this capability could significantly streamline literature reviews. Instead of simply summarizing text, the AI could:

- Identify the central experimental result in a biology paper by pinpointing the key graph.

- Extract quantitative data from a plotted chart in an economics preprint.

- Compare methodological setups between papers based on their diagrams.

This moves AI from being a text summarizer to an active research assistant capable of navigating the full information payload of modern academic documents, where data is often encapsulated in visuals.
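As a rough illustration of what "figuring out which figures are key" can mean, the sketch below ranks figures by how often the paper's own text cites them. This is a deliberately crude proxy and an assumption made for this example, not a description of how GPT-5.4 Pro selects figures. The function name `rank_figures_by_mentions` and the sample text are hypothetical.

```python
import re
from collections import Counter

# Matches "Figure 2", "Figures 2", "Fig. 2", "Figs. 2" and "Fig 2" (case-insensitive).
FIGURE_REF = re.compile(r"\bFig(?:ures?|s?\.)?\s*(\d+)", re.IGNORECASE)


def rank_figures_by_mentions(text: str) -> list[tuple[int, int]]:
    """Return (figure_number, mention_count) pairs, most-cited figure first."""
    counts = Counter(int(number) for number in FIGURE_REF.findall(text))
    return counts.most_common()


# Hypothetical excerpt from a paper's body text.
sample = (
    "Figure 1 shows the experimental setup. The main effect appears in "
    "Figure 2. As Fig. 2 and Fig. 3 indicate, the effect persists over time."
)

for figure_number, mentions in rank_figures_by_mentions(sample):
    print(f"Figure {figure_number}: {mentions} mention(s)")
```

A citation count says nothing about what a figure actually shows, which is why visual inspection of the selected figures is the more interesting half of the capability described above.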

gentic.news Analysis

This observed capability is a direct continuation of the multimodal arms race among frontier AI labs. OpenAI's approach with the "Thinking" harness appears to be creating a more deliberate, recursive analysis loop for the model, which is particularly effective for dense, information-rich formats like scientific literature. This isn't just about vision-language understanding; it's about strategic information prioritization—a skill essential for true comprehension.

The development aligns with a clear trend we've been tracking: the evolution of AI from a conversational tool to a reasoning and research engine. In November 2025, we covered DeepSeek's R1 model (first released in January 2025), which scored highly on the SWE-Bench coding benchmark, emphasizing its planning and reasoning abilities. Similarly, Anthropic's Claude 3.5 Sonnet, released in June 2024, set a high bar for document understanding. OpenAI's GPT-5.4 Pro with "Thinking" seems to be a competitive response, focusing the model's enhanced reasoning power on the specific, valuable problem of academic paper analysis.

For practitioners, the key takeaway is the growing importance of evaluation on compound, real-world tasks rather than narrow benchmarks. A model's score on a standard VQA (Visual Question Answering) dataset is less indicative of its utility than its observed performance on a holistic task like "understand this paper." As these models become more agentic, testing their workflow and strategic decision-making—like choosing which figure to analyze—becomes as important as testing their final output accuracy.
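One way to test the strategic step separately from the final answer is to score the model's figure choices against a human-labelled set of key figures. The sketch below assumes such labels exist and that the model's choices can be logged as figure identifiers. The function name `figure_selection_scores` and the sample identifiers are hypothetical.

```python
def figure_selection_scores(chosen: set[str], key: set[str]) -> dict[str, float]:
    """Precision, recall, and F1 of the figures a model chose to inspect,
    measured against figures a human annotator marked as key."""
    hits = len(chosen & key)
    precision = hits / len(chosen) if chosen else 0.0
    recall = hits / len(key) if key else 0.0
    # F1 is the harmonic mean; it is defined as 0 when there are no hits.
    f1 = 2 * precision * recall / (precision + recall) if hits else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical log: the model inspected three figures, the annotator marked two as key.
model_choice = {"fig2", "fig4", "fig5"}
annotator_key = {"fig2", "fig3"}

scores = figure_selection_scores(model_choice, annotator_key)
print(scores)
```

Low precision here means the model spent effort on peripheral figures, while low recall means it skipped a figure the paper's argument depends on. Neither failure is visible from a final summary alone.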

Frequently Asked Questions

What is the ChatGPT "Thinking" harness?

The "Thinking" harness is a mode or methodology within ChatGPT that encourages the model to engage in extended, step-by-step reasoning. It often manifests as the model "thinking out loud" in a dedicated text space before providing a final, concise answer. It is designed for complex problems in mathematics, coding, strategic analysis, and, as highlighted here, deep document comprehension.

How is this different from previous AI that could read PDFs?

Earlier AI document readers primarily performed optical character recognition (OCR) and then processed the extracted text. Current multimodal models like GPT-5.4 Pro ingest the entire document as a unified input—text and images together. The new capability involves active visual reasoning: the model assesses the relative importance of different figures and performs specific analysis on them, integrating that visual insight with the textual context.

Can GPT-5.4 Pro extract raw data from graphs in papers?

Based on the description of "inspecting those visually," it likely can interpret graphs, charts, and diagrams to describe trends, compare values, and understand methodological setups. However, precise extraction of raw numerical data points from a pixel-based image (digitization) remains a challenging sub-task, and the accuracy would depend on the clarity and complexity of the figure.
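To see why digitization is sensitive to figure clarity, consider the basic arithmetic behind it: a point's pixel position is converted to a data value by linear interpolation between two labelled axis ticks. The sketch below assumes linear (not logarithmic) axes. The class `AxisCalibration`, the function `pixel_to_data`, and all pixel values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AxisCalibration:
    """Two reference ticks on one axis: a pixel position and the value it labels."""
    pixel_a: float
    value_a: float
    pixel_b: float
    value_b: float

    def units_per_pixel(self) -> float:
        if self.pixel_a == self.pixel_b:
            raise ValueError("calibration ticks must sit at different pixel positions")
        return (self.value_b - self.value_a) / (self.pixel_b - self.pixel_a)

    def to_value(self, pixel: float) -> float:
        # Linear interpolation (or extrapolation) from the first reference tick.
        return self.value_a + (pixel - self.pixel_a) * self.units_per_pixel()


def pixel_to_data(px: float, py: float,
                  x_axis: AxisCalibration, y_axis: AxisCalibration) -> tuple[float, float]:
    """Convert an image pixel coordinate into plot data coordinates."""
    return x_axis.to_value(px), y_axis.to_value(py)


# Hypothetical plot: x ticks 0 and 10 at pixel columns 100 and 500;
# y ticks 0 and 70 at pixel rows 400 and 50 (image rows grow downward).
x_axis = AxisCalibration(pixel_a=100, value_a=0.0, pixel_b=500, value_b=10.0)
y_axis = AxisCalibration(pixel_a=400, value_a=0.0, pixel_b=50, value_b=70.0)

x, y = pixel_to_data(300, 225, x_axis, y_axis)
print(f"x = {x:.2f}, y = {y:.2f}")
print(f"one pixel of error on y shifts the value by {abs(y_axis.units_per_pixel()):.2f} units")
```

In this example each pixel row corresponds to 0.2 units on the y axis, so a marker located a few pixels off already changes the recovered value noticeably. Low-resolution figures, thick markers, and overlapping series all make that localization step harder.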

Is this feature available to all ChatGPT users?

The GPT-5.4 Pro model is typically available to ChatGPT Plus, Team, and Enterprise subscribers. The effectiveness of the "Thinking" behavior may vary based on how a user prompts the model and the specific task. It represents an emergent capability of the underlying model that is most reliably activated for complex, multi-step queries.