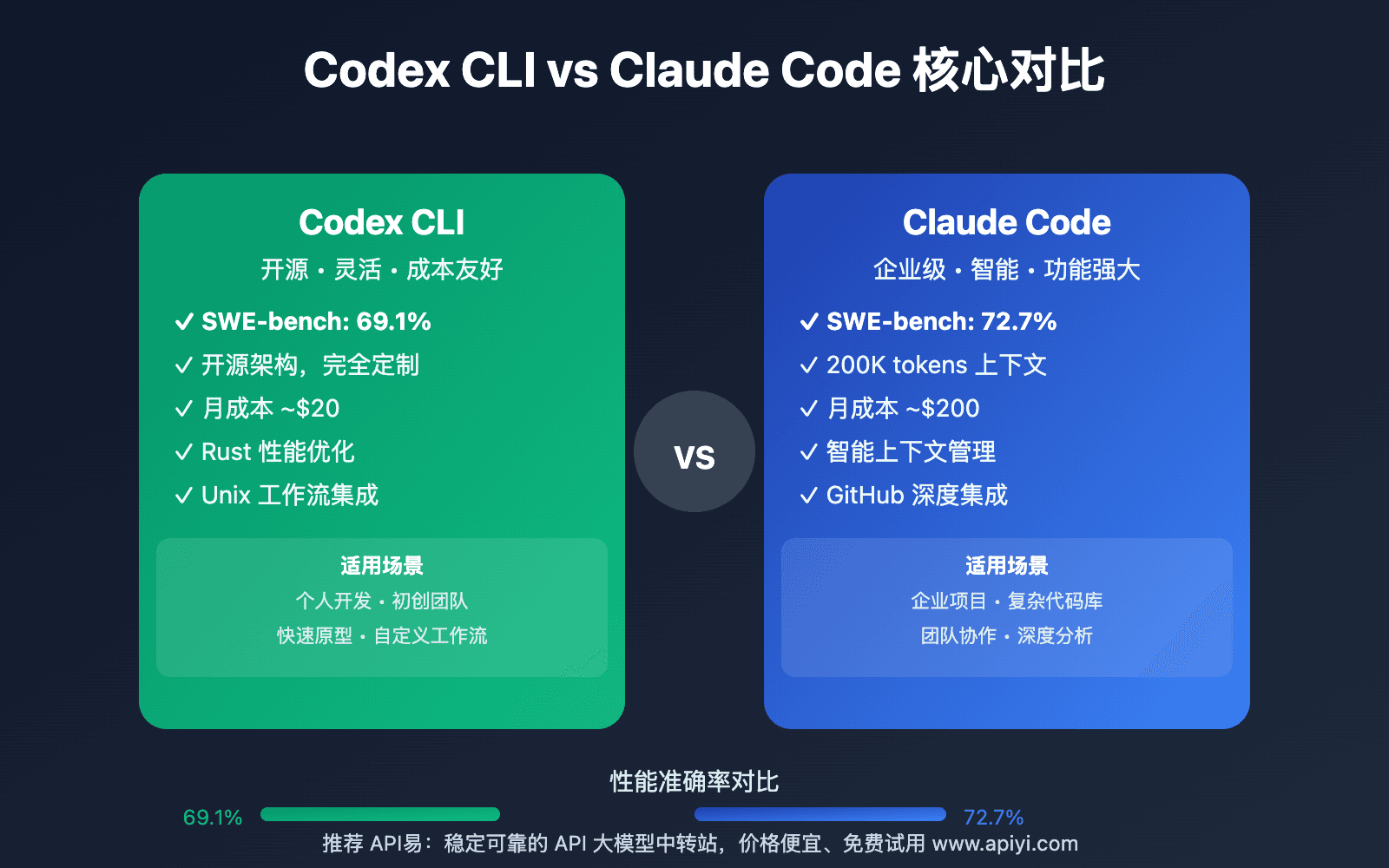

A developer on Hacker News recently mentioned that their team is seeing "slightly better" results with Codex than with Claude Code. This isn't the first such comparison, and it won't be the last. But here's what matters: one team's anecdote is not a reason to switch tools.

The Reality of AI Coding Comparisons

Every development team has different needs. What works "slightly better" for one team's React codebase might perform worse for another team's Python data pipeline. The Hacker News commenter acknowledges this: "If you have a good workflow with CC I wouldn't switch."

This aligns with what we've seen in our coverage of Claude Code's evolution. The tool has been mentioned in 540 articles in our database, with 68 articles just this week—showing intense developer interest and rapid iteration.

How to Actually Compare Coding Assistants

Instead of taking someone else's word, create your own benchmarks. Here's how:

1. Define Your Success Metrics

What matters for YOUR workflow? Common metrics include:

- Code acceptance rate: How often do you accept the AI's suggestions?

- Time to first correct solution: How quickly does it produce working code?

- Multi-file understanding: How well does it navigate your codebase structure?

- Debugging accuracy: How effectively does it identify and fix bugs?
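One way to keep these metrics honest is to compute them from logged trials rather than from memory. The sketch below is a minimal illustration, not a standard: the `Trial` record and its field names are assumptions made up for this example, and it covers only the first two metrics, since multi-file understanding and debugging accuracy usually need a human judgment call.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Trial:
    """One attempt at one task with one tool (field names are illustrative)."""
    tool: str
    suggestions_shown: int               # suggestions the tool offered
    suggestions_accepted: int            # suggestions you kept
    seconds_to_correct: Optional[float]  # None if no working solution was reached


def acceptance_rate(trials: list[Trial]) -> float:
    """Accepted suggestions divided by shown suggestions, across all trials."""
    shown = sum(t.suggestions_shown for t in trials)
    accepted = sum(t.suggestions_accepted for t in trials)
    return accepted / shown if shown else 0.0


def median_time_to_correct(trials: list[Trial]) -> Optional[float]:
    """Median seconds to a working solution, skipping trials that never got there."""
    times = [t.seconds_to_correct for t in trials if t.seconds_to_correct is not None]
    return median(times) if times else None


# Placeholder numbers, only to show the call shape.
trials = [
    Trial("tool_a", suggestions_shown=10, suggestions_accepted=7, seconds_to_correct=120.0),
    Trial("tool_a", suggestions_shown=10, suggestions_accepted=5, seconds_to_correct=None),
    Trial("tool_a", suggestions_shown=5, suggestions_accepted=3, seconds_to_correct=200.0),
]
print(acceptance_rate(trials))
print(median_time_to_correct(trials))
```

Keeping failed attempts in the log (with `seconds_to_correct` set to `None`) matters: dropping them would make a tool that often fails look faster than it is.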

2. Create a Standardized Test Suite

Build a small but representative sample of tasks from your actual work:

```
# Example test structure
claude-code-tests/
├── refactor/
│   ├── legacy_function.js    # Needs modernization
│   └── expected_output.js    # Target implementation
├── debug/
│   ├── broken_api_endpoint.py
│   └── error_log.txt
└── implement/
    ├── spec.md               # Feature requirements
    └── existing_codebase/    # Context files
```
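If the suite follows a layout like the one above, a few lines of Python can list what is in it, so both tools are run against exactly the same set of tasks. This is a rough sketch that assumes one subdirectory per task category; `discover_tasks` is a hypothetical helper, not part of any tool.

```python
from pathlib import Path


def discover_tasks(root: Path) -> dict[str, list[str]]:
    """Map each category directory (refactor, debug, ...) to its sorted entry names."""
    tasks: dict[str, list[str]] = {}
    # Only directories count as categories; loose files at the root are ignored.
    for category in sorted(p for p in root.iterdir() if p.is_dir()):
        tasks[category.name] = sorted(entry.name for entry in category.iterdir())
    return tasks


if __name__ == "__main__":
    root = Path("claude-code-tests")  # directory name taken from the example layout
    if root.is_dir():
        for category, entries in discover_tasks(root).items():
            print(f"{category}: {', '.join(entries)}")
```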

3. Test Both Tools Side-by-Side

For each task, use the same prompt format and context. Track:

- Number of iterations needed

- Total time spent

- Whether the final solution works

- Any manual corrections required
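The four tracked values above can be compared task by task instead of averaged, which avoids one unusually hard task dominating the result. The following is a sketch under stated assumptions: the `Result` fields mirror the list above, the ranking order inside `better` (working solution first, then fewer manual corrections, then fewer iterations, then less time) is a choice you should adjust to your own priorities, and the sample values are placeholders rather than measurements.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    """Outcome of one tool on one task."""
    task: str
    tool: str
    iterations: int
    minutes: float
    works: bool
    manual_fixes: int


def better(a: Result, b: Result) -> str:
    """Return the name of the better tool on one task, or "tie".

    Assumed ordering: a working solution wins first, then fewer manual
    fixes, then fewer iterations, then less time.
    """
    key_a = (not a.works, a.manual_fixes, a.iterations, a.minutes)
    key_b = (not b.works, b.manual_fixes, b.iterations, b.minutes)
    if key_a == key_b:
        return "tie"
    return a.tool if key_a < key_b else b.tool


def tally(results: list[Result], tool_a: str, tool_b: str) -> Counter:
    """Count per-task wins, using only tasks that both tools attempted."""
    by_key = {(r.task, r.tool): r for r in results}
    wins: Counter = Counter()
    for task in sorted({r.task for r in results}):
        a, b = by_key.get((task, tool_a)), by_key.get((task, tool_b))
        if a is not None and b is not None:
            wins[better(a, b)] += 1
    return wins


# Placeholder values for illustration only.
results = [
    Result("refactor", "claude-code", iterations=2, minutes=15.0, works=True, manual_fixes=0),
    Result("refactor", "codex", iterations=3, minutes=12.0, works=True, manual_fixes=0),
    Result("debug", "claude-code", iterations=4, minutes=30.0, works=False, manual_fixes=2),
    Result("debug", "codex", iterations=5, minutes=40.0, works=True, manual_fixes=1),
]
wins = tally(results, "claude-code", "codex")
print(dict(wins))
```

With only a handful of tasks, a tally like this is a sanity check rather than a statistically meaningful result, so treat close scores as a tie.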

4. Consider Your Existing Integration

Claude Code's strength isn't just the model—it's the ecosystem. Before switching, evaluate:

- MCP servers: You've likely customized your setup with specific MCP servers (like the new Gemini Flash integration released April 12)

- Workflow automation: Your existing CLAUDE.md files and agent configurations

- Team knowledge: The collective understanding of how to prompt Claude Code effectively

When a Switch Might Actually Make Sense

Consider testing alternatives if:

- Your primary language isn't well supported by Claude Code

- You're starting a new project with no existing workflow investment

- You've identified specific, repeatable failure patterns that another tool handles better

But remember: switching has real costs. You'll need to:

- Rebuild your prompt library

- Learn new tooling patterns

- Potentially lose access to Claude-specific features (like the AI Performance Guardrails system released April 11)

The Claude Code Advantage You Might Overlook

Recent developments show Claude Code isn't standing still:

- April 13: The community-driven best practices repo hit 19.7K stars with 84 production tips

- April 12: New MCP server integration allows using Gemini Flash for file reading

- April 11: Onboarding workflow that reduces codebase understanding time from weeks to 4 hours

These ecosystem advantages compound over time. A tool that's "slightly better" today might fall behind as the ecosystem evolves.

Try This Benchmark Today

Pick ONE task from your backlog this week. Run it through both Claude Code and your alternative (Codex, Cursor, etc.). Use this prompt template for consistency:

```
## Task: [Brief description]

## Context:
[Relevant code snippets or file paths]

## Requirements:
1. [Specific requirement 1]
2. [Specific requirement 2]

## Constraints:
- [Constraint 1]
- [Constraint 2]
```
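To make sure both tools really receive the same text, you can generate the prompt from the template instead of retyping it. Here is a minimal sketch; `render_prompt` is a hypothetical helper, and the task details in the usage lines are placeholders.

```python
def render_prompt(task: str, context: str, requirements: list[str], constraints: list[str]) -> str:
    """Fill the template's four sections so every tool gets identical text."""
    lines = [f"## Task: {task}", "", "## Context:", context, "", "## Requirements:"]
    # Requirements are numbered from 1, constraints are dash bullets, as in the template.
    lines += [f"{i}. {req}" for i, req in enumerate(requirements, start=1)]
    lines += ["", "## Constraints:"]
    lines += [f"- {c}" for c in constraints]
    return "\n".join(lines)


prompt = render_prompt(
    task="Fix the failing endpoint",
    context="debug/broken_api_endpoint.py, debug/error_log.txt",
    requirements=["Endpoint returns a valid response", "Existing tests still pass"],
    constraints=["No new dependencies"],
)
print(prompt)
```

Save the rendered prompt alongside your results so a later rerun uses the exact same wording.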

Track your results. Share them with your team. Make data-driven decisions, not hype-driven ones.

gentic.news Analysis

This discussion reflects the maturing AI coding assistant market where developers are moving beyond initial excitement to practical evaluation. The trend data shows Claude Code appearing in 68 articles this week—indicating both intense scrutiny and rapid evolution.

The comparison with Codex 5.3 (mentioned in 36 prior articles) is particularly relevant given OpenAI's established presence in code generation, which dates back to the original Codex model that powered early versions of GitHub Copilot. However, Claude Code's differentiation through its agentic capabilities and MCP ecosystem (referenced in 36 sources about Model Context Protocol integration) creates a different value proposition.

This follows recent developments where Claude Code has been expanding its capabilities through both Anthropic updates (like the April 11 onboarding workflow) and community contributions (the April 13 best practices repo). The tool's integration into professional workflows at companies like Microsoft (mentioned in 5 sources) suggests it's gaining traction in enterprise environments where workflow consistency matters more than marginal performance differences.

As we noted in our April 13 coverage of the Claude Code best practices repo, the community is actively developing and sharing optimization techniques—meaning today's "slightly better" comparison might reverse as more developers master Claude Code's specific capabilities.