Wharton professor and AI researcher Ethan Mollick has offered a nuanced, early take on Google's newly announced Gemma 4 model, praising its on-device performance while casting significant doubt on its suitability for a core frontier of AI development: agentic workflows.

What Happened

In a post on X, Mollick stated he is "impressed by Gemma 4, there’s a lot of power for an on-device model at fast speeds." This aligns with Google's positioning of its Gemma family as a series of open, lightweight models designed to run efficiently on consumer hardware like laptops and phones.

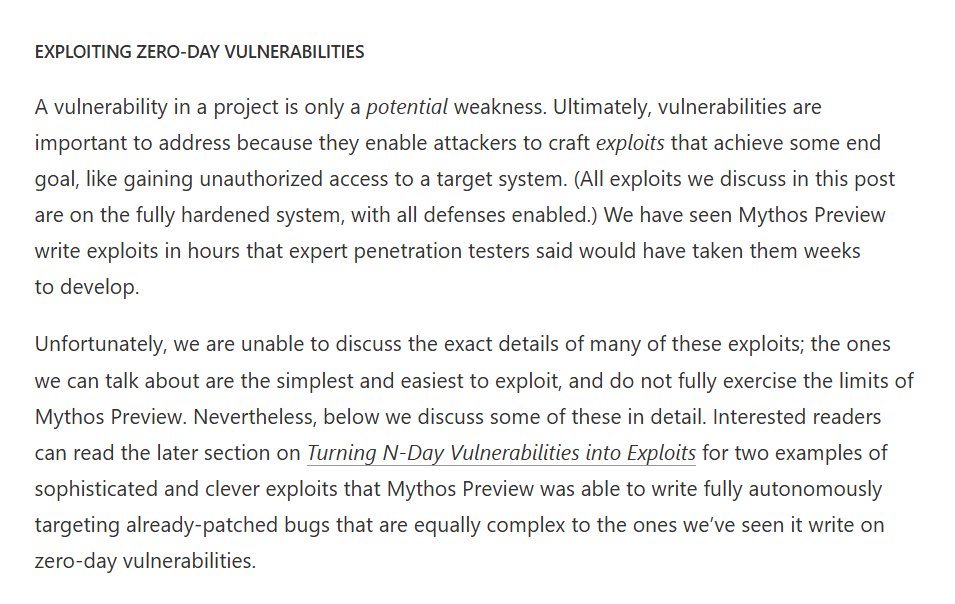

However, Mollick immediately followed with a major caveat: "But I am not convinced you can get real agentic workflows out of a small model on device. So much depends on model judgement, self-correction, and accuracy. Small models are too weak there."

This critique strikes at the heart of a key industry debate. "Agentic workflows" refer to AI systems that can autonomously perform multi-step tasks—like planning a trip, writing and executing code, or conducting research—by breaking them down, using tools (web search, calculators, APIs), and iterating based on results. This requires robust reasoning, planning, and the ability to self-correct errors, capabilities traditionally associated with larger, more powerful models running in the cloud.
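To make the loop described above concrete, here is a minimal sketch of an agentic cycle in Python. It is illustrative only: `scripted_model`, `add_tool`, `TOOLS`, and `run_agent` are hypothetical names invented for this example, and the "model" is a hard-coded stub rather than Gemma 4 or any real model. The point is the shape of the loop: the model picks an action, a tool runs, and the result is fed back for the next decision.

```python
from typing import Callable, Dict, List, Optional, Tuple

def add_tool(arg: str) -> str:
    """Hypothetical tool: adds two comma-separated numbers."""
    a, b = arg.split(",")
    return str(float(a) + float(b))

# Hypothetical tool registry mapping tool names to callables.
TOOLS: Dict[str, Callable[[str], str]] = {"add": add_tool}

def scripted_model(history: List[str]) -> Tuple[str, str]:
    """Stand-in for a language model; returns (action, argument).

    A real model would decide from the history. This stub calls the
    "add" tool once, then finishes with the last observation.
    """
    observations = [h for h in history if h.startswith("observation:")]
    if not observations:
        return ("add", "2,3")
    return ("finish", observations[-1].split(":", 1)[1].strip())

def run_agent(
    task: str,
    model: Callable[[List[str]], Tuple[str, str]],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> Optional[str]:
    """Run the plan/act/observe loop until the model finishes or steps run out."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        action, arg = model(history)
        if action == "finish":
            return arg
        tool = tools.get(action)
        if tool is None:
            # Feed the mistake back so the model has a chance to self-correct.
            history.append(f"observation: error: unknown tool {action}")
            continue
        try:
            result = tool(arg)
        except Exception as exc:
            result = f"error: {exc}"
        history.append(f"observation: {result}")
    return None  # Step budget exhausted without a final answer.

answer = run_agent("add 2 and 3", scripted_model, TOOLS)
```

Every pass through the loop depends on the model choosing a sensible action and reading the observation correctly, which is where Mollick's concern about judgement and self-correction applies.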

Context

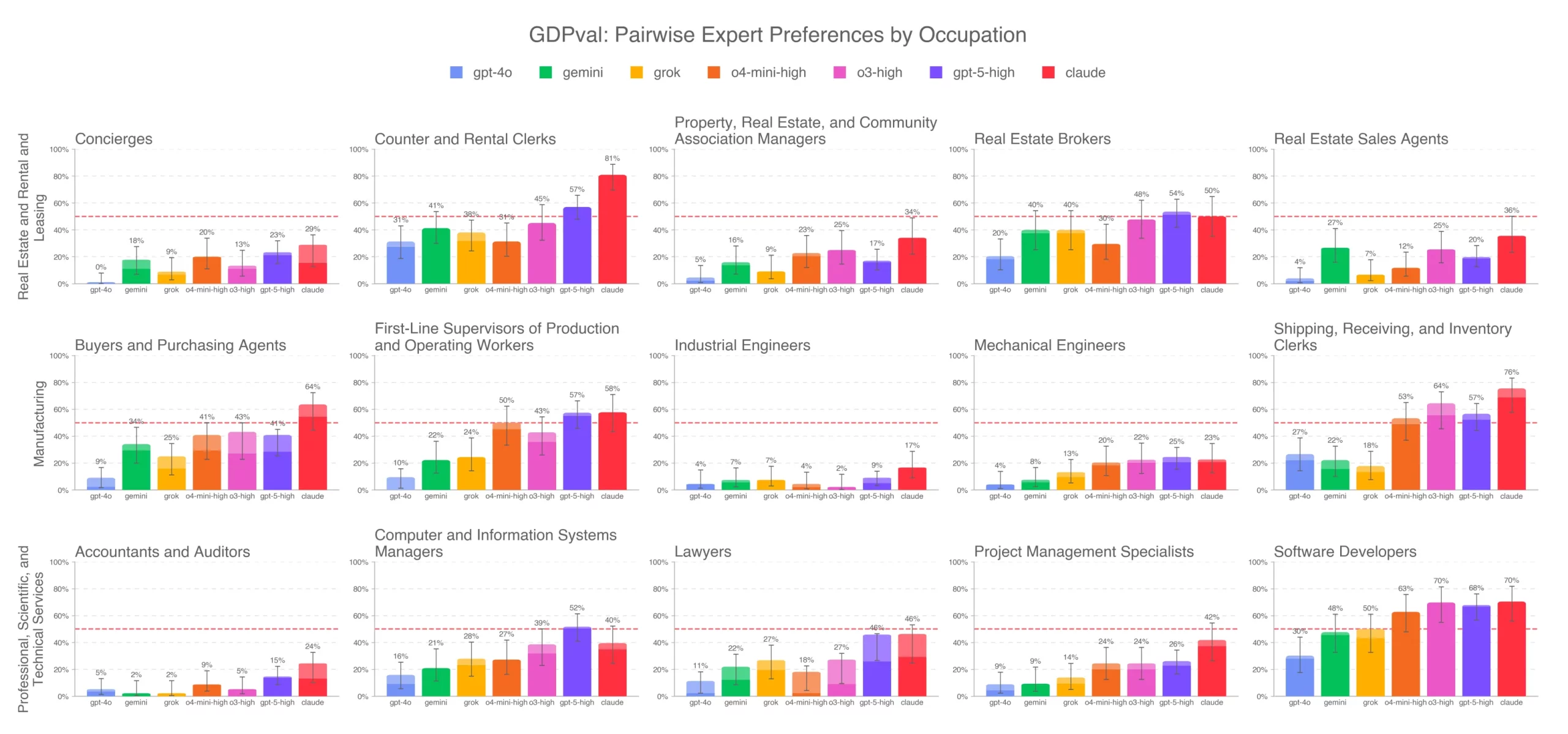

Google's Gemma models are part of a fierce competition to build capable small language models (SLMs) that can run locally. Key players include:

- Meta with its Llama family (Llama 3.1 8B, 70B)

- Microsoft with Phi-3 models

- Mistral AI with Mistral 7B and Mixtral 8x7B

- Apple with its on-device models powering Apple Intelligence

The promise is user privacy, lower latency, and reduced cost. The central technical challenge is closing the performance gap with cloud-based giants like GPT-4o, Claude 3.5 Sonnet, and Google's own Gemini 1.5 Pro.

Mollick's skepticism suggests that while Gemma 4 may excel at fast, single-turn interactions (summarization, Q&A, drafting), the architectural and scale limitations of an on-device model may prevent it from reliably orchestrating the complex, iterative chains of thought and action required for true agency.

gentic.news Analysis

Mollick's critique is a crucial reality check amidst intense hype around small models and on-device AI. It highlights a potential bifurcation in the market: small, fast, private models for discrete tasks versus large, slower, cloud-based models for complex agentic reasoning. This isn't just a technical debate; it has major product implications. Will future "AI assistants" on phones be limited to reactive help, or can they proactively manage projects? Apple's upcoming AI features, heavily reliant on on-device models, will face this exact test.

This skepticism aligns with a trend we've noted: while benchmark scores for SLMs are rising rapidly, real-world evaluations of their reasoning and tool-use capabilities often reveal stark gaps compared to larger models. As we covered in our analysis of the OpenAI o1 model, advanced reasoning and search/planning capabilities have so far been the domain of highly scaled systems. Mollick implies that Gemma 4, for all its speed, may not break this pattern.

The success of Gemma 4 will therefore be measured on two fronts: first, its raw performance on standard benchmarks (MMLU, GSM8K, HumanEval), and second, more importantly, its performance on emerging agentic evaluation frameworks like SWE-bench, AgentBench, or WebArena. If it falls short on the latter, it will support Mollick's point that speed and small size come at the cost of advanced cognitive capabilities.

Frequently Asked Questions

What are agentic workflows in AI?

Agentic workflows refer to AI systems that can autonomously execute multi-step tasks by planning, using tools (like web browsers or code executors), and iterating based on outcomes. Instead of just answering a single prompt, an agentic AI might be asked to "plan a week-long business trip to Berlin," and would then research flights, book hotels, schedule meetings, and compile an itinerary without further human intervention.

What is Google Gemma 4?

Gemma 4 is the latest iteration in Google's family of open, lightweight language models. While full specifications are not yet public, it is designed to be efficient enough to run directly on consumer devices ("on-device") like laptops and smartphones, offering fast inference speeds and enhanced privacy by not requiring data to be sent to the cloud.

Why are small models considered weak for agentic work?

Small models (typically under 70 billion parameters) often struggle with the complex chain-of-thought reasoning, long-horizon planning, and robust self-correction required for agentic workflows. They are more prone to reasoning errors, getting stuck in loops, or failing to properly use external tools, which can cause multi-step tasks to fail. Larger models have generally demonstrated superior performance in these areas.
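A rough way to see why per-step errors matter so much in multi-step tasks is to compound them. The sketch below assumes each step succeeds independently with the same probability and that there is no self-correction, which is a simplification made for illustration. The accuracy figures are made-up inputs, not measurements of Gemma 4 or any other model, and `chain_success` is a hypothetical helper.

```python
def chain_success(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain succeeds.

    Assumes independent steps with identical accuracy and no
    self-correction (an illustrative simplification).
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps

# Illustrative inputs only: three hypothetical per-step accuracies over 10 steps.
for accuracy in (0.99, 0.95, 0.90):
    print(f"{accuracy:.2f} per step -> {chain_success(accuracy, 10):.3f} over 10 steps")
```

Under these assumptions, a ten-step task completes about 90% of the time at 99% per-step accuracy, about 60% at 95%, and about 35% at 90%. Small differences in single-step reliability can therefore turn into large differences in whether a whole workflow finishes.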

What are the main advantages of on-device AI models?

The primary advantages are speed (no network latency), privacy (user data never leaves the device), cost (no API fees), and availability (functionality without an internet connection). This makes them ideal for frequent, simple tasks where immediate response and data security are priorities.