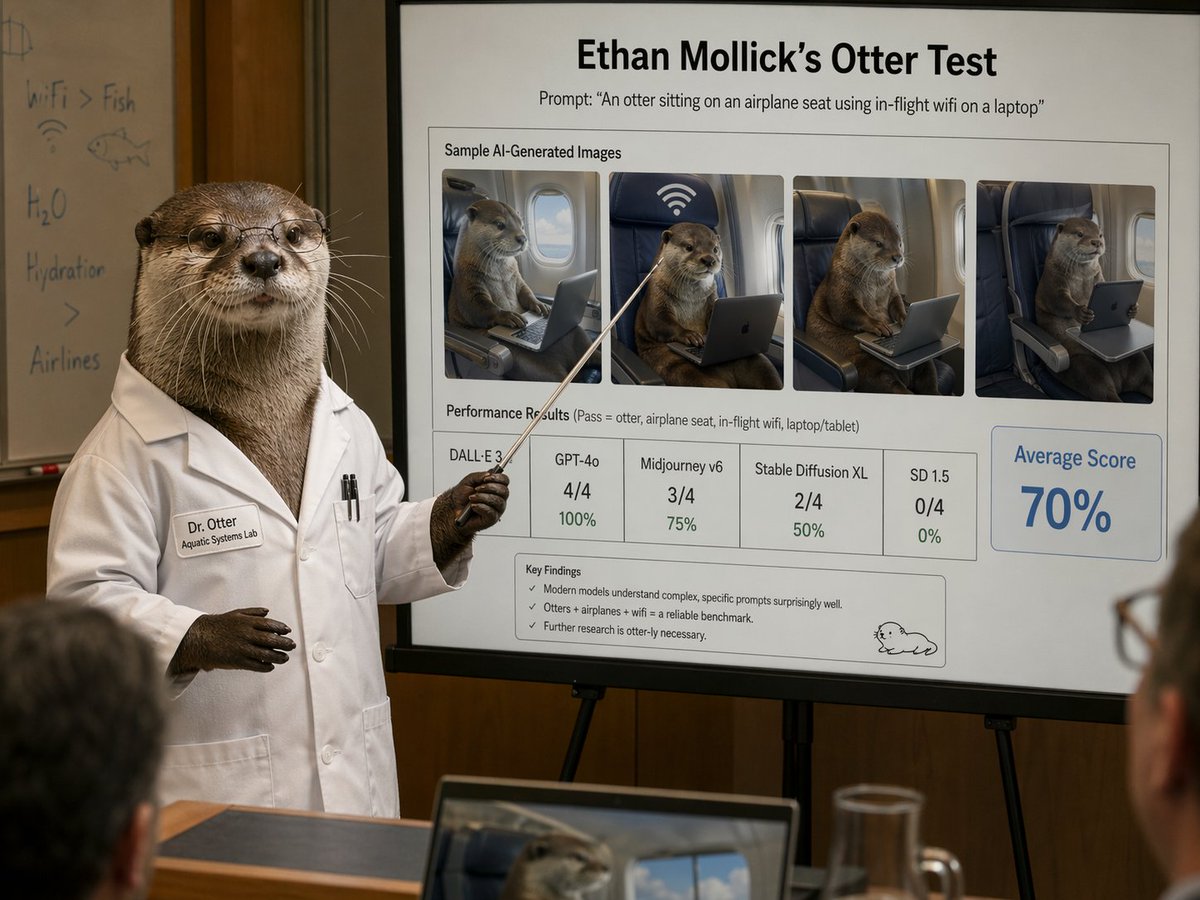

A new analysis of OpenAI's GPT-5.4 reveals the model now matches or exceeds human expert performance on professional tasks 82% of the time, according to updated data from the GDPval benchmark shared by researcher Ethan Mollick. This milestone represents one of the most significant jumps in AI capability since the emergence of large language models and suggests we may be approaching a tipping point where AI becomes a default collaborator rather than just an assistant in professional settings.

The GDPval Benchmark: Measuring Real-World Competence

GDPval is an evaluation framework introduced by OpenAI to test AI systems on tasks that mirror real professional work. The name refers to gross domestic product: its tasks are drawn from occupations in the industries that contribute most to US GDP. Unlike simpler academic benchmarks, GDPval presents models with complex, multi-step assignments that require domain knowledge, reasoning, and practical application, the kind of work typically performed by lawyers, consultants, analysts, researchers, and other knowledge professionals.

According to Mollick's updated analysis, GPT-5.4's performance represents a substantial improvement over previous models. Exact comparison data wasn't provided in his post, but the 82% human-parity rate suggests the model has crossed a critical threshold: on most of the benchmark's tasks, it produces work that experts judge as equivalent to or better than human output.

The Productivity Implications: Saving Hours on Complex Tasks

Perhaps even more striking than the performance metric is the productivity implication Mollick highlights. He calculates that for a typical 7-hour professional task, even accounting for failure rates and the need for human verification of results, AI assistance would save an average of 4 hours and 38 minutes. This represents a 66% reduction in human effort for complex work.

This calculation acknowledges the practical realities of AI integration—that outputs need checking, that some attempts will fail or require revision, and that human oversight remains essential. Yet even with these constraints, the time savings are dramatic. For organizations, this suggests the potential to either dramatically increase output with the same workforce or reallocate human intelligence to higher-value activities that AI cannot yet perform.
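The headline figures can be sanity-checked with simple arithmetic. The sketch below is only a consistency check on the numbers quoted above (a 7-hour task and 4 hours 38 minutes saved); it does not reproduce Mollick's underlying method, which the post does not spell out. The function name `summarize_savings` is a hypothetical helper introduced for illustration.

```python
def summarize_savings(task_minutes: int, saved_minutes: int) -> dict:
    """Derive remaining human time and percent saved from the two quoted figures."""
    remaining = task_minutes - saved_minutes
    return {
        "remaining_minutes": remaining,
        # (hours, minutes) of human effort still required
        "remaining_hm": divmod(remaining, 60),
        # share of the original task time that is saved, in percent
        "percent_saved": round(100 * saved_minutes / task_minutes, 1),
    }


# Figures quoted in the article: a 7-hour task, 4 h 38 min saved.
TASK_MINUTES = 7 * 60
SAVED_MINUTES = 4 * 60 + 38

summary = summarize_savings(TASK_MINUTES, SAVED_MINUTES)
print(summary)
```

On these inputs the remaining human effort comes to 2 hours 22 minutes, and the saved share rounds to the 66% cited above.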

Context: The Accelerating Trajectory of AI Capability

This development follows a pattern of rapid acceleration in AI capabilities over the past few years. When GPT-4 was released in March 2023, it already demonstrated remarkable performance on professional exams and complex reasoning tasks, but still trailed human experts in many domains. The leap to 82% human parity with GPT-5.4 suggests that the gap is closing faster than many anticipated.

The implications extend beyond simple task completion. As AI reaches human parity on professional work, we're likely to see shifts in:

- Education and training: What skills should professionals develop if AI can perform core analytical and creative tasks?

- Work organization: How should teams be structured when AI can handle substantial portions of complex work?

- Quality standards: What constitutes "good enough" when AI can produce expert-level work in minutes rather than hours?

The Verification Challenge: Trust But Verify

Mollick's analysis includes an important caveat: results need checking. Even expert-level AI outputs can contain errors, biases, or subtle flaws. This creates a new professional competency: AI output verification. Professionals will need to develop skills in efficiently validating AI work, recognizing its limitations, and knowing when human intervention is necessary.

This verification requirement also suggests that the most effective human-AI collaborations will involve a division of labor where AI handles the bulk of content generation and analysis, while humans focus on strategic direction, quality control, ethical considerations, and creative synthesis that goes beyond the AI's capabilities.

Economic and Organizational Implications

The productivity gains suggested by this analysis could have profound economic implications. If knowledge workers can accomplish in under two and a half hours what previously took 7 (7 hours minus the 4 hours 38 minutes saved leaves 2 hours 22 minutes), we might see:

- Reduced costs for professional services as efficiency improves

- Increased accessibility of expert-level work for smaller organizations

- Pressure on traditional business models built on billable hours

- New competitive dynamics as AI capability becomes a key differentiator

Organizations that successfully integrate these AI capabilities will likely gain significant advantages over those that don't, potentially leading to a new wave of productivity-driven growth in knowledge-intensive sectors.

Looking Forward: The Human Role in an AI-Augmented Workplace

As AI reaches human parity on more professional tasks, the question becomes not whether AI will replace humans, but how humans will work alongside increasingly capable AI systems. The most valuable professionals of the near future may be those who excel at:

- AI direction and prompting: Effectively guiding AI to produce optimal results

- Synthesis and integration: Combining AI outputs with human insight and context

- Ethical oversight: Ensuring AI work aligns with organizational values and societal norms

- Creative breakthrough: Moving beyond what AI can do to what only humans can imagine

Mollick's updated analysis, based on the GDPval benchmark for GPT-5.4, suggests we're closer to this future than many realized. The 82% human parity figure represents both an extraordinary technological achievement and a challenge to reimagine how professional work gets done.

Source: Ethan Mollick (@emollick) on X/Twitter, analyzing GDPval benchmark results for GPT-5.4