In the terrifying first 72 hours after a child disappears, law enforcement faces a race against time with fragmented data and limited predictive tools. A new AI system called Guardian, detailed in a March 2026 arXiv preprint, represents a potential breakthrough in search-and-rescue technology by combining three distinct AI approaches into a cohesive decision-support framework.

The Critical Problem: Fragmented Data in Time-Sensitive Operations

According to the research paper "Interpretable Markov-Based Spatiotemporal Risk Surfaces for Missing-Child Search Planning with Reinforcement Learning and LLM-Based Quality Assurance," law enforcement agencies typically work with heterogeneous, unstructured case documents that lack standardization. This fragmentation creates significant barriers to rapid, effective search planning during the most critical period for recovery.

Guardian addresses this challenge through an end-to-end pipeline that converts these disparate data sources into a schema-aligned spatiotemporal representation. The system enriches cases with geocoding and transportation context, then generates probabilistic search products covering the crucial first 72 hours after a disappearance.
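The article does not publish Guardian's schema. As a rough illustration of what "schema-aligned" means in practice, the minimal Python sketch below maps differently named fields from hypothetical agency records onto a single record type. The alias table, the `CaseRecord` fields and `normalize` are assumptions made for illustration. In a full pipeline, geocoding and transportation enrichment would fill fields such as `road_context` in a later step.

```python
from dataclasses import dataclass, field
from datetime import datetime

# Hypothetical alias table: different agencies label the same field differently.
FIELD_ALIASES = {
    "lat": ("lat", "latitude", "last_seen_lat"),
    "lon": ("lon", "lng", "longitude", "last_seen_lon"),
    "time": ("time", "last_seen_time", "reported_at"),
}

@dataclass
class CaseRecord:
    """Hypothetical normalized case record; not Guardian's actual schema."""
    case_id: str
    lat: float
    lon: float
    last_seen: datetime
    road_context: list[str] = field(default_factory=list)  # filled by a later enrichment step

    def hours_elapsed(self, now: datetime) -> float:
        """Hours since the last sighting, used to place the case on the 0 to 72 hour timeline."""
        return (now - self.last_seen).total_seconds() / 3600.0

def _pick(raw: dict, key: str):
    """Return the first value whose field name matches one of the aliases for `key`."""
    for alias in FIELD_ALIASES[key]:
        if alias in raw:
            return raw[alias]
    raise KeyError(f"no field matching {key!r} in record")

def normalize(case_id: str, raw: dict) -> CaseRecord:
    """Map one heterogeneous source record onto the common schema."""
    return CaseRecord(
        case_id=case_id,
        lat=float(_pick(raw, "lat")),
        lon=float(_pick(raw, "lon")),
        last_seen=datetime.fromisoformat(str(_pick(raw, "time"))),
    )

# Two agencies, two naming conventions, one schema.
a = normalize("case-A", {"latitude": "40.0", "lng": -75.5, "reported_at": "2026-03-01T18:00:00"})
b = normalize("case-B", {"lat": 1, "lon": 2, "time": "2026-03-01T00:00:00"})
```

Real case files are largely free text, so an extraction step would have to run before this kind of field mapping. The sketch only shows the alignment stage.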

The Three-Layer Architecture: From Prediction to Action

Layer 1: Interpretable Markov Chains

The foundation of Guardian's predictive capability is a Markov chain model designed specifically for interpretability. Unlike black-box neural networks, this sparse model incorporates several psychologically and geographically informed parameters:

- Road accessibility costs reflecting actual travel constraints

- Seclusion preferences accounting for where children might seek shelter

- Corridor bias modeling movement along transportation routes

- Separate day/night parameterizations recognizing behavioral differences

"The Markov chain's interpretability is crucial for law enforcement adoption," the researchers note. "Officers need to understand why the system suggests certain areas, not just receive predictions."
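Here is a small Python sketch of the parameters listed above working inside a sparse Markov chain over map cells. It rests on an assumption that does not come from the paper: each possible move is scored with a softmax-style weight that combines a road-cost penalty, a seclusion pull and a corridor bias, with separate day and night weights. `PARAMS`, the cell attributes and the 07:00 to 19:00 daylight window are all hypothetical.

```python
import math

# Hypothetical weights per period: road-cost penalty, seclusion pull, corridor bias.
PARAMS = {
    "day":   {"cost": 1.0, "seclusion": 0.5, "corridor": 0.8},
    "night": {"cost": 1.5, "seclusion": 1.2, "corridor": 0.3},
}

def transition_matrix(cells, neighbors, period):
    """Row-stochastic transitions stored sparsely: a cell can only stay put or move to a neighbour."""
    w = PARAMS[period]
    P = {}
    for i in cells:
        scores = {}
        for j in [i] + neighbors[i]:
            c = cells[j]
            scores[j] = math.exp(-w["cost"] * c["road_cost"]
                                 + w["seclusion"] * c["seclusion"]
                                 + w["corridor"] * c["corridor"])
        total = sum(scores.values())
        P[i] = {j: s / total for j, s in scores.items()}
    return P

def propagate(p0, cells, neighbors, hours, start_hour=18):
    """Advance the location distribution hour by hour, switching day/night transition matrices."""
    mats = {period: transition_matrix(cells, neighbors, period) for period in PARAMS}
    p = dict(p0)
    for h in range(hours):
        clock = (start_hour + h) % 24
        period = "day" if 7 <= clock < 19 else "night"  # assumed daylight window
        nxt = {k: 0.0 for k in cells}
        for i, mass in p.items():
            for j, prob in mats[period][i].items():
                nxt[j] += mass * prob
        p = nxt
    return p

# Toy three-cell strip: a road, a field and a wooded area (hypothetical values).
cells = {
    "road":  {"road_cost": 0.0, "seclusion": 0.0, "corridor": 1.0},
    "field": {"road_cost": 0.5, "seclusion": 0.5, "corridor": 0.0},
    "woods": {"road_cost": 1.0, "seclusion": 1.0, "corridor": 0.0},
}
neighbors = {"road": ["field"], "field": ["road", "woods"], "woods": ["field"]}
surface_24h = propagate({"road": 1.0}, cells, neighbors, hours=24)
```

Every weight in this sketch is a named number, so a reviewer can check statements such as "at night, the field-to-woods move becomes more likely because the seclusion weight rises." That kind of inspection is the interpretability benefit the researchers describe, although Guardian's actual parameterization may differ.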

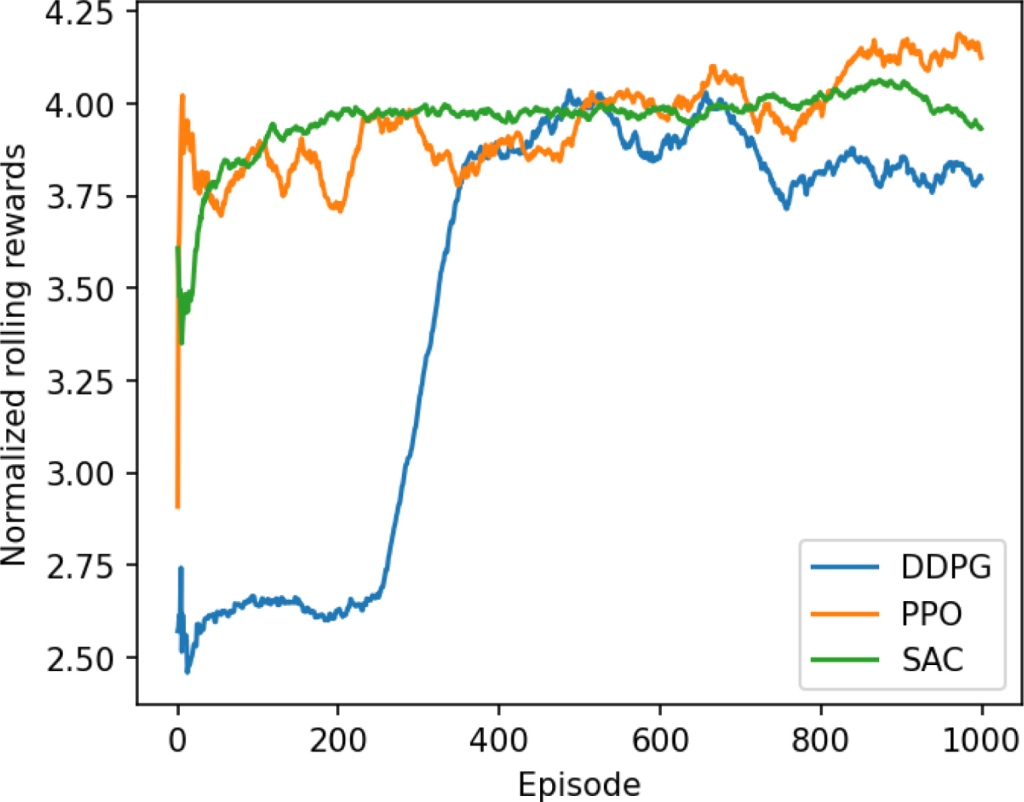

Layer 2: Reinforcement Learning for Operational Planning

The Markov chain produces probability distributions of where a child might be, but these don't directly translate into search plans. This is where reinforcement learning (RL) comes in. The second layer transforms probabilistic predictions into actionable search strategies by optimizing resource allocation across geographic zones.

The RL component considers operational constraints like:

- Available search personnel and equipment

- Time limitations for different search methods

- Terrain difficulties and accessibility

- Changing probabilities as time passes
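The article does not describe Guardian's RL formulation in enough detail to reproduce it. The sketch below therefore shows a simpler, classical search-theory baseline for the same underlying objective: maximize the probability of finding the child given zone probabilities and a limited number of teams. It assumes an exponential detection model, where more effort in a zone gives diminishing returns. It also assumes hypothetical per-zone "sweep" rates that are lower where terrain is harder to search. Teams are assigned greedily by marginal gain. This is explicitly not reinforcement learning. An RL policy becomes useful when, as the list above notes, probabilities shift over time and decisions are sequential.

```python
import heapq
import math

def success_probability(prob, sweep, effort):
    """Sum over zones of P(child in zone) * P(detected | effort), with exponential detection."""
    return sum(prob[z] * (1.0 - math.exp(-sweep[z] * effort.get(z, 0))) for z in prob)

def greedy_allocate(prob, sweep, teams):
    """Assign search teams one at a time to the zone with the largest marginal gain."""
    effort = {z: 0 for z in prob}

    def gain(z):
        e = effort[z]
        return prob[z] * (math.exp(-sweep[z] * e) - math.exp(-sweep[z] * (e + 1)))

    # heapq is a min-heap, so store negated gains; each zone has exactly one live entry.
    heap = [(-gain(z), z) for z in prob]
    heapq.heapify(heap)
    for _ in range(teams):
        _, z = heapq.heappop(heap)
        effort[z] += 1
        heapq.heappush(heap, (-gain(z), z))  # only this zone's gain changed
    return effort

# Hypothetical inputs: zone probabilities from the Markov layer, and per-team
# sweep rates that are lower where terrain is harder to search.
prob = {"park": 0.5, "river": 0.3, "mall": 0.2}
sweep = {"park": 0.8, "river": 0.3, "mall": 1.0}
plan = greedy_allocate(prob, sweep, teams=5)
```

With these toy numbers, the fifth team goes to the river rather than a third team to the park. The park still has the highest probability, but its marginal gain has dropped below the river's.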

Layer 3: LLM-Based Quality Assurance

In a novel application of large language models, Guardian's third layer performs post hoc validation of the RL-generated search plans before release. The LLM evaluates plans against established search-and-rescue protocols, checks for logical consistency, and identifies potential failure modes that might have been overlooked.

This quality assurance step addresses a critical concern in high-stakes AI applications: ensuring that automated systems don't produce dangerous or illogical recommendations that could waste precious time or resources.
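The paper assigns plan review to an LLM. A common complement in such pipelines is to run cheap deterministic checks for hard constraints first, before any model-based review. The minimal sketch below assumes a hypothetical plan format of zone, teams and time window. It flags three kinds of problems: over-committed personnel, unknown zones or invalid time windows, and high-probability zones that receive no effort. None of these names or rules come from the paper.

```python
from dataclasses import dataclass

@dataclass
class Assignment:
    """Hypothetical plan entry; times are hours since the last sighting."""
    zone: str
    teams: int
    start_hour: float
    end_hour: float

def check_plan(plan, zone_probs, available_teams, horizon=72.0, top_k=3):
    """Return human-readable problems; an empty list means no rule fired."""
    problems = []
    # Team load only increases when an assignment starts, so checking at each start time finds the peak.
    for t in sorted({a.start_hour for a in plan}):
        active = sum(a.teams for a in plan if a.start_hour <= t < a.end_hour)
        if active > available_teams:
            problems.append(f"{active} teams needed at hour {t}, only {available_teams} available")
    for a in plan:
        if a.zone not in zone_probs:
            problems.append(f"unknown zone {a.zone!r}")
        if not (0 <= a.start_hour < a.end_hour <= horizon):
            problems.append(f"zone {a.zone!r}: invalid time window {a.start_hour}-{a.end_hour}")
    # Soft rule: the top-k probability zones should receive some effort.
    covered = {a.zone for a in plan}
    for z in sorted(zone_probs, key=zone_probs.get, reverse=True)[:top_k]:
        if z not in covered:
            problems.append(f"high-probability zone {z!r} has no assignment")
    return problems

zone_probs = {"park": 0.5, "river": 0.3, "mall": 0.2}
good_plan = [Assignment("park", 2, 0, 6), Assignment("river", 1, 0, 6), Assignment("mall", 1, 6, 12)]
bad_plan = [Assignment("park", 2, 0, 6), Assignment("river", 2, 3, 8)]
```

Checks like these catch mechanical errors reliably. An LLM reviewer can then concentrate on protocol-level and contextual issues that are hard to encode as rules.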

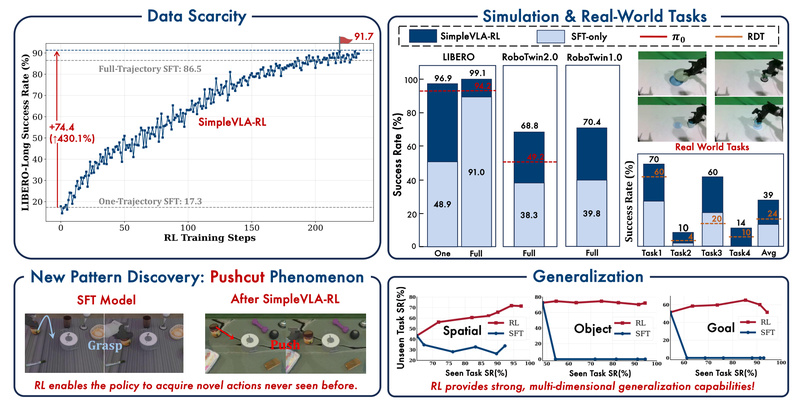

Testing and Results: A Synthetic Case Study

The researchers tested Guardian using a synthetic but realistic case study, reporting quantitative outputs across 24-, 48-, and 72-hour horizons. Their analysis included sensitivity testing, failure mode identification, and tradeoff evaluations between different search strategies.
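The article does not list the specific metrics reported at each horizon. As one plausible example of a horizon-by-horizon summary, the sketch below computes two things for each probability surface. The first is the smallest set of zones that together hold 80% of the probability. The second is the surface's Shannon entropy, which measures how spread out the probability is. The function names, the 80% target and the toy surfaces are hypothetical illustrations, not results from the paper.

```python
import math

def zones_for_coverage(surface, target=0.8):
    """Highest-probability zones, in order, until their combined mass reaches `target`."""
    chosen, mass = [], 0.0
    for zone, p in sorted(surface.items(), key=lambda kv: kv[1], reverse=True):
        if mass >= target:
            break
        chosen.append(zone)
        mass += p
    return chosen, mass

def entropy_bits(surface):
    """Shannon entropy in bits; larger values mean the probability is more spread out."""
    return -sum(p * math.log2(p) for p in surface.values() if p > 0)

def summarize(surfaces, target=0.8):
    """One row per horizon: zones needed for `target` coverage and the surface's entropy."""
    return {
        hours: {"zones": zones_for_coverage(s, target)[0], "entropy_bits": entropy_bits(s)}
        for hours, s in sorted(surfaces.items())
    }

# Toy surfaces (not results from the paper): probability spreads out as time passes.
surfaces = {
    24: {"A": 0.6, "B": 0.3, "C": 0.1},
    48: {"A": 0.4, "B": 0.3, "C": 0.2, "D": 0.1},
    72: {"A": 0.25, "B": 0.25, "C": 0.25, "D": 0.25},
}
report = summarize(surfaces)
```

A growing zone list and rising entropy from 24 to 72 hours is the quantitative form of the intuition that the search area widens as time passes.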

According to the authors, the synthetic results show that the three-layer architecture produces "interpretable priors for zone optimization and human review." In practice, this means the system supplies both specific recommendations and the reasoning behind them, so human operators can make informed final decisions.

Implementation Challenges and Ethical Considerations

While Guardian represents a significant technical advance, the paper acknowledges several implementation challenges:

- Data standardization across different jurisdictions

- Integration with existing law enforcement systems

- Training requirements for officers using the system

- Validation against real-world outcomes (difficult due to privacy concerns)

Ethical considerations are particularly important given the sensitive nature of missing-child cases. The researchers emphasize that Guardian is designed as a decision-support tool, not an autonomous decision-maker. Human oversight remains essential, with the system providing recommendations that experienced investigators can accept, modify, or reject based on their expertise.

The Broader Context: AI in Public Safety

Guardian arrives amid growing interest in applying AI to public safety challenges. Recent arXiv publications have explored related areas including multi-level meta-reinforcement learning frameworks (March 11, 2026) and verifiable reasoning systems for LLM-based recommendations (March 10, 2026).

What distinguishes Guardian is its focus on interpretability and human-AI collaboration in life-or-death situations. The system combines the strengths of three AI approaches: the transparency of Markov models, the optimization capability of reinforcement learning, and the reasoning-based validation of LLMs. In doing so, it aims to enhance rather than replace human judgment.

Future Directions and Implications

The Guardian system points toward several important developments in applied AI:

- Hybrid AI architectures that combine multiple techniques for complementary strengths

- Explainable AI in high-stakes domains where trust and understanding are essential

- Validation frameworks for AI systems in safety-critical applications

- Domain-specific adaptations of general AI techniques like LLMs

As the researchers note, future work will need to address scalability across different geographic regions, adaptation to various types of missing-person cases, and integration with real-time data sources like traffic cameras and mobile device locations (with appropriate privacy protections).

Source: arXiv preprint "Interpretable Markov-Based Spatiotemporal Risk Surfaces for Missing-Child Search Planning with Reinforcement Learning and LLM-Based Quality Assurance" (March 9, 2026)