A new research model called LPM 1.0 claims to solve what its researchers call the "performance trilemma" for AI avatars: maintaining consistent character identity, generating infinite-length content, and operating in real time, all at once. The 17-billion-parameter real-time diffusion model reportedly sustains consistent character performance for over 60,000 seconds (approximately 16.7 hours) of conversational video.

What the Model Does

LPM 1.0 (likely standing for "Long-form Performance Model") is specifically designed for generating conversational videos where AI avatars maintain stable identity throughout extended interactions. Unlike previous video generation models that struggle with character consistency beyond short clips, LPM 1.0 claims to handle "infinite-length" conversations while preserving the same visual identity of the generated avatar.

The core innovation appears to be solving what the researchers term the "performance trilemma"—simultaneously achieving:

- Stable identity: The same character appearance throughout the video

- Infinite length: No practical limit on conversation duration

- Real-time generation: Fast enough for interactive applications

Technical Architecture

While the tweet announcement doesn't provide detailed architectural specifics, several key technical aspects can be inferred:

Model Size: At 17 billion parameters, LPM 1.0 is larger than many specialized video models but far smaller than the largest language models, such as GPT-4 (unconfirmed reports put it at roughly 1.7 trillion parameters). Because GPT-4 is a language model rather than a diffusion model, that comparison indicates scale only. The size suggests a balance between capability and computational efficiency.

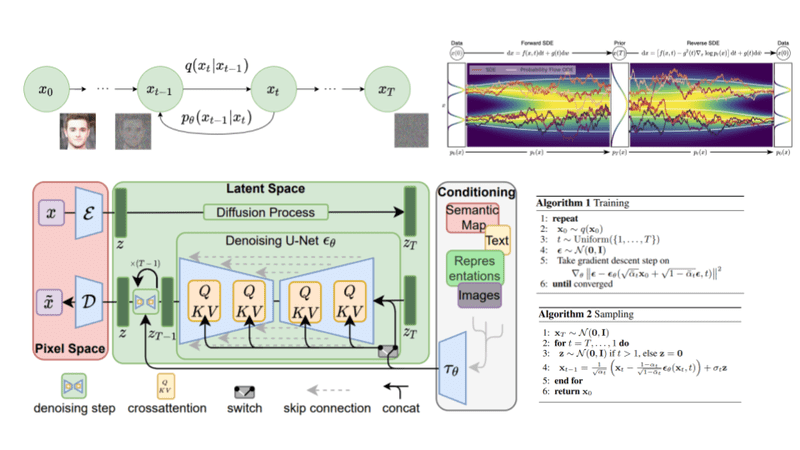

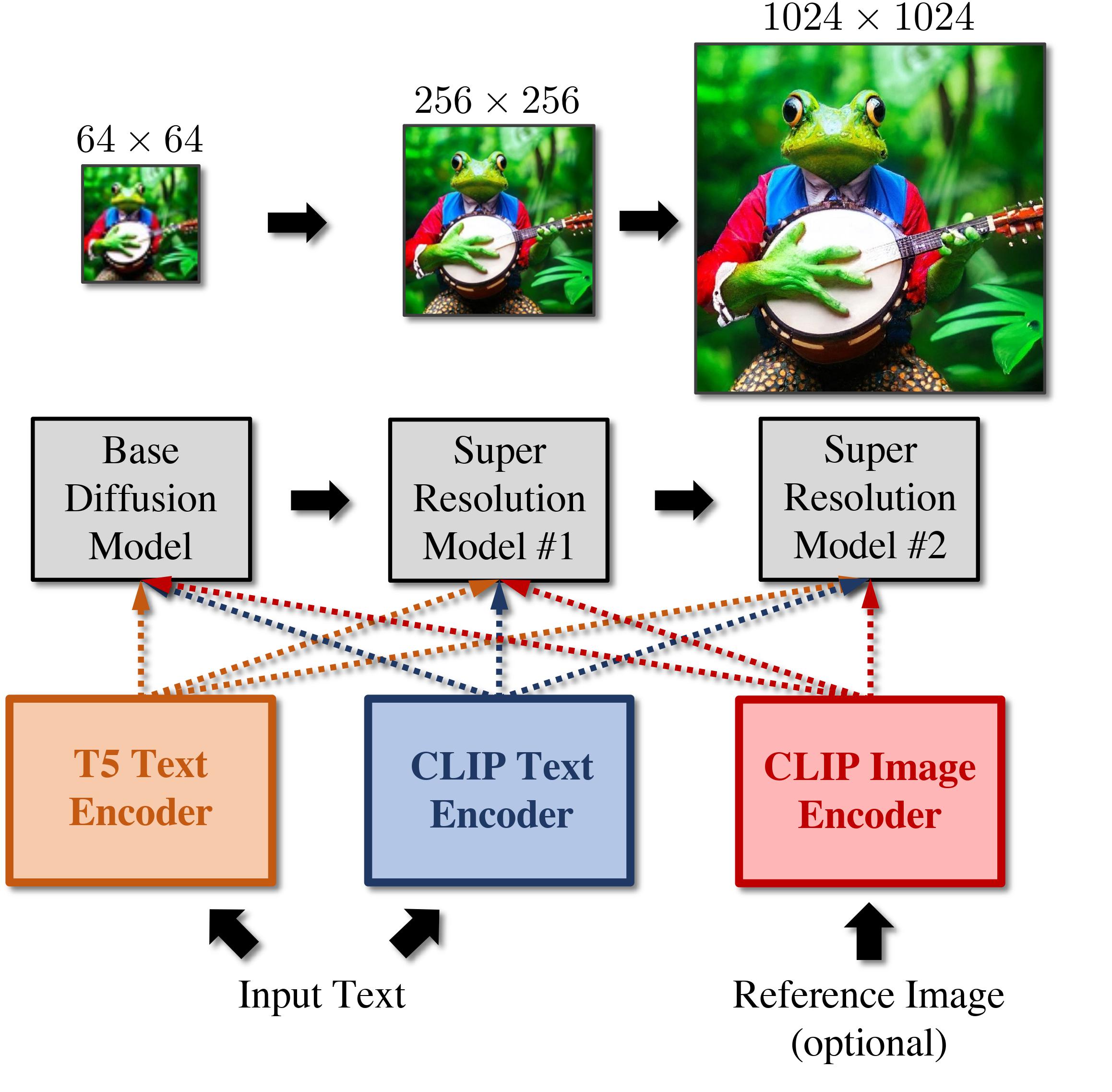

Diffusion Approach: The model uses diffusion techniques, which have become standard for high-quality image and video generation. The "real-time" designation suggests optimizations for inference speed, potentially through techniques like latent space diffusion, distillation, or specialized attention mechanisms.

Consistency Mechanism: Maintaining stable identity across 60,000+ seconds requires novel approaches to temporal consistency. This likely involves some form of memory mechanism, cross-frame attention, or identity embedding that persists throughout generation.
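
To make the idea of a persistent identity embedding combined with bounded memory concrete, here is a minimal toy sketch. It is not LPM 1.0's method, which the announcement does not describe. The names generate_chunk and stream_chunks, the window size, and the embedding values are all hypothetical, and the sampler is a stub that returns a label rather than video frames. The only point it illustrates is that a fixed identity vector plus a fixed-size context window keeps memory constant regardless of how many chunks are produced.

```python
from collections import deque
from typing import Deque, Iterator, List, Tuple

Embedding = Tuple[float, ...]


def generate_chunk(identity: Embedding, context: List[str], index: int) -> str:
    """Hypothetical stand-in for a diffusion sampler.

    A real model would return frames conditioned on the identity embedding
    and the recent context; this stub only returns a descriptive label.
    """
    return f"chunk-{index}(ctx={len(context)})"


def stream_chunks(identity: Embedding, num_chunks: int, window: int = 4) -> Iterator[str]:
    """Yield chunks one at a time while keeping at most `window` past chunks."""
    # deque(maxlen=...) silently discards the oldest entry once it is full,
    # so the context never grows beyond `window` items.
    context: Deque[str] = deque(maxlen=window)
    for index in range(num_chunks):
        # The same identity embedding is passed to every chunk (assumption).
        chunk = generate_chunk(identity, list(context), index)
        context.append(chunk)
        yield chunk


chunks = list(stream_chunks(identity=(0.1, 0.2, 0.3), num_chunks=10, window=4))
print(chunks[0])   # first chunk sees an empty context
print(chunks[-1])  # later chunks see exactly `window` previous chunks
```

Whether a fixed window alone is enough to prevent drift over many hours is exactly the open question; the sketch shows only the bookkeeping, not the quality of the result.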

Performance Claims

The primary metric highlighted is 60,000 seconds of consistent character performance, equivalent to approximately 16.7 hours of continuous video. If accurate, this would be a significant leap over previous video generation models, which typically struggle with consistency beyond a minute or two.

For context:

- Most current video diffusion models maintain consistency for 30-120 seconds

- State-of-the-art models like Sora from OpenAI reportedly handle up to 60 seconds with high consistency

- LPM 1.0's claimed 60,000 seconds would be roughly a 1,000x increase in duration over a 60-second baseline (between 500x and 2,000x against the 30-120 second range above)
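
The arithmetic behind these figures is easy to check. The short sketch below uses only the numbers quoted in this article (the 60,000-second claim and the 30, 60, and 120 second baselines); the variable and function names are illustrative.

```python
# Figures quoted in the article; the baselines are the article's own estimates.
CLAIMED_SECONDS = 60_000
BASELINES_SECONDS = {"low": 30, "sora_reported": 60, "high": 120}


def seconds_to_hours(seconds: float) -> float:
    """Convert a duration in seconds to hours."""
    return seconds / 3600


def improvement_factors(claimed: float, baselines: dict) -> dict:
    """Ratio of the claimed duration to each baseline duration."""
    return {name: claimed / value for name, value in baselines.items()}


hours = seconds_to_hours(CLAIMED_SECONDS)
factors = improvement_factors(CLAIMED_SECONDS, BASELINES_SECONDS)

print(f"{hours:.1f} hours")  # 16.7 hours
for name, factor in factors.items():
    print(f"{name}: {factor:,.0f}x")  # low: 2,000x, sora_reported: 1,000x, high: 500x
```

The multiplier therefore depends heavily on which baseline is chosen, which is worth keeping in mind when the headline number is quoted.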

The "real-time" designation suggests the model can generate video at or near playback speed, making it suitable for interactive applications like virtual assistants, AI companions, or educational avatars.
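
One common way to make "at or near playback speed" measurable is a real-time factor: seconds of video produced per second of wall-clock time. The sketch below is an illustration only. The function names, the 1.0 threshold, and the sample numbers are assumptions, not figures from the announcement.

```python
def real_time_factor(frames_generated: int, wall_clock_seconds: float, playback_fps: float) -> float:
    """Seconds of video produced per second of wall-clock time.

    A value of 1.0 or more means generation keeps up with playback.
    """
    if wall_clock_seconds <= 0 or playback_fps <= 0:
        raise ValueError("wall_clock_seconds and playback_fps must be positive")
    video_seconds = frames_generated / playback_fps
    return video_seconds / wall_clock_seconds


def is_real_time(rtf: float, threshold: float = 1.0) -> bool:
    """True if the real-time factor meets the (assumed) threshold."""
    return rtf >= threshold


# Hypothetical measurements, not taken from the announcement.
keeps_up = real_time_factor(frames_generated=250, wall_clock_seconds=10.0, playback_fps=25.0)
falls_behind = real_time_factor(frames_generated=200, wall_clock_seconds=10.0, playback_fps=25.0)

print(keeps_up, is_real_time(keeps_up))          # 1.0 True
print(falls_behind, is_real_time(falls_behind))  # 0.8 False
```

Interactive use also depends on latency to the first frame, which this throughput measure does not capture.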

Potential Applications

Virtual Assistants & Customer Service: AI avatars that can maintain consistent appearance throughout extended customer interactions.

Educational Content: Virtual tutors that can deliver hours of consistent instructional video.

Entertainment & Gaming: NPCs with persistent visual identities throughout long gameplay sessions.

Therapeutic Applications: Consistent virtual therapists for extended counseling sessions.

Limitations & Unknowns

The announcement lacks several critical details that would be needed for full technical assessment:

Benchmark Details: No specific metrics are given beyond the 60,000-second claim, and there is no comparison against established video generation benchmarks.

Training Data: Unknown what data was used to train the 17B-parameter model.

Computational Requirements: No information on inference hardware requirements or training costs.
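
Although the announcement gives no hardware figures, the parameter count alone sets a floor on memory for the weights. The sketch below is a back-of-the-envelope estimate under stated assumptions: the numeric precision used by LPM 1.0 is not known, and the estimate ignores activations, caches, and any auxiliary networks, so real requirements would be higher.

```python
PARAMS = 17_000_000_000  # 17 billion parameters, as stated in the announcement

# Bytes per parameter for common numeric formats. Which one LPM 1.0 uses is unknown.
BYTES_PER_PARAM = {"fp32": 4, "fp16/bf16": 2, "int8": 1}


def weight_memory_gb(params: int, bytes_per_param: int) -> float:
    """Memory needed to hold the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


estimates = {fmt: weight_memory_gb(PARAMS, size) for fmt, size in BYTES_PER_PARAM.items()}
for fmt, gigabytes in estimates.items():
    print(f"{fmt}: {gigabytes:.0f} GB")  # fp32: 68 GB, fp16/bf16: 34 GB, int8: 17 GB
```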

Video Quality: No samples or objective quality metrics provided in the announcement.

Identity Definition: "Stable identity" isn't quantitatively defined—does it mean pixel-perfect consistency or perceptual similarity?
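
One possible way to make "stable identity" quantitative on the perceptual side is to compare an identity embedding of a reference frame with embeddings of later frames using cosine similarity. The sketch below assumes such embeddings already exist, for example from a face recognition network. The embedding values, the 0.9 threshold, and the function names are hypothetical, and nothing here reflects how the LPM 1.0 authors evaluate identity.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("vectors must be non-zero")
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (norm_a * norm_b)


def identity_scores(reference: Sequence[float], frames: List[Sequence[float]]) -> List[float]:
    """Similarity of each sampled frame's embedding to the reference embedding."""
    return [cosine_similarity(reference, frame) for frame in frames]


def is_stable(scores: List[float], threshold: float = 0.9) -> bool:
    """True if even the worst frame stays above the (assumed) threshold."""
    return min(scores) >= threshold


# Toy 3-dimensional embeddings; real identity embeddings have hundreds of dimensions.
reference = [1.0, 0.0, 0.0]
sampled = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.0, 1.0, 0.0]]

scores = identity_scores(reference, sampled)
print([round(s, 3) for s in scores])
print(is_stable(scores), is_stable(scores[:2]))
```

Using the minimum score rather than the mean makes the check sensitive to a single badly drifted frame, which matters when the claim covers many hours of video.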

gentic.news Analysis

This development represents a significant step toward practical, long-form AI avatar applications. The 60,000-second consistency claim, if validated through peer review and independent testing, would mark a paradigm shift in video generation capabilities. Previous approaches to long-form video either sacrificed consistency (resulting in character drift) or required computationally expensive post-processing to maintain identity.

From a technical perspective, achieving this with a 17B-parameter model would suggest efficient architectural choices. For comparison, OpenAI has not disclosed Sora's size; it is rumored to be significantly larger while maintaining much shorter consistency windows. If the claims hold, the efficiency gains could make long-form avatar generation accessible to more developers and applications.

The timing aligns with increasing industry focus on AI avatars and digital humans. This follows Meta's recent advancements with Codec Avatars and NVIDIA's work on Omniverse Avatar—both targeting realistic, consistent digital humans but through different technical approaches. LPM 1.0's diffusion-based method offers an alternative path that may be more scalable for certain applications.

However, the announcement's brevity raises questions. Without a published paper, code, or benchmarks, the community cannot verify the claims. The "performance trilemma" framing is useful for understanding the problem space, but we need to see how LPM 1.0 compares quantitatively to existing models on standard video generation benchmarks.

For practitioners, the key question is whether this represents a fundamental architectural breakthrough or an incremental improvement with aggressive marketing. If the former, we should expect to see the techniques incorporated into other video generation models rapidly. If the latter, LPM 1.0 may remain a specialized solution for specific avatar applications.

Frequently Asked Questions

What is LPM 1.0?

LPM 1.0 is a 17-billion-parameter real-time diffusion model designed to generate infinite-length conversational videos with stable character identity. It claims to solve the "performance trilemma" by maintaining consistent avatar appearance across extremely long durations (over 60,000 seconds) while operating in real time.

How does LPM 1.0 compare to other video generation models?

Based on the limited information available, LPM 1.0 appears specialized for long-form conversational videos with consistent characters, whereas models like Sora, Stable Video Diffusion, and Pika focus on shorter, more varied video generation. The 60,000-second consistency claim represents a significant duration increase over existing models, which typically handle 30-120 seconds of consistent content.

What are the potential applications of LPM 1.0?

Primary applications include virtual assistants, customer service avatars, educational tutors, entertainment characters, and therapeutic companions—any scenario requiring extended interactions with a visually consistent AI avatar. The real-time capability makes it suitable for interactive applications rather than just pre-rendered content.

When will LPM 1.0 be available for developers?

The announcement doesn't specify release timing or availability. Typically, research models like this might be released as papers first, then potentially as open-source implementations or commercial APIs. Developers should watch for the forthcoming technical paper referenced in the tweet for more details on accessibility.