The Problem It Solves

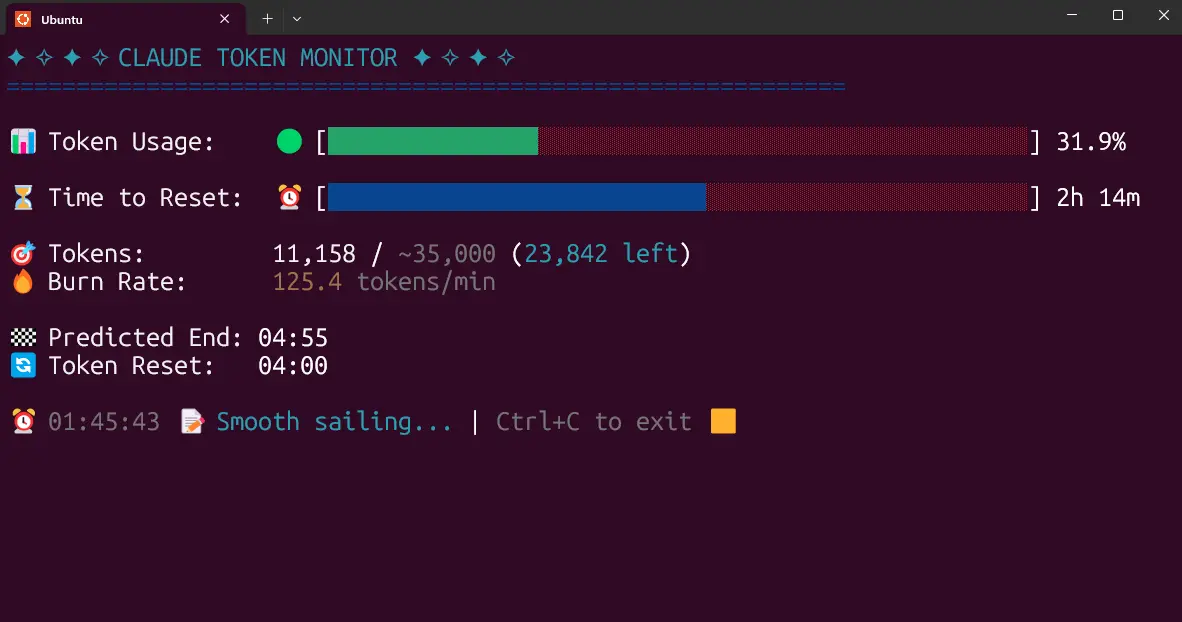

When a Claude Code agent that uses MCP servers behaves unexpectedly, debugging is a nightmare. Tool calls vanish into stderr, you can't see which arguments were actually sent, and there's no way to reproduce the failure. This is the gap mcpscope fills: it is an observability and replay-testing layer built specifically for MCP servers.

How It Works (Install in 2 Minutes)

mcpscope is a transparent proxy written in Go. Install it, point it at your MCP server, and it intercepts every JSON-RPC message, with no changes to your server code:

go install github.com/td-02/mcp-observer@latest

mcpscope proxy --server ./your-mcp-server --db traces.db

Open http://localhost:4444 and you get a live dashboard showing every tool call, latency percentiles (P50/P95/P99), and error timelines.
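For readers unfamiliar with the P50/P95/P99 notation, here is a minimal Python sketch of how such latency percentiles can be computed from recorded call durations. It uses the nearest-rank method, which is an assumption; the article does not say which method mcpscope uses, and the function names `percentile` and `latency_summary` are illustrative only.

```python
def percentile(samples, p):
    """Nearest-rank percentile for an integer p in 1..100.

    Returns the smallest sample such that at least p% of all samples
    are less than or equal to it.
    """
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    if not 1 <= p <= 100:
        raise ValueError("p must be between 1 and 100")
    ordered = sorted(samples)
    # Integer ceiling of p * n / 100, which avoids floating-point rounding.
    rank = (p * len(ordered) + 99) // 100
    return ordered[rank - 1]


def latency_summary(latencies_ms):
    """Summarize call latencies (milliseconds) as P50, P95, and P99."""
    return {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}
```

P50 describes the typical call, while P95 and P99 expose the slow tail that an average would hide.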

For Python MCP servers (common with Claude Code):

mcpscope proxy -- uv run server.py

For HTTP MCP servers:

mcpscope proxy --transport http --upstream-url http://127.0.0.1:8080
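Whatever the transport, the interception step can be pictured with a short Python sketch: a pass-through layer that pairs each JSON-RPC request with its response by `id` and records the method, arguments, error, and latency. This is not mcpscope's implementation (which is written in Go). The `TraceRecorder` class and its record fields are hypothetical, and the sketch ignores JSON-RPC batches.

```python
import json
import time


class TraceRecorder:
    """Pairs JSON-RPC requests with responses by id (hypothetical sketch)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._pending = {}  # request id -> (method, params, start time)
        self.traces = []    # completed request/response records

    def on_client_message(self, raw: str) -> str:
        """Note a request heading to the server, then pass it through unchanged."""
        msg = json.loads(raw)
        if "id" in msg and "method" in msg:  # notifications carry no id
            self._pending[msg["id"]] = (msg["method"], msg.get("params"), self._clock())
        return raw

    def on_server_message(self, raw: str) -> str:
        """Match a response to its request, then pass it through unchanged."""
        msg = json.loads(raw)
        entry = self._pending.pop(msg.get("id"), None)
        if entry is not None:
            method, params, started = entry
            self.traces.append({
                "method": method,
                "params": params,
                "error": msg.get("error"),
                "latency_ms": (self._clock() - started) * 1000.0,
            })
        return raw
```

Because both methods return the raw message untouched, the client and server see exactly the traffic they would see without the proxy, which is what "transparent" means here.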

The Killer Feature: Replay Testing

This is what changes everything for Claude Code workflows. Once mcpscope records production traces, you can export and replay them in CI:

# Export real production traces

mcpscope export --config ./mcpscope.example.json --output traces.json --limit 200

# Replay in CI — fail on errors or latency regressions

mcpscope replay --input traces.json --fail-on-error --max-latency-ms 500 -- uv run server.py

Now you can take a session where Claude Code behaved unexpectedly, export the exact MCP traces, and turn them into a reproducible test case. No more "it only happens in production."
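The replay idea itself is simple enough to sketch in a few lines of Python. The version below re-sends recorded calls to a handler and collects failures, with parameters that mirror the `--fail-on-error` and `--max-latency-ms` flags shown above. The trace format (a list of dicts with `method` and `params`) and the `replay` function are assumptions for illustration, not mcpscope's actual export format or logic.

```python
import time


def replay(traces, handler, fail_on_error=True, max_latency_ms=None,
           clock=time.monotonic):
    """Re-send recorded calls to `handler` and collect failures (hypothetical sketch).

    Returns a list of (trace index, reason) tuples; an empty list means
    the replay passed.
    """
    failures = []
    for index, trace in enumerate(traces):
        started = clock()
        try:
            handler(trace["method"], trace["params"])
            error = None
        except Exception as exc:  # any failure raised by the server counts
            error = str(exc)
        elapsed_ms = (clock() - started) * 1000.0
        if error is not None and fail_on_error:
            failures.append((index, f"error: {error}"))
        elif max_latency_ms is not None and elapsed_ms > max_latency_ms:
            failures.append((index, f"latency {elapsed_ms:.0f} ms > {max_latency_ms} ms"))
    return failures
```

In a CI job, a non-empty result would translate into a non-zero exit status so the pipeline fails.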

Catch Schema Drift Before It Breaks Your Agent

When an upstream MCP server changes its tool schemas, Claude Code agents can break silently. mcpscope's snapshot/diff workflow catches this:

# Capture baseline

mcpscope snapshot --server ./your-mcp-server --output baseline.json

git add baseline.json && git commit -m "chore: add MCP baseline snapshot"

# On every PR:

mcpscope snapshot --server ./your-mcp-server --output current.json

mcpscope diff baseline.json current.json --exit-code

The --exit-code flag makes the diff CI-friendly: it exits non-zero on breaking changes, so your PR check fails before the change reaches your Claude Code agent.
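To illustrate what "breaking" can mean for a tool schema, here is a minimal Python sketch that compares two snapshots. It assumes each snapshot maps a tool name to its JSON Schema input definition (`properties` and `required`), and it treats removed tools, removed arguments, changed argument types, and newly required arguments as breaking. Both the snapshot layout and the `breaking_changes` function are hypothetical; mcpscope's own snapshot format and diff rules may differ.

```python
def breaking_changes(baseline, current):
    """List changes that could break a client built against `baseline`."""
    problems = []
    for name, old in sorted(baseline.items()):
        new = current.get(name)
        if new is None:
            problems.append(f"tool removed: {name}")
            continue
        old_props = old.get("properties", {})
        new_props = new.get("properties", {})
        # A newly required argument breaks callers that never send it.
        added_required = set(new.get("required", [])) - set(old.get("required", []))
        for arg in sorted(added_required):
            problems.append(f"{name}: new required argument '{arg}'")
        # Removed or retyped arguments break callers that still send them.
        for arg, spec in sorted(old_props.items()):
            if arg not in new_props:
                problems.append(f"{name}: argument removed '{arg}'")
            elif spec.get("type") != new_props[arg].get("type"):
                problems.append(f"{name}: argument '{arg}' changed type")
    return problems
```

New tools and new optional arguments are deliberately not reported, since existing callers keep working when they appear.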

Why This Matters for Claude Code Users

MCP is becoming Claude Code's standard way to connect to external tools (32 sources in our knowledge graph show Claude Code uses MCP). Recent research also reports that 66% of MCP servers have critical security vulnerabilities, which makes it all the more important to see what is actually happening between Claude Code and your MCP servers. mcpscope gives you that visibility.

Key features in v0.1.0:

- Live web dashboard with tool call feed and latency views

- Alerts to Slack/PagerDuty/webhooks

- OpenTelemetry export (plugs into Grafana/Jaeger)

- SQLite trace store (local by default, Postgres-ready)

- Workspace + environment scoping (prod vs staging)

- Docker + Docker Compose included

MIT licensed, no telemetry, runs air-gapped. Your tool call data stays local.

Try It This Week

If you're using Claude Code with MCP servers (database connectors, API tools, file systems), install mcpscope and run it against your development server for a day. You'll immediately see:

- Which tools Claude Code calls most frequently

- Latency patterns that might be slowing down your agent

- Any errors that were previously hidden in stderr

Then export those traces and add a replay test to your CI pipeline. This is the observability layer MCP has been missing.