Chinese AI company MiniMax has announced the availability of its M2.7 model on NVIDIA's GPU-accelerated NIM (NVIDIA Inference Microservice) endpoints. The deployment, announced via a company tweet, specifically includes support for the OpenClaw and NemoClaw frameworks, positioning the model within a standardized, high-performance inference ecosystem.

Key Takeaways

- Chinese AI firm MiniMax has made its M2.7 model available through NVIDIA's GPU-accelerated NIM endpoints.

- This deployment includes support for the OpenClaw and NemoClaw frameworks, integrating it into a major AI development ecosystem.

What's New

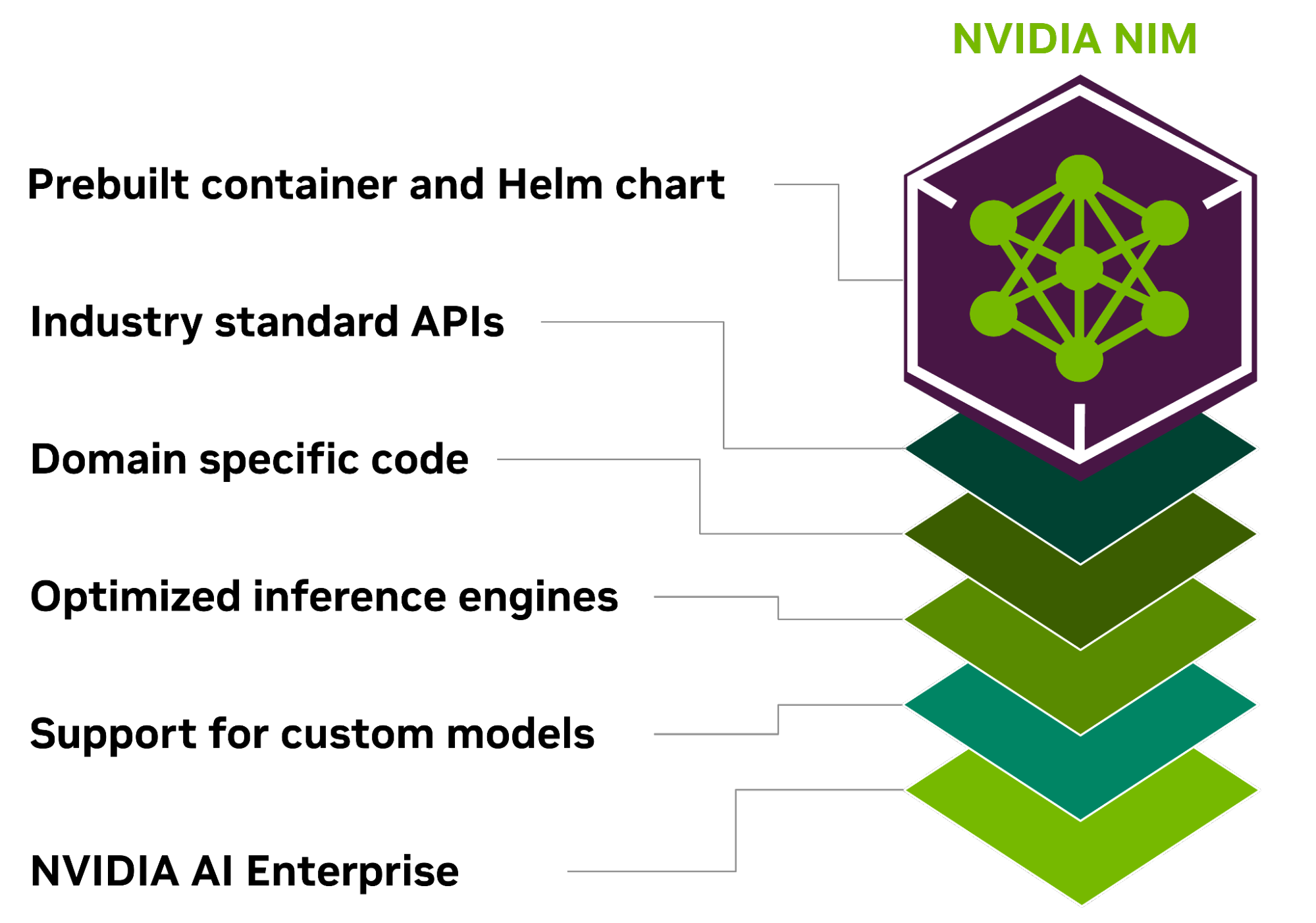

The core announcement is an availability update. Developers can now run inference on MiniMax's M2.7 model through NVIDIA's managed NIM endpoints. NVIDIA NIM is a set of cloud-native microservices designed to streamline the deployment of AI models, with performance optimized for NVIDIA GPUs. The key technical detail is support for OpenClaw, an open-source framework for large language model (LLM) inference and serving, and for its NVIDIA-optimized counterpart, NemoClaw. Developers who already use these frameworks for model deployment can integrate M2.7 into their stacks more easily.
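As a rough illustration of what calling such an endpoint might look like, the sketch below builds an OpenAI-style chat completion request using only the Python standard library. The announcement does not specify the API shape, so this rests on assumptions: the base URL is that of NVIDIA's hosted API catalog, and the model identifier `minimaxai/minimax-m2.7` is a hypothetical placeholder that should be checked against the actual catalog entry.

```python
import json
import urllib.request

# Assumptions (not stated in the announcement): BASE_URL is NVIDIA's hosted,
# OpenAI-compatible API, and MODEL_ID is a hypothetical placeholder.
BASE_URL = "https://integrate.api.nvidia.com/v1"
MODEL_ID = "minimaxai/minimax-m2.7"  # hypothetical; confirm in the catalog


def build_chat_request(prompt, api_key, model=MODEL_ID, base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
        "temperature": 0.2,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
        method="POST",
    )


def send(request, timeout=30):
    """Send the request and return the decoded JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping request construction separate from the network call makes it possible to inspect the payload and headers before any credentials leave the machine. A real integration would follow whatever MiniMax's technical guide specifies.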

Technical Details & Access

MiniMax directed users to two resources:

- A technical guide (https://t.co/XEjpd4jzHl), likely covering integration steps, API specifications, and performance benchmarks for M2.7 on the NIM platform.

- A link to start building (https://t.co/DtI1iBn7GP), which presumably leads to NVIDIA's API catalog or a dedicated landing page for the M2.7 NIM.

The move brings MiniMax's flagship model into the ecosystem around NVIDIA's AI Enterprise software platform. For developers, the primary benefit is operational: they can use NVIDIA's infrastructure for scalable, optimized inference without handling containerization and GPU optimization themselves.

The Competitive & Strategic Context

This is a strategic partnership play. For MiniMax, a Shanghai-based unicorn known for its text-to-video and LLM capabilities, gaining a distribution channel on NVIDIA's platform significantly boosts its visibility and accessibility to a global developer base. It represents a push for international adoption beyond its primary Chinese market.

For NVIDIA, adding MiniMax's model to its NIM catalog enriches the ecosystem, offering customers another high-performance LLM option alongside models from other leading AI labs. It reinforces NIM's role as a central hub for enterprise-grade AI inference.

The mention of OpenClaw support is particularly notable. OpenClaw has emerged as a critical open-source tool for LLM deployment, and explicit compatibility signals that MiniMax is prioritizing ease of integration for developers already standardized on this toolkit.

gentic.news Analysis

This deployment is a logical next step in MiniMax's expansion strategy following its massive $600 million funding round in mid-2025, which valued the company at over $4 billion. That capital injection was explicitly earmarked for scaling infrastructure and international growth. Partnering with NVIDIA to offer M2.7 via NIM is a direct execution of that plan, providing a turnkey path to global cloud availability without MiniMax needing to build and market its own inference service from scratch.

The move also aligns with a broader industry trend we covered in our analysis, "NVIDIA NIM Becomes the De Facto App Store for Enterprise AI Models," where major AI developers are using NVIDIA's platform as a primary distribution layer. By joining this ecosystem, MiniMax is competing for mindshare and usage alongside other NIM-hosted models, betting that its performance—particularly in multilingual and reasoning tasks as showcased in its research—will attract developers.

However, the announcement is light on new technical specifics about M2.7 itself. We have not seen new benchmark scores or a detailed comparison against the current frontier models available on similar platforms, like Claude 3.5 Sonnet or GPT-4o. The real test will be in adoption metrics and independent benchmarking now that the model is more readily accessible. For practitioners, the key question remains whether M2.7's performance-per-cost profile on the NIM platform makes it a compelling alternative to the established leaders in the space.
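One way to make "performance-per-cost" concrete is to divide the cost of a request by the fraction of tasks a model actually solves. The sketch below is a minimal illustration of that arithmetic only. The `ModelProfile` type and its fields are hypothetical, and no real prices or benchmark scores for M2.7 or any other model are implied.

```python
import math
from dataclasses import dataclass


@dataclass
class ModelProfile:
    """Hypothetical container; fill in with measured values, not guesses."""
    name: str
    accuracy: float      # fraction of evaluation tasks solved, 0..1
    input_price: float   # USD per 1M input tokens
    output_price: float  # USD per 1M output tokens


def cost_per_request(profile, input_tokens, output_tokens):
    """Token cost of one request in USD."""
    total = input_tokens * profile.input_price + output_tokens * profile.output_price
    return total / 1_000_000


def cost_per_solved_task(profile, input_tokens, output_tokens):
    """Expected spend per successfully solved task (lower is better)."""
    if profile.accuracy <= 0:
        return math.inf  # a model that solves nothing has unbounded cost
    return cost_per_request(profile, input_tokens, output_tokens) / profile.accuracy
```

Comparing `cost_per_solved_task` across candidates on the same evaluation set and token budget gives a single number to rank them by, though it ignores latency and throughput, which also matter in production.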

Frequently Asked Questions

What is MiniMax M2.7?

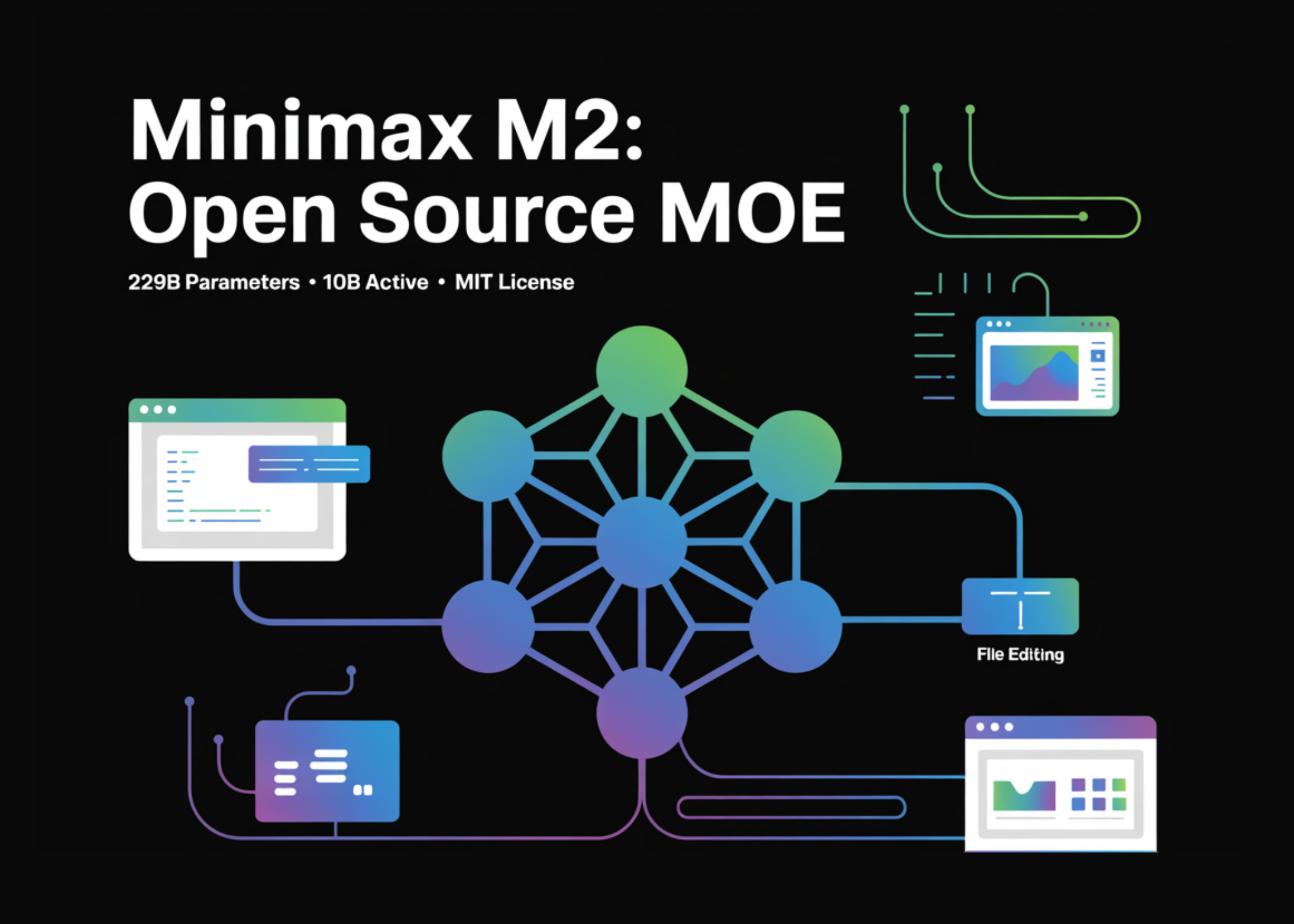

MiniMax M2.7 is a large language model developed by the Chinese AI company MiniMax. It is the successor to their previous M2 series models and is known for strong performance in reasoning and multilingual tasks. It is now deployable via NVIDIA's inference microservices.

What are NVIDIA NIM endpoints?

NVIDIA NIM (NVIDIA Inference Microservice) packages AI models as cloud-native, optimized containers that developers can deploy quickly on NVIDIA GPU infrastructure. NIM endpoints expose those models through a simplified inference API, while the service handles model optimization, scaling, and management.

What is OpenClaw and why does support for it matter?

OpenClaw is an open-source framework for serving and deploying large language models. Support for OpenClaw means developers who have already built their inference pipelines using this toolkit can integrate the MiniMax M2.7 model with minimal changes, significantly lowering the barrier to adoption.

How can I access and use the MiniMax M2.7 model on NVIDIA NIM?

You can access the model through NVIDIA's API catalog, using the link provided in MiniMax's announcement. You will likely need an NVIDIA cloud account or enterprise license. The technical guide should detail the API calls, authentication, and pricing for the M2.7 endpoint.
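Until the guide's details are confirmed, the following sketch shows two pieces most integrations need regardless of the exact API: reading a key from the environment rather than hard-coding it, and extracting the reply text from a response. It assumes an OpenAI-style chat completion response shape and an environment variable named `NVIDIA_API_KEY`. Both are assumptions, not details taken from the announcement.

```python
import os


def load_api_key(env_var="NVIDIA_API_KEY"):
    """Read the API key from the environment (variable name is an assumption)."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first")
    return key


def extract_reply(response):
    """Return the first message's text from an OpenAI-style response dict."""
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("response contains no choices")
    return choices[0]["message"]["content"]


if __name__ == "__main__":
    # Hand-written sample in the assumed response shape, not real model output.
    sample = {"choices": [{"message": {"role": "assistant", "content": "Hello"}}]}
    print(extract_reply(sample))
```

Failing loudly on a missing key or an empty `choices` list keeps configuration problems from surfacing later as confusing errors.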