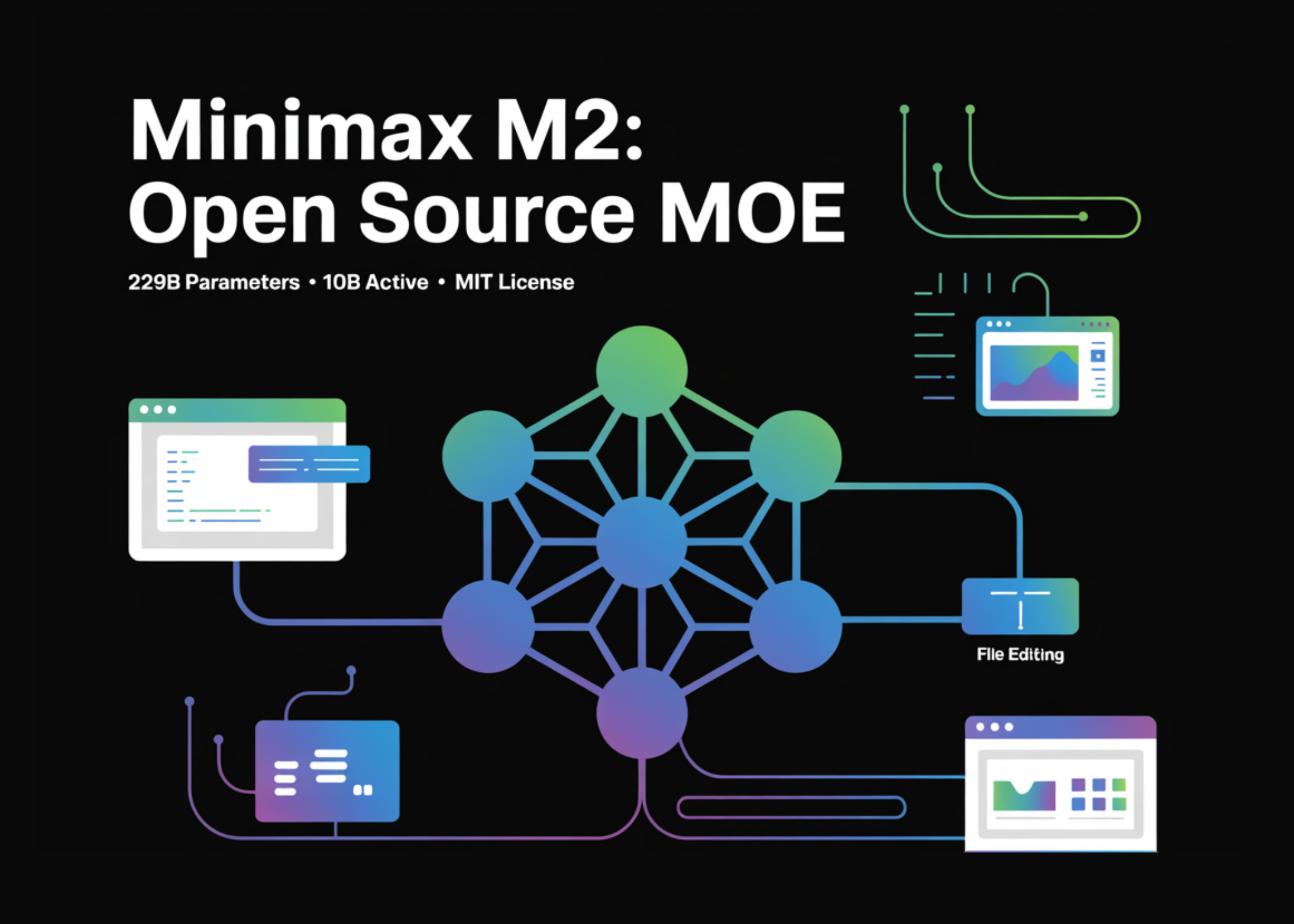

Chinese AI company MiniMax has released its M2.7 model under an open-source license. The announcement, made via a post on X, claims the model achieves state-of-the-art performance on two coding benchmarks: SWE-Pro (56.22%) and Terminal Bench 2 (57.0%). The model is now available on Hugging Face.

What Happened

On April 18, 2026, MiniMax announced the open-source release of its M2.7 model. The company provided two key benchmark scores to substantiate its "state-of-the-art" claim:

- SWE-Pro: 56.22%

- Terminal Bench 2: 57.0%

The model weights and associated code are hosted on the Hugging Face platform. The company also linked to a blog post with further details and its commercial API offering.

Context

MiniMax is a prominent Chinese AI startup known for its text-to-video models and large language models. The release of M2.7 continues the company's growing activity in open-source LLMs and positions it against other major players in that space.

SWE-Pro (likely shorthand for SWE-Bench Pro) and Terminal Bench 2 are benchmarks designed to evaluate a model's ability to perform complex, real-world software engineering tasks such as code generation, debugging, and terminal command execution.
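As a rough illustration of how a headline score such as 56.22% is typically derived, the sketch below computes a pass rate as resolved tasks divided by attempted tasks. The function name and the five sample outcomes are hypothetical, and the actual scoring harnesses for SWE-Pro and Terminal Bench 2 may filter or weight tasks differently.

```python
def resolve_rate(outcomes: list[bool]) -> float:
    """Return the percentage of tasks marked as resolved.

    Each entry in `outcomes` is True if the model's attempt passed the
    task's checks and False otherwise.
    """
    if not outcomes:
        raise ValueError("at least one task outcome is required")
    return 100.0 * sum(outcomes) / len(outcomes)


# Hypothetical run: five tasks, three of them resolved.
sample_outcomes = [True, False, True, True, False]
print(f"{resolve_rate(sample_outcomes):.2f}%")  # prints "60.00%"
```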

Technical Details & Availability

The model is available for download from its Hugging Face repository. The announcement does not spell out the licensing terms, architecture details (e.g., parameter count, training data, and methodology), or a full evaluation suite; these are expected to be covered in the accompanying blog post. The commercial MiniMax API offers a managed endpoint for the same model as an alternative to self-hosting.
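For readers who want to pull files from the repository, here is a minimal sketch. The repository ID `example-org/example-model` is a placeholder, not the real M2.7 repository name, which should be taken from the model's Hugging Face page. The first helper builds the Hub's standard `resolve` URL for a single file, which is handy for reading the license before fetching large weights. The second relies on the third-party `huggingface_hub` package and its `snapshot_download` function.

```python
from urllib.parse import quote

HF_BASE = "https://huggingface.co"


def hf_file_url(repo_id: str, filename: str, revision: str = "main") -> str:
    """Build the direct URL for one file in a Hugging Face model repository."""
    return (
        f"{HF_BASE}/{quote(repo_id)}/resolve/"
        f"{quote(revision, safe='')}/{quote(filename)}"
    )


def download_snapshot(repo_id: str, local_dir: str) -> str:
    """Download every file in the repository and return the local path.

    Requires `pip install huggingface_hub`; imported lazily so the URL
    helper above works without it.
    """
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=repo_id, local_dir=local_dir)


# Placeholder repository ID (an assumption, not the actual M2.7 repo).
REPO_ID = "example-org/example-model"
print(hf_file_url(REPO_ID, "README.md"))
```

Checking the repository's model card and license file first is a cheap way to confirm the terms before committing to a multi-gigabyte download.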

gentic.news Analysis

With this release, MiniMax enters the intensifying competition among open-source coding models. Publishing the weights does more than contribute to the community: it lets anyone test the company's performance claims directly. The claimed scores on SWE-Pro (56.22%) and Terminal Bench 2 (57.0%) are significant, but the announcement offers no detailed comparison with other coding models such as DeepSeek-Coder, Codestral, or CodeLlama, so the "SOTA" claim remains provisional until it is independently verified.

This release aligns with a broader trend we've covered, where AI labs are using open-source releases for strategic validation. It follows MiniMax's previous launch of its text-to-video model and increased fundraising activity, indicating a multi-front push to establish technical credibility. The timing is also notable, coming amid heated competition in AI developer tools. If the benchmark results hold up, M2.7 could become a serious candidate for integration into IDEs and CI/CD pipelines, challenging incumbents. The critical next step is seeing whether these scores translate into real-world developer productivity and how the model fares against the established leaders in a full, public benchmark suite.

Frequently Asked Questions

What is MiniMax M2.7?

MiniMax M2.7 is a large language model for code that the Chinese AI company MiniMax recently released as open source. The company claims it achieves state-of-the-art performance on the SWE-Pro and Terminal Bench 2 coding benchmarks.

How does MiniMax M2.7's performance compare to other coding models?

Based solely on MiniMax's announcement, M2.7 scores 56.22% on SWE-Pro and 57.0% on Terminal Bench 2. MiniMax describes these numbers as state-of-the-art, but the brief announcement does not include direct comparisons with models like DeepSeek-Coder-V2, Codestral, or CodeLlama 70B. Independent benchmarking will be required for a definitive ranking.
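One reason independent benchmarking matters is that a single percentage carries sampling uncertainty that depends on how many tasks the benchmark contains. The sketch below applies the textbook binomial standard error to the two reported scores. The task count of 500 is a hypothetical placeholder, not the real size of either benchmark, and the function name is illustrative.

```python
import math


def pass_rate_margin(rate_pct: float, n_tasks: int, z: float = 1.96) -> float:
    """Approximate margin of error, in percentage points, for a pass rate.

    Uses the normal approximation to the binomial distribution; z = 1.96
    corresponds to a roughly 95% confidence level.
    """
    if n_tasks <= 0:
        raise ValueError("n_tasks must be positive")
    p = rate_pct / 100.0
    return 100.0 * z * math.sqrt(p * (1.0 - p) / n_tasks)


N_TASKS = 500  # hypothetical task count, for illustration only
for name, score in [("SWE-Pro", 56.22), ("Terminal Bench 2", 57.0)]:
    margin = pass_rate_margin(score, N_TASKS)
    print(f"{name}: {score:.2f}% +/- {margin:.1f} points (assuming {N_TASKS} tasks)")
```

Under that assumption the margin comes to several percentage points, so a narrow lead over a competing model would not settle a ranking on its own.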

Is MiniMax M2.7 free to use?

The model is free to download: it has been released under what MiniMax describes as an open-source license and is available on Hugging Face. What you may do with it depends on the specific license terms (e.g., Apache 2.0, MIT, or a custom license), which should be verified on the model's repository. MiniMax also offers the model via a commercial API.

What are SWE-Pro and Terminal Bench 2?

SWE-Pro (likely shorthand for SWE-Bench Pro) and Terminal Bench 2 are benchmarks that evaluate a model's proficiency at real-world software engineering tasks. They typically involve solving GitHub issues, writing code from descriptions, executing terminal commands, and debugging. These tasks mimic a professional developer's workflow more closely than simpler code completion tests do.