A developer has demonstrated a practical application of an open-source frontier language model, using MiniMax's M2.7 to generate a complete, playable video game from a single text prompt.

Key Takeaways

- A developer used the open-source MiniMax M2.7 frontier model to generate a complete, playable desktop game from a text prompt.

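- The demo is a concrete example of end-to-end code generation for a creative application, although the exact prompt and the amount of human intervention were not disclosed.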

What Happened

On April 23, 2026, the AI company MiniMax shared a post on X showcasing a project by AtomicBot AI. The developer used the open-source MiniMax M2.7 model to turn a simple prompt into a fully functional game called "Flappy Lobster", a crustacean-themed clone of the popular mobile game Flappy Bird.

The process, as described by MiniMax, involved the model:

- Writing the code for the game based on the prompt.

- Saving the necessary files (code, assets) to a local directory.

- Producing a runnable game on the user's desktop.
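The description above does not say what tooling performed the file-saving step. As a rough illustration only, the sketch below assumes a hypothetical agent harness that receives the model's output as a mapping of relative file paths to file contents and writes them into a project folder, refusing any path that would escape it. The function name `write_project_files` and the mapping format are assumptions, not part of M2.7 or AtomicBot AI's setup.

```python
import tempfile
from pathlib import Path


def write_project_files(root: str, files: dict[str, str]) -> list[Path]:
    """Write generated files under `root`, rejecting paths that escape it.

    `files` maps relative paths (e.g. "src/main.py") to text contents.
    This format is a hypothetical stand-in for whatever a real harness uses.
    """
    base = Path(root).resolve()
    written = []
    for rel_path, contents in files.items():
        target = (base / rel_path).resolve()
        # Guard against absolute paths or "../" segments in model output.
        if not target.is_relative_to(base):
            raise ValueError(f"refusing to write outside project root: {rel_path}")
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(contents, encoding="utf-8")
        written.append(target)
    return written


if __name__ == "__main__":
    # Hypothetical model output: one code file and one placeholder asset note.
    generated = {
        "main.py": "print('Flappy Lobster placeholder')\n",
        "assets/README.txt": "Placeholder art goes here.\n",
    }
    with tempfile.TemporaryDirectory() as tmp:
        for path in write_project_files(tmp, generated):
            print(path.relative_to(Path(tmp).resolve()))
```

The path check matters because model output is untrusted text: without it, a malformed or adversarial file name could overwrite files outside the project folder. `Path.is_relative_to` requires Python 3.9 or later.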

MiniMax's commentary framed this as the ideal use case for open-source frontier models: putting powerful AI directly "in the hands of builders" for rapid prototyping and creative experimentation.

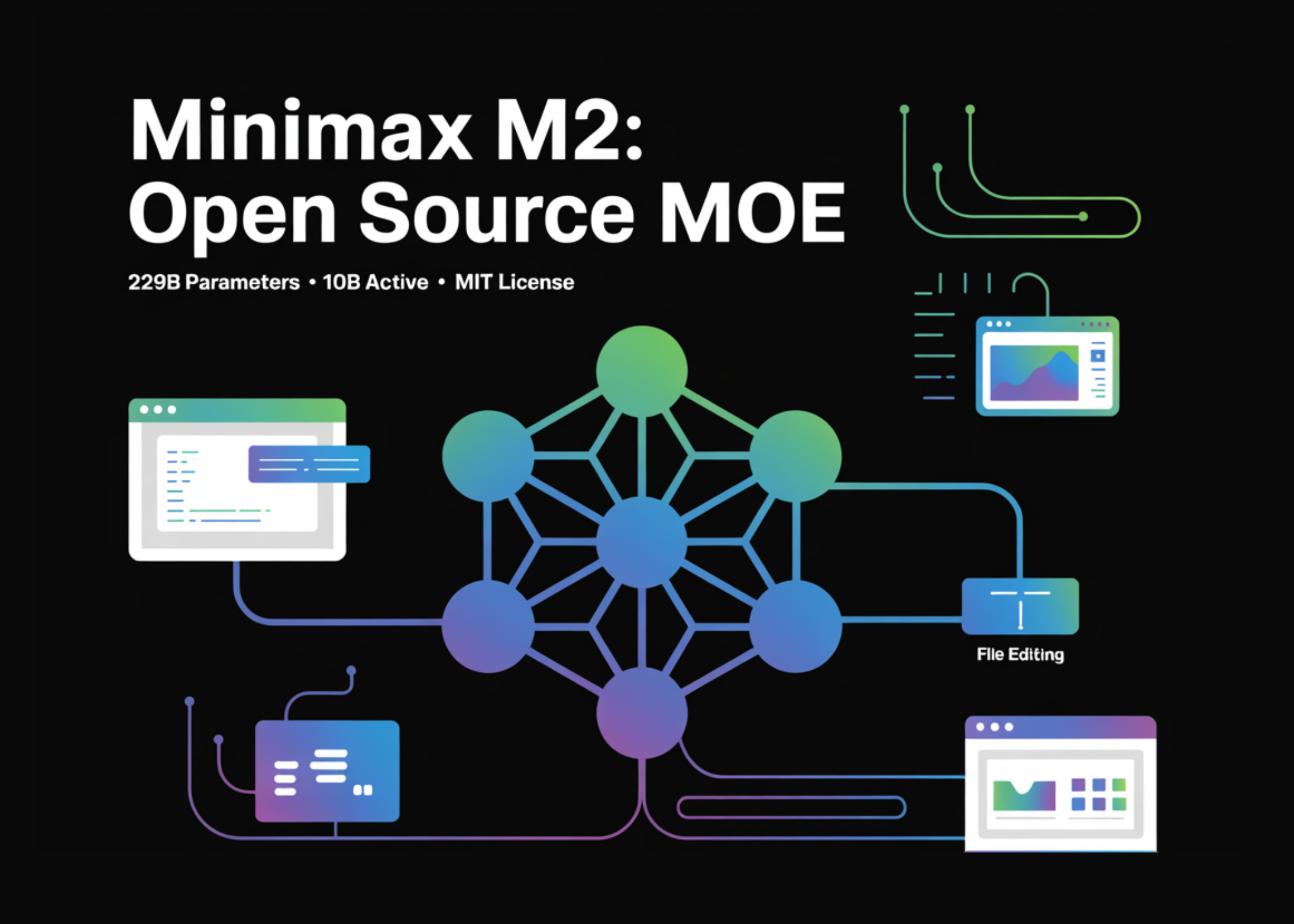

Context: The MiniMax M2.7 Model

The MiniMax M2.7 is a large language model released by the Chinese AI firm MiniMax. Positioned as a "frontier model," it is part of a competitive wave of open-source models designed to rival the capabilities of closed offerings from companies like OpenAI and Anthropic. Its release in late 2025 was notable for its strong performance on coding and reasoning benchmarks, making it a candidate for agentic and code-generation tasks.

This demonstration by AtomicBot AI is a tangible example of the model's advertised capabilities moving from benchmark scores to a functional, end-to-end creative tool.

What This Means in Practice

For developers and creators, this use case points to a shift from AI as a coding assistant (e.g., GitHub Copilot) toward AI as an autonomous prototyping engine. According to MiniMax's description, the model handled the entire pipeline from concept to executable, including non-trivial steps such as structuring the project, writing coherent game logic, and likely generating basic placeholder assets.

The "Flappy Lobster" example, while simple, suggests the model can understand a genre specification ("Flappy Bird"), apply a thematic twist ("Lobster"), and produce a cohesive software artifact. Capabilities like this could reduce the initial friction for game jams, educational projects, or proof-of-concept demos.

gentic.news Analysis

This demonstration is a strategic data point in the ongoing open-vs-closed AI model war. MiniMax, a company that has aggressively positioned its models against giants like OpenAI's o1 series and Google's Gemini, gains validation here not from a lab benchmark but from a third-party builder creating a shareable product. This aligns with a trend we noted in our coverage of the M2.7 release, where the emphasis was on its "practical reasoning" and agentic potential over pure knowledge-test performance.

The choice of game development as the demo domain is savvy. Game code requires a blend of logic, physics simulation, asset management, and real-time interaction, which makes it a more demanding integration test than generating a standalone algorithm or API wrapper. A successful demo here suggests robustness for other multi-file, multi-step software generation tasks.
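To make the "physics simulation and real-time interaction" point concrete, here is a minimal sketch of the core mechanic any Flappy Bird clone has to get right: gravity, a flap impulse, scrolling pipes, and collision. It is not the code M2.7 generated (that code was not published); the constants, class names, and the `step` function are hypothetical, rendering and input handling are omitted, and collision uses a simple box approximation of the player.

```python
from dataclasses import dataclass

# Hypothetical tuning constants in per-frame units; y grows downward (screen coords).
GRAVITY = 0.5        # added to vertical velocity every frame
FLAP_IMPULSE = -8.0  # velocity set when the player flaps (negative = upward)
PIPE_SPEED = 3.0     # pixels pipes scroll left per frame
GAP_HEIGHT = 120.0   # vertical size of the opening in each pipe pair
PIPE_WIDTH = 50.0


@dataclass
class Player:
    x: float
    y: float
    vy: float = 0.0
    radius: float = 12.0


@dataclass
class Pipe:
    x: float        # left edge of the pipe pair
    gap_top: float  # y-coordinate where the opening starts


def step(player: Player, pipes: list[Pipe], flap: bool, height: float) -> bool:
    """Advance one frame of simulation; return True if the player survives."""
    if flap:
        player.vy = FLAP_IMPULSE
    player.vy += GRAVITY
    player.y += player.vy
    for pipe in pipes:
        pipe.x -= PIPE_SPEED
    # Touching the ceiling or floor ends the run.
    if player.y - player.radius < 0 or player.y + player.radius > height:
        return False
    # Box approximation: treat the player as a square of side 2 * radius.
    for pipe in pipes:
        overlaps_x = pipe.x - player.radius < player.x < pipe.x + PIPE_WIDTH + player.radius
        inside_gap = (pipe.gap_top + player.radius
                      < player.y
                      < pipe.gap_top + GAP_HEIGHT - player.radius)
        if overlaps_x and not inside_gap:
            return False
    return True


if __name__ == "__main__":
    player = Player(x=80.0, y=200.0)
    pipes = [Pipe(x=300.0, gap_top=150.0)]
    frames, alive = 0, True
    while alive and frames < 200:
        # Naive autopilot: flap when falling below the middle of the gap.
        flap = player.vy > 0 and player.y > pipes[0].gap_top + GAP_HEIGHT / 2
        alive = step(player, pipes, flap, height=400.0)
        frames += 1
    print(f"survived {frames} frames, alive={alive}")
```

Even this toy version couples state updates, timing, and geometry in ways that a standalone function does not, which is why a full game is a reasonable stress test for multi-step code generation.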

However, key questions for practitioners remain unanswered by this showcase. The post does not detail the exact prompt used, the level of human intervention required to fix errors or refine output, the complexity ceiling for such game generation, or the model's performance on less derivative, more original game concepts. The next test for models like M2.7 will be moving from cloning known game mechanics to interpreting novel mechanic descriptions.

Frequently Asked Questions

What is the MiniMax M2.7 model?

The MiniMax M2.7 is a large language model developed by the Chinese AI company MiniMax and released as open-source in late 2025. It is a "frontier model" designed to be competitive with top-tier models from OpenAI and Anthropic, with particular strengths in coding, reasoning, and agentic tasks.

Can I use MiniMax M2.7 to generate my own games?

Yes, in principle. As an open-source model, M2.7 can be downloaded and run locally or via cloud services. However, successfully replicating a demo like "Flappy Lobster" requires technical setup, a suitable prompting interface, and likely some software engineering knowledge to configure the environment and debug any issues in the generated code.
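One common way to get a "suitable prompting interface" for a locally hosted model is to serve it behind an OpenAI-compatible chat completions endpoint, which servers such as vLLM provide. The sketch below assumes such a server at `http://localhost:8000/v1/chat/completions`; the model identifier `minimax-m2.7` and the system prompt are hypothetical placeholders, so substitute whatever your server actually reports. The `__main__` block only prints the request body and does not contact a server.

```python
import json
import urllib.request

# Assumptions: a local OpenAI-compatible server and a hypothetical model name.
ENDPOINT = "http://localhost:8000/v1/chat/completions"
MODEL_ID = "minimax-m2.7"  # hypothetical; use the name your server lists


def build_request(prompt: str, temperature: float = 0.2) -> dict:
    """Build an OpenAI-style chat completion request body."""
    return {
        "model": MODEL_ID,
        "messages": [
            # Illustrative system prompt, not the one used in the demo.
            {"role": "system", "content": "You write complete, runnable game projects."},
            {"role": "user", "content": prompt},
        ],
        "temperature": temperature,
    }


def ask_model(prompt: str) -> str:
    """POST the request to ENDPOINT and return the assistant's reply text."""
    body = json.dumps(build_request(prompt)).encode("utf-8")
    req = urllib.request.Request(
        ENDPOINT,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=300) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Dry run: show the request body without contacting a server.
    print(json.dumps(build_request("Make a Flappy Bird clone starring a lobster."), indent=2))
```

A raw chat call like this only returns text; turning that text into files on disk and running them is the job of an agent harness, which is where most of the setup and debugging effort described above tends to go.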

How does this compare to other AI code generators?

This demonstration goes beyond typical AI pair programmers like GitHub Copilot, which assist within existing files. M2.7 here acted as an autonomous agent, handling the full project lifecycle from a blank slate. It is more comparable to autonomous coding agents such as Devin from Cognition AI, which aim to complete entire software tasks from a single instruction. The open-source nature of M2.7 makes this capability more accessible for integration into custom workflows.

What are the limitations of AI game generation shown here?

The demo shows a clone of an extremely simple, well-understood game genre. Major limitations not addressed include: generating original game mechanics, creating complex art assets (not just placeholders), balancing game difficulty, writing compelling narrative, and optimizing performance. Current models are best at recreating known templates and prototypes, not at full, production-ready, original game development.