What Happened

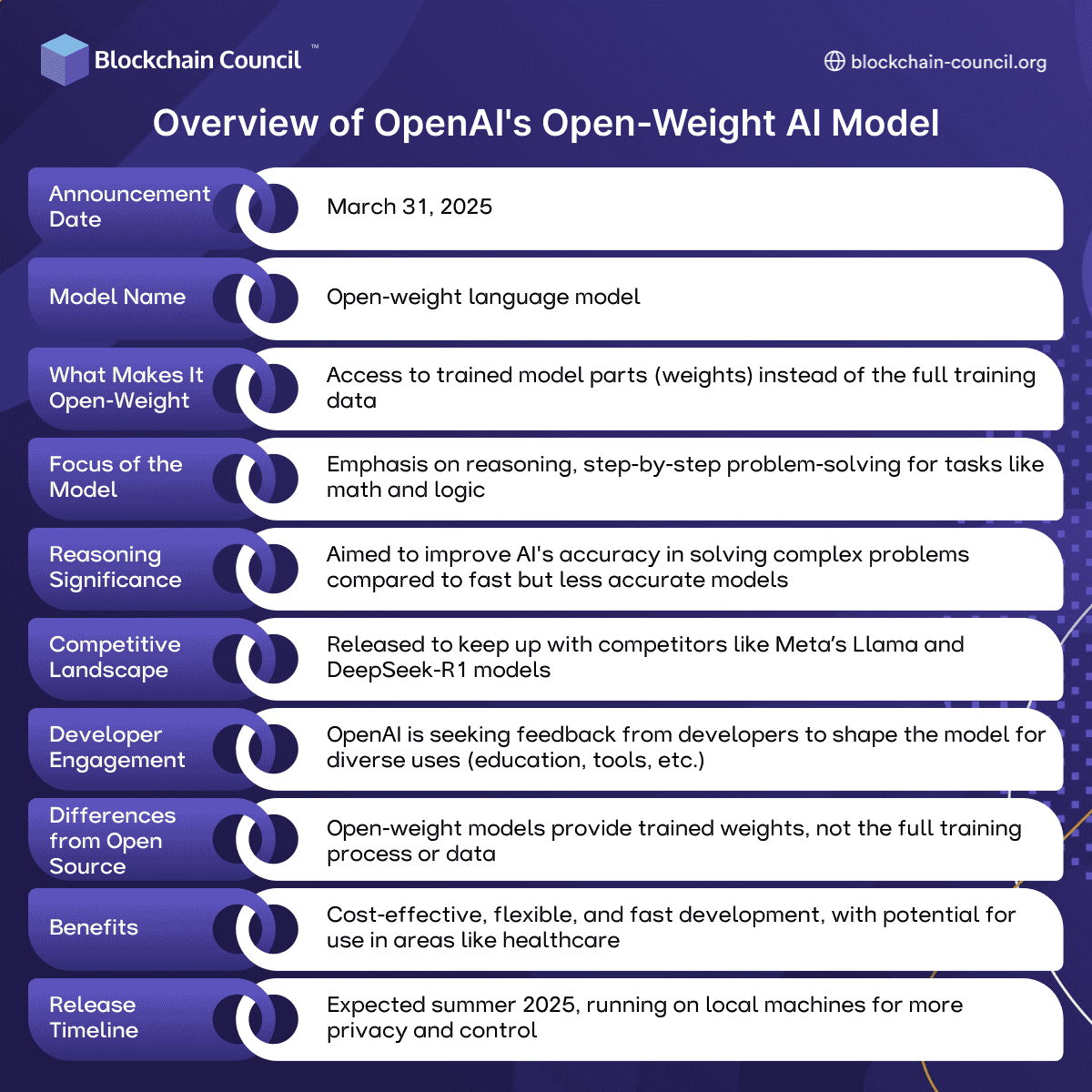

According to a post by user @kimmonismus on X, OpenAI is developing an "AI intern" with a target deployment date of September. The company's broader roadmap reportedly aims for a "full system" by 2028. The post states the project is "powered by advances in reasoning models and agent systems like Codex."

The post claims these existing tools "already show dramatic productivity gains, solving problems in days instead of weeks," but acknowledges they "still face reliability and safety challenges." It closes by saying OpenAI is "on this road to autonomous researchers."

Context

The concept of an "AI intern" or research assistant aligns with ongoing industry efforts to automate parts of the software development and research lifecycle. Agent systems, which can break down complex tasks, execute code, and iterate on solutions, have been a focus for OpenAI and others. Codex is the name OpenAI first gave to the code model behind early versions of GitHub Copilot and now uses for its coding agent; its mention suggests the initiative may build upon code generation and understanding capabilities.

The stated 2028 target for a full system implies a multi-year development timeline, moving from an initial assistive tool (the "intern") toward a more capable, autonomous research agent. The explicit note about reliability and safety challenges reflects known limitations in current AI agent systems, which can produce incorrect outputs or execute unsafe actions without robust oversight.

No official announcement, technical details, benchmarks, or specific model names beyond Codex are provided in the source material.