If you're using Claude Code for web automation tasks, you've probably experienced the latency of browser interactions through MCP servers. The standard @playwright/mcp server adds 100-200ms per action, which compounds to 20+ seconds of pure waiting during typical automation flows. Pilot MCP targets that overhead: its developer reports 41% lower wall time and roughly 6x less context usage.

What It Does — Architecture That Eliminates Latency

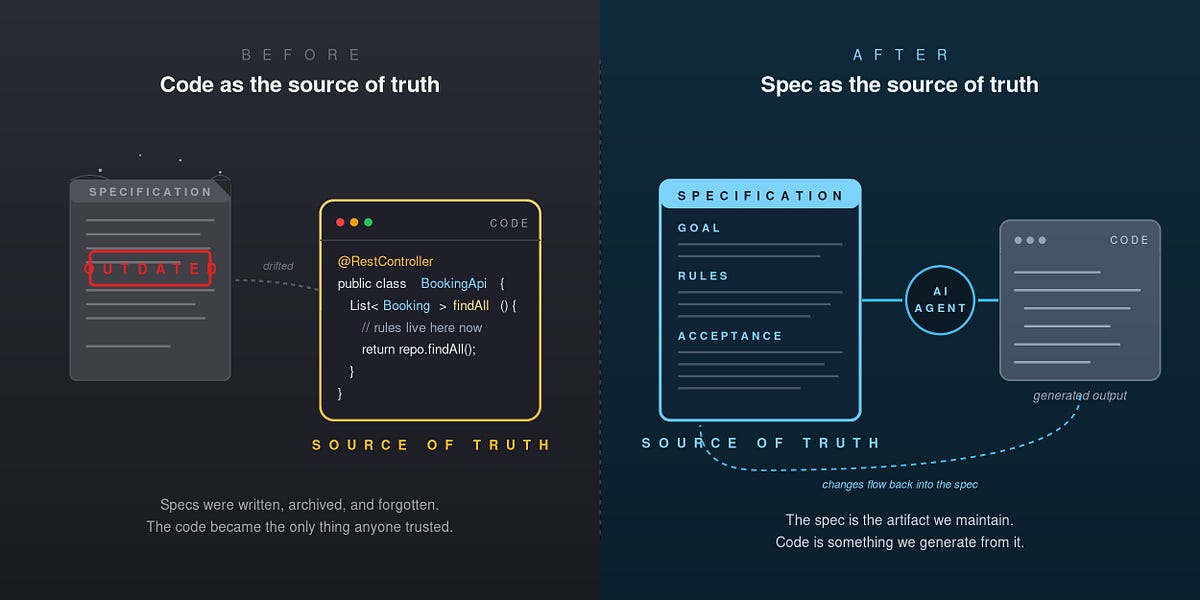

Most MCP browser servers run Playwright as a separate process communicating over HTTP or WebSocket. Every click, navigation, or form fill pays for serialization, network round-trip, and deserialization overhead.

Pilot takes a simpler approach: it runs Playwright in the same Node.js process as the MCP server. No HTTP layer means ~5ms per action instead of ~100-200ms. This architectural shift is what delivers the 41% wall time improvement documented in benchmarks using Claude Code as the runtime.
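To see how per-action overhead compounds, here is a back-of-the-envelope sketch. The action count of 150 is an illustrative assumption, not a figure from the benchmarks, and the per-action latencies are the approximate values quoted above (with 150ms taken as the midpoint of the 100-200ms range).

```typescript
// Rough estimate of transport overhead across one automation flow.
// All inputs are illustrative; nothing is measured here.
function transportOverheadMs(actions: number, perActionMs: number): number {
  return actions * perActionMs;
}

const actions = 150; // assumed number of browser actions in one flow
const outOfProcess = transportOverheadMs(actions, 150); // midpoint of ~100-200ms
const inProcess = transportOverheadMs(actions, 5); // ~5ms per action

console.log(
  `out-of-process: ${outOfProcess / 1000}s, in-process: ${inProcess / 1000}s`
);
```

Under these assumptions the out-of-process transport costs 22.5s of waiting against 0.75s in-process, which is the scale of difference the "20+ seconds" figure describes.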

The Context Size Problem — Solved

Here's where Pilot really shines for Claude Code users: context management. The standard Playwright MCP dumps a full page snapshot on every navigate() call — typically 58K characters. Pilot returns only ~1K characters by default (the top 20 interactive elements).

On a 2-page task, the developer reports 116K characters vs 11K entering Claude's context window, which the project describes as 6x less data before the model generates its first token. Less input generally means faster responses and lower token costs.
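The idea of returning only the top interactive elements can be sketched as follows. This is not Pilot's actual implementation: the `SnapshotElement` shape, the `compactSnapshot` name, the output format, and the choice to rank by document order are all assumptions made for illustration.

```typescript
// Hypothetical element shape; Pilot's real snapshot format may differ.
interface SnapshotElement {
  ref: string; // short handle the agent can use to target the element
  role: string; // e.g. "button", "link", "textbox"
  name: string; // accessible name or visible label
  interactive: boolean;
}

// Keep only the first `limit` interactive elements, in document order
// (an assumed ranking), and render each one as a single short line.
function compactSnapshot(elements: SnapshotElement[], limit = 20): string {
  return elements
    .filter((el) => el.interactive)
    .slice(0, limit)
    .map((el) => `[${el.ref}] ${el.role} "${el.name}"`)
    .join("\n");
}
```

A design like this bounds the output by the element limit rather than by page size, so a very large page still yields a small tool result.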

Tool Profiles — Right-Sizing for Your Agent

Pilot ships with 58 tools, but LLM tool-selection accuracy is reported to degrade once an agent is offered more than roughly 30 tools. Pilot addresses this with configurable profiles:

- core (9 tools): navigate, snapshot, click, fill, type, press_key, wait, screenshot, snapshot_diff

- standard (28 tools): adds tabs, scroll, hover, drag, iframes, page reading

- full (58 tools): everything

Set PILOT_PROFILE=standard and Claude only sees 28 tools, improving its tool selection accuracy.
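One way such profile filtering could work is sketched below. It assumes each profile includes the tools of the smaller ones, as the word "adds" in the list above suggests. The `ToolDef` shape and `toolsForProfile` function are hypothetical, and only the nine core tool names come from the list above; the other two entries are placeholders, not real Pilot tools.

```typescript
type Profile = "core" | "standard" | "full";

// Ordered smallest to largest; each profile includes the ones before it.
const PROFILE_ORDER: Profile[] = ["core", "standard", "full"];

interface ToolDef {
  name: string;
  profile: Profile; // smallest profile that exposes this tool
}

// Return the names of the tools visible under the requested profile.
function toolsForProfile(tools: ToolDef[], requested: string): string[] {
  const level = PROFILE_ORDER.indexOf(requested as Profile);
  if (level === -1) {
    throw new Error(`Unknown profile: ${requested}`);
  }
  return tools
    .filter((t) => PROFILE_ORDER.indexOf(t.profile) <= level)
    .map((t) => t.name);
}

const coreNames = [
  "navigate", "snapshot", "click", "fill", "type",
  "press_key", "wait", "screenshot", "snapshot_diff",
];

const registry: ToolDef[] = [
  ...coreNames.map((name): ToolDef => ({ name, profile: "core" })),
  { name: "example_standard_tool", profile: "standard" }, // placeholder
  { name: "example_full_tool", profile: "full" }, // placeholder
];
```

With this registry, `toolsForProfile(registry, "core")` returns just the nine core names, and each larger profile returns a superset of the previous one.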

Features That Actually Matter for Daily Use

Cookie Import: Pilot decrypts cookies directly from Chrome, Arc, or Brave via macOS Keychain. Your Claude Code agent is immediately logged into whatever you use daily — no manual authentication flows.

Handoff/Resume: Hit a CAPTCHA? pilot_handoff opens a visible Chrome window with your full session. Solve it manually, then pilot_resume picks up where you left off.

Iframe Support: While @playwright/mcp has iframe support marked NOT_PLANNED, Pilot has full list/switch/interact support.

Setup — 2-Minute Drop-In Replacement

    npx pilot-mcp
    npx playwright install chromium

Add to your .mcp.json:

    {
      "mcpServers": {
        "pilot": {
          "command": "npx",
          "args": ["-y", "pilot-mcp"]
        }
      }
    }

That's it. Pilot uses the same MCP interface as @playwright/mcp, so all your existing Claude Code prompts and workflows continue working — just faster.
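If you also want to pin a tool profile, one option is to pass PILOT_PROFILE through the server entry's `env` map, which Claude Code's .mcp.json format supports. The helper below is a hypothetical sketch for generating that entry; the `withPilot` name and the interfaces are illustrative, and it assumes Pilot reads PILOT_PROFILE from its process environment.

```typescript
interface McpServerEntry {
  command: string;
  args: string[];
  env?: Record<string, string>;
}

interface McpConfig {
  mcpServers: Record<string, McpServerEntry>;
}

// Return a copy of `config` with a "pilot" entry added or replaced.
// Other servers are preserved and the input object is not mutated.
function withPilot(config: McpConfig, profile?: string): McpConfig {
  const entry: McpServerEntry = { command: "npx", args: ["-y", "pilot-mcp"] };
  if (profile !== undefined) {
    entry.env = { PILOT_PROFILE: profile };
  }
  return { ...config, mcpServers: { ...config.mcpServers, pilot: entry } };
}

const updated = withPilot({ mcpServers: {} }, "standard");
console.log(JSON.stringify(updated, null, 2));
```

The printed JSON can be pasted into .mcp.json. Omitting the second argument produces the same entry as the setup snippet above, without an `env` block.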

Benchmarks That Matter

From the developer's tests with Claude Code:

- Wall time: 25s vs 43s (41% faster)

- Tool result size: 5,230 chars vs 9,165 chars (43% smaller)

- Cost/task: $0.107 vs $0.124 (13% cheaper)

- Success rate: 5/5 vs 4/5
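As a sanity check on the percentages, the relative reductions can be recomputed from the rounded figures in the list. This sketch uses only the numbers quoted above; the `percentReduction` helper is illustrative.

```typescript
// Relative reduction of `pilot` versus `baseline`, as a percentage.
function percentReduction(baseline: number, pilot: number): number {
  return ((baseline - pilot) / baseline) * 100;
}

const wallTime = percentReduction(43, 25); // seconds
const resultSize = percentReduction(9165, 5230); // characters
const cost = percentReduction(0.124, 0.107); // dollars per task

console.log(
  `wall time: ${wallTime.toFixed(1)}%, ` +
    `result size: ${resultSize.toFixed(1)}%, ` +
    `cost: ${cost.toFixed(1)}%`
);
```

The rounded inputs give roughly 41.9%, 42.9%, and 13.7%, each within about a point of the reported figures. The small gaps are presumably because the published percentages were computed from unrounded measurements.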

When To Use It

Replace @playwright/mcp with Pilot when:

- You're automating multi-step web workflows with Claude Code

- You need faster iteration during development

- You want Claude to spend less time waiting and more time reasoning

- You're hitting context limits with large page snapshots

- You need seamless authentication with your daily browser cookies

GitHub: github.com/TacosyHorchata/Pilot | npm: pilot-mcp | Version: 0.3.0 | License: MIT