Key Takeaways

- An article from Towards AI details six production-ready patterns for creating Claude AI agents that adhere to business rules.

- This addresses the core enterprise challenge of making LLMs predictable and compliant, moving beyond prototypes to reliable systems.

What Happened

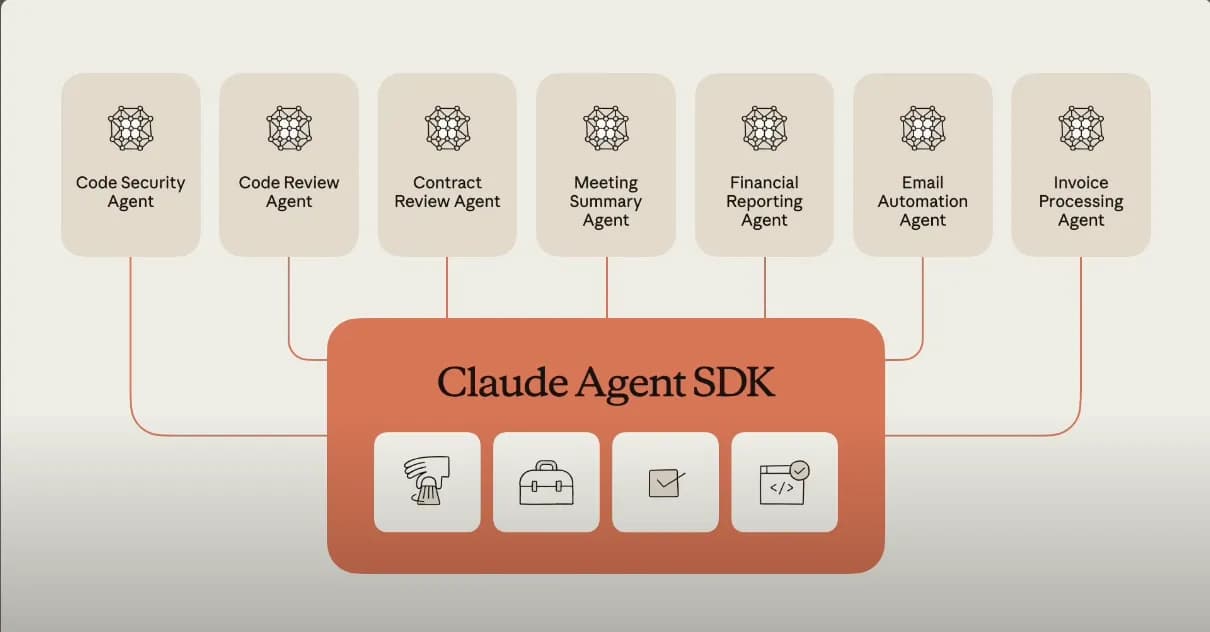

A new technical guide, published on Towards AI, outlines six specific architectural patterns for building "Claude Certified Architect"-ready agents. The central thesis is that while large language models like Claude are powerful, they are inherently unpredictable. To deploy them in business-critical production environments—where cost, brand safety, and regulatory compliance are non-negotiable—developers must architect systems that enforce business rule compliance. The article promises a move beyond simple prompt engineering to structured, repeatable patterns that make LLMs "actually obey" the rules.

Technical Details: The Six Patterns

The source material positions these patterns as essential for effective LLM governance. While the full article is behind a paywall, the summary suggests the patterns revolve around constraining and guiding the agent's behavior through system design rather than hoping the base model gets it right. Patterns commonly used in this domain, referred to below by their position in this list (Pattern 1 through Pattern 6), include:

- Guardrail & Validation Layers: Implementing a separate system that checks the agent's outputs or planned actions against a predefined rulebook before execution or delivery.

- Orchestrated Multi-Agent Workflows: Decomposing a complex task into smaller steps, each handled by a specialized agent with a narrow, rule-bound mandate, with a supervisor agent managing the flow.

- Explicit State Machines: Designing the agent interaction as a finite-state machine, where the LLM's role is to navigate between predefined states based on business logic, not to invent the process.

- Constrained Action Spaces: Instead of giving the agent free-form text generation for actions, presenting it with a strict, structured list of possible actions (API calls, database queries) it can take, drastically reducing hallucination.

- Retrieval-Augmented Governance (RAG for Rules): Dynamically providing the agent with the exact business rules, policy documents, or compliance guidelines relevant to the current query from a verified source, ensuring its responses are grounded in authority.

- Human-in-the-Loop Checkpoints: Building mandatory approval steps into automated workflows for high-stakes decisions (e.g., discount approvals, sensitive customer communications).
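A constrained action space and a guardrail layer can be sketched together in a few lines of Python. This is a minimal illustration under stated assumptions, not the article's implementation: the action names, the `ALLOWED_ACTIONS` table, `RuleViolation`, and the in-stock check are all hypothetical, and the model's output is assumed to arrive as a JSON string.

```python
import json
from dataclasses import dataclass

# Hypothetical whitelist: action name -> exact set of argument names it requires.
ALLOWED_ACTIONS = {
    "add_to_cart": {"sku", "quantity"},
    "check_store_availability": {"sku", "store_id"},
}


class RuleViolation(Exception):
    """Raised when a proposed action breaks the schema or a business rule."""


@dataclass(frozen=True)
class Action:
    name: str
    args: dict


def parse_action(model_output: str) -> Action:
    """Constrained action space: accept only whitelisted, well-formed actions."""
    try:
        payload = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise RuleViolation(f"not valid JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise RuleViolation("expected a JSON object")
    name, args = payload.get("action"), payload.get("args")
    if not isinstance(name, str) or name not in ALLOWED_ACTIONS:
        raise RuleViolation(f"action not allowed: {name!r}")
    if not isinstance(args, dict) or set(args) != ALLOWED_ACTIONS[name]:
        raise RuleViolation(f"wrong arguments for {name}")
    return Action(name, args)


def validate(action: Action, in_stock: set) -> Action:
    """Guardrail layer: business rules checked before anything is executed."""
    if action.name == "add_to_cart":
        sku, quantity = action.args["sku"], action.args["quantity"]
        if type(quantity) is not int or quantity < 1:
            raise RuleViolation("quantity must be a positive integer")
        if not isinstance(sku, str) or sku not in in_stock:
            raise RuleViolation(f"{sku!r} is not in stock")
    return action
```

In this sketch the model never executes anything itself. It proposes an action as text, and the surrounding code decides whether that proposal is well-formed and permitted.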

The goal of these patterns is to shift reliability from the model's weights to the system's architecture, creating a "Claude Certified" agent that an organization can trust.
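One way to picture reliability living in the architecture rather than the weights is an explicit state machine. The sketch below is an assumption-laden illustration: the states, the `TRANSITIONS` table, and the `step` function are hypothetical names for a return-request flow, and the model is assumed to propose the next state as a plain string.

```python
from enum import Enum


class State(Enum):
    START = "start"
    ELIGIBILITY_CHECKED = "eligibility_checked"
    AWAITING_APPROVAL = "awaiting_approval"
    RESOLVED = "resolved"
    ESCALATED = "escalated"


# Hypothetical transition table: the business process, fixed in code.
TRANSITIONS = {
    State.START: {State.ELIGIBILITY_CHECKED, State.ESCALATED},
    State.ELIGIBILITY_CHECKED: {
        State.AWAITING_APPROVAL,
        State.RESOLVED,
        State.ESCALATED,
    },
    State.AWAITING_APPROVAL: {State.RESOLVED, State.ESCALATED},
    State.RESOLVED: set(),   # terminal
    State.ESCALATED: set(),  # terminal
}


def step(current: State, proposed: str) -> State:
    """Apply the model's proposed next state only if the table permits it."""
    if not TRANSITIONS[current]:
        return current  # terminal states never change
    try:
        target = State(proposed)
    except ValueError:
        return State.ESCALATED  # the model named a state that does not exist
    if target in TRANSITIONS[current]:
        return target
    return State.ESCALATED  # the state exists but is not reachable from here
```

The model can choose among permitted transitions, but it cannot skip a step or invent one. Any proposal outside the table routes the case to a human in this sketch.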

Retail & Luxury Implications

For retail and luxury, where brand voice, pricing integrity, customer privacy, and complex promotion logic are paramount, these patterns are not just technical exercises—they are the prerequisite for any serious AI deployment.

- Personal Shopping Assistants: An agent combining a guardrail and validation layer (Pattern 1) with a constrained action space (Pattern 4) can provide styling advice while strictly adhering to brand guidelines (never suggesting mismatched logos) and inventory rules (only showing in-stock items). It can execute actions like "add to cart" or "check store availability" without inventing false options.

- Dynamic Pricing & Promotion Engines: An agent that combines rule retrieval (Pattern 5) with human-in-the-loop checkpoints (Pattern 6) can recommend pricing adjustments or generate promotional copy, but its decisions are constrained by a retrieved rule set (e.g., "never discount heritage items below X%") and may require manager approval for deviations beyond a threshold.

- Customer Service Resolution: A multi-agent workflow (Pattern 2) could handle a return request: Agent A retrieves the customer's purchase history and policy, Agent B validates eligibility, and Agent C generates the compliant response and initiates the logistics ticket—all without violating GDPR or making unauthorized promises.

- Content Generation for Marketing: An agent combining a guardrail and validation layer (Pattern 1) with rule retrieval (Pattern 5) can draft product descriptions or email campaigns in the brand's tone of voice, ensuring all claims are substantiated by retrieved product specs and compliance documents.

The gap between a generic LLM and a production agent is the business rule layer. These patterns provide a blueprint for building that layer, transforming Claude from a creative writer into a governed employee.
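As a rough illustration of such a business rule layer, the pricing scenario above could be sketched as a retrieved rule plus an approval threshold. Everything here is hypothetical: the `DiscountRule` fields, the category names, and the percentages are invented for the example (the source leaves the actual threshold unspecified), and the rule is read as a cap on the discount percentage, which is an assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVE = "approve"
    NEEDS_MANAGER = "needs_manager"
    REJECT = "reject"


@dataclass(frozen=True)
class DiscountRule:
    max_auto_pct: int  # largest discount applied without human review
    max_pct: int       # hard ceiling that no approval can override


# Illustrative values only; a real system would retrieve these from a
# verified policy source rather than hard-code them.
RULES = {
    "heritage": DiscountRule(max_auto_pct=0, max_pct=10),
    "seasonal": DiscountRule(max_auto_pct=20, max_pct=40),
}


def retrieve_rule(category: str) -> Optional[DiscountRule]:
    """Stand-in for rule retrieval (Pattern 5)."""
    return RULES.get(category)


def review_discount(category: str, proposed_pct: int) -> Decision:
    """Check an agent's proposed discount against the retrieved rule."""
    rule = retrieve_rule(category)
    if rule is None:
        return Decision.NEEDS_MANAGER  # no rule found: a human decides
    if proposed_pct < 0 or proposed_pct > rule.max_pct:
        return Decision.REJECT
    if proposed_pct > rule.max_auto_pct:
        return Decision.NEEDS_MANAGER  # human-in-the-loop checkpoint (Pattern 6)
    return Decision.APPROVE
```

With these made-up numbers, `review_discount("seasonal", 15)` is approved automatically, while any non-zero discount on a heritage item is either sent to a manager or rejected.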

Implementation Approach

Implementing these patterns requires a shift from pure AI engineering to software and systems engineering with AI components.

- Rule Formalization: The first and most critical step is translating implicit business knowledge ("we don't discount classic bags") into explicit, machine-readable rules. This often involves close collaboration with legal, marketing, and merchandising teams.

- Architecture Selection: Choose the pattern(s) based on the use case's risk profile and complexity. A high-volume, low-risk task like tagging products might use a simple guardrail. A high-value customer interaction might require a full state machine with human checkpoints.

- Tooling Integration: Claude Agents and tools like Claude Code are designed for this kind of integration (our Knowledge Graph data shows intense weekly activity around Claude Code, with 70 mentions). Claude Code's direct shell and filesystem access, especially with its recent Model Context Protocol (MCP) integrations, allows agents to interact with real business systems (ERPs, CRMs, PIMs) within defined boundaries.

- Testing & Validation: Develop rigorous test suites that validate the agent against edge-case business rules. This is where the "certified" aspect comes in—the system must be auditable.
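The first and last steps above, rule formalization and testing, can be sketched together. This is a hedged example: the rule schema (`when` / `forbid`), the rule id `PRICE-001`, and the test cases are hypothetical, chosen only to show how "we don't discount classic bags" might become data that a test suite can audit.

```python
import json

# Hypothetical rulebook, e.g. a file reviewed by legal and merchandising teams.
RULEBOOK_JSON = """
[
  {
    "id": "PRICE-001",
    "description": "Classic bags are never discounted",
    "when": {"category": "classic_bag"},
    "forbid": {"field": "discount_pct", "greater_than": 0}
  }
]
"""


def load_rules(text: str) -> list:
    return json.loads(text)


def check(rules: list, proposal: dict) -> list:
    """Return the ids of every rule the proposal violates (empty = compliant)."""
    violations = []
    for rule in rules:
        applies = all(proposal.get(k) == v for k, v in rule["when"].items())
        forbid = rule["forbid"]
        value = proposal.get(forbid["field"], 0)
        if applies and value > forbid["greater_than"]:
            violations.append(rule["id"])
    return violations


# Table-driven edge cases: (agent proposal, expected violated rule ids).
CASES = [
    ({"category": "classic_bag", "discount_pct": 10}, ["PRICE-001"]),
    ({"category": "classic_bag", "discount_pct": 0}, []),
    ({"category": "classic_bag"}, []),
    ({"category": "scarf", "discount_pct": 30}, []),
]


def run_cases(rules: list) -> list:
    """Return the cases whose actual violations differ from the expected ones."""
    return [
        (proposal, expected, check(rules, proposal))
        for proposal, expected in CASES
        if check(rules, proposal) != expected
    ]
```

Because each violation carries a rule id, a failing case points back to a specific, human-readable rule, which is one plausible way to make the system auditable.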

Governance & Risk Assessment

Maturity Level: High. The concepts of guardrails, constrained actions, and human-in-the-loop are well-established in enterprise software. Applying them to LLM agents is a logical and necessary evolution.

Primary Risks & Mitigations:

- Rule Gaps: An incomplete rule set is the biggest risk. Mitigate it with exhaustive discovery processes and by designing agents to default to a "safe state" (e.g., escalate to a human) when rules are unclear.

- System Complexity: Over-engineering can make the agent brittle. Start with the simplest pattern that manages the key risk.

- Latency & Cost: Adding validation layers and multi-agent coordination increases API calls and latency. This must be balanced against the cost of compliance failures.

- Vendor Dependence: Building on Anthropic's stack (Claude, Claude Agent, Claude Code) creates lock-in. However, the architectural patterns themselves are vendor-agnostic and can be adapted to other model providers.
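The "safe state" mitigation for rule gaps can be sketched as a thin wrapper around any handler. This is an illustrative assumption rather than a prescribed design: `with_safe_state`, `Outcome`, and the sample `POLICY` text are hypothetical, and the wrapper contains no vendor-specific code.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Outcome:
    status: str  # "answered" or "escalated"
    detail: str


def with_safe_state(
    handler: Callable[[str], Optional[str]]
) -> Callable[[str], Outcome]:
    """Wrap a handler so a missing rule or any error escalates to a human."""

    def guarded(request: str) -> Outcome:
        try:
            result = handler(request)
        except Exception as exc:  # any failure falls back to the safe state
            return Outcome("escalated", f"handler error: {exc}")
        if result is None:
            return Outcome("escalated", "no applicable rule")
        return Outcome("answered", result)

    return guarded


# Hypothetical policy store with invented wording, for illustration only.
POLICY = {"return_window": "Returns are accepted within 30 days."}


def answer_from_policy(topic: str) -> Optional[str]:
    """Return the policy text for a topic, or None when no rule covers it."""
    return POLICY.get(topic)


guarded_answer = with_safe_state(answer_from_policy)
```

Here `guarded_answer("return_window")` is answered from policy, while a topic with no rule, such as `guarded_answer("price_match")`, comes back with status `"escalated"` instead of an improvised reply.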

gentic.news Analysis

This focus on production-ready, rule-compliant agents is a direct response to the market's maturation. As our Knowledge Graph shows, Claude Code has been a trending entity, appearing in 70 articles this week alone, with the community rapidly sharing best practices (evidenced by the open-source 'claude-code-best-practice' repo hitting 19.7K stars). The industry is moving past fascination with raw capability to the hard work of integration and control.

The timing is significant. This follows Anthropic's recent milestone of hitting a $3.4B run rate, as we covered on April 13th. To serve enterprise clients at that scale—especially in regulated industries like luxury retail—Anthropic must provide not just powerful models but the frameworks to tame them. The "Claude Certified Architect" concept is a strategic move to build trust and a moat around their ecosystem.

Furthermore, this aligns with a trend we've observed in our coverage: the rise of specialized tools for managing AI workflows. For instance, our April 13th article on "AgentsView 0.22" highlighted a tool built for monitoring and optimizing Claude agent usage at scale. The pattern is clear: the market is layering observability, governance, and efficiency tools on top of the core AI models, which is exactly what these six architectural patterns enable.

For retail AI leaders, the message is to invest in AI systems engineering. The competitive advantage will not come from having access to Claude Opus 4.6 or GPT-5, but from how expertly you can architect those models to embody your unique business rules and brand ethos. The patterns outlined here are the foundational curriculum for that discipline.