Tencent's Hunyuan AI lab has announced the open-source release of HY-World 2.0, a multimodal world model designed for generating, reconstructing, and simulating interactive 3D environments. The announcement was made via the lab's official social media account and shared by AI researcher Rohan Paul.

Key Takeaways

- Tencent's Hunyuan AI lab has open-sourced HY-World 2.0, a multimodal world model capable of generating, reconstructing, and simulating interactive 3D scenes.

- The release gives 3D content creators and embodied AI researchers a freely available tool for generating, reconstructing, and simulating 3D environments.

What Happened

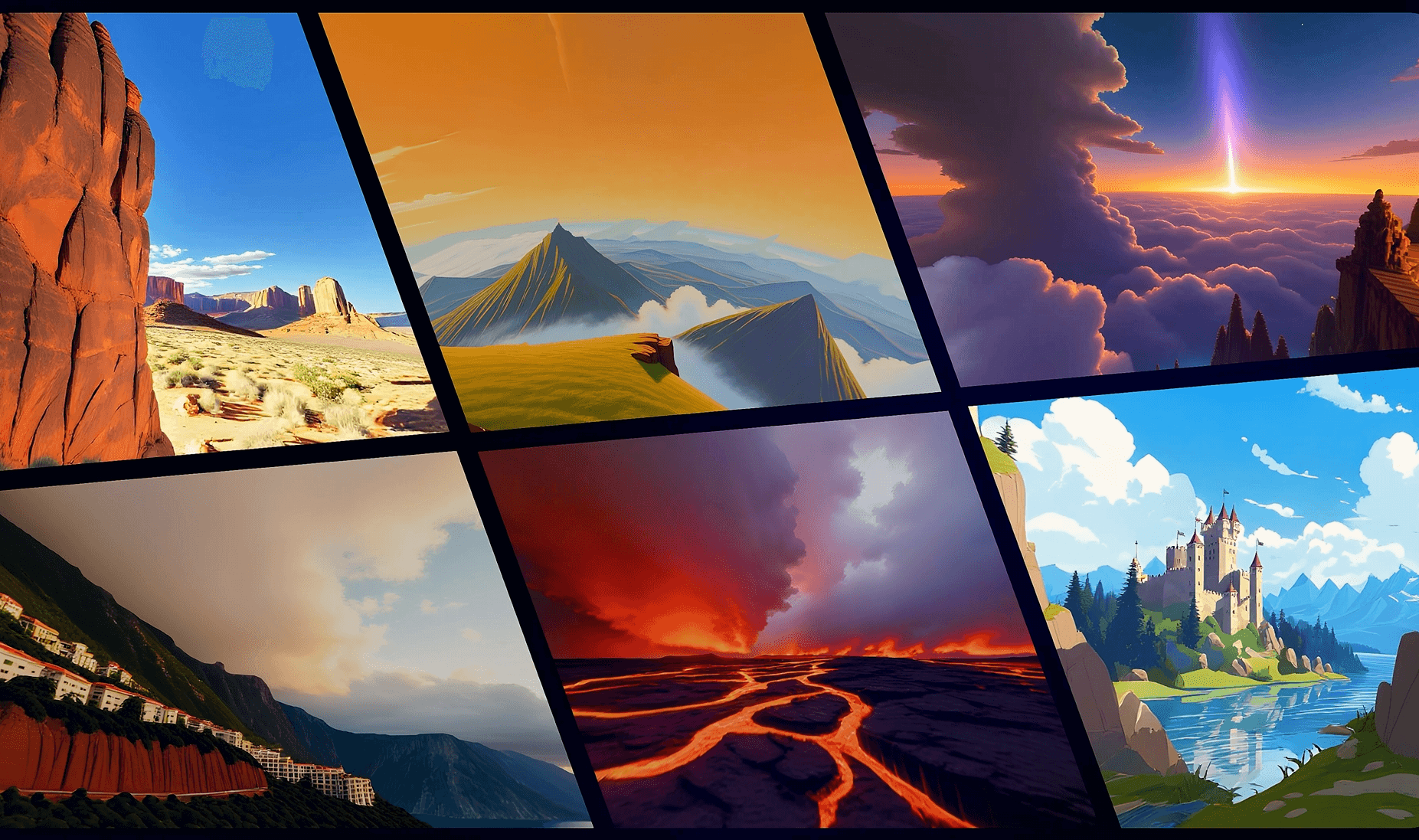

HY-World 2.0 operates on multimodal inputs (likely text and images, and possibly other data) to create and manipulate 3D worlds. The core capabilities highlighted are:

- Generation: Creating 3D scenes from prompts or other inputs.

- Reconstruction: Building 3D representations from existing data (e.g., 2D images or videos).

- Simulation: Enabling interaction within the generated 3D environments, a key feature for training embodied AI agents.

The term "world model" is significant: it refers to AI systems that learn an internal representation of an environment, which lets them predict outcomes and plan ahead. That capability is a crucial component for advanced robotics and autonomous agents.

Context

This release follows a broader industry trend of major AI labs open-sourcing foundational models, particularly in the 3D and multimodal space. Tencent's Hunyuan lab is the company's primary AI research division, known for its large language model series and previous multimodal work. Open-sourcing a model of this complexity provides researchers and developers with a powerful tool for 3D content creation, game development, virtual reality, and training the next generation of embodied AI without the prohibitive cost of training such a model from scratch.

Making the model open-source lowers the barrier to entry for academic labs and smaller companies working on 3D AI problems. It allows the community to build upon Tencent's work, fine-tune the model for specific applications, and audit its capabilities and limitations.

gentic.news Analysis

Tencent's move to open-source HY-World 2.0 is a strategic play in the increasingly competitive 3D AI foundation model race. It follows the pattern set by other labs like Meta with its 3D generative models, but focuses specifically on the "world model" aspect critical for simulation and embodied AI. This is not just a 3D asset generator; its emphasis on simulation and interactivity positions it as a potential engine for training robotic control policies or NPC behavior in complex virtual environments.

The release aligns with a trend we've noted where major tech players are open-sourcing complex models to establish ecosystem influence and accelerate research in capital-intensive fields. By providing HY-World 2.0 to the community, Tencent is effectively crowdsourcing the exploration of its model's applications and limitations, while simultaneously building developer mindshare around its Hunyuan AI platform. The success of this strategy will depend on the model's actual performance, ease of use, and documentation, details not provided in the initial announcement. Practitioners should watch for published benchmarks, comparisons against alternatives such as Nvidia's GET3D or derivatives of Google's DreamFusion, and the specific license terms, which will determine its commercial viability.

Frequently Asked Questions

What is a "world model" in AI?

A world model is an AI system that learns a compressed spatial and temporal representation of an environment. It allows an agent to predict future states or outcomes based on its actions without needing to interact with the real world at every step. This is a foundational concept for developing capable, sample-efficient reinforcement learning agents and is a key research area for robotics and general AI.
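To make the "predict, then plan" idea concrete, here is a minimal toy sketch in Python. It illustrates the generic world-model concept only and is not HY-World 2.0's architecture or API; the corridor environment, `TabularWorldModel`, `collect_experience`, and `plan` are hypothetical names chosen for illustration. The agent first learns a transition model from real interactions, then plans a route entirely inside that learned model without calling the real environment.

```python
from collections import Counter, defaultdict, deque

# Hypothetical toy environment: a 1-D corridor with positions 0..N-1.
N = 6
ACTIONS = (-1, +1)  # move left, move right


def real_step(state, action):
    """True environment dynamics (hidden from the agent); walls clamp movement."""
    return min(max(state + action, 0), N - 1)


class TabularWorldModel:
    """Hypothetical learned model of next_state given (state, action)."""

    def __init__(self):
        # Counts let the model represent stochastic dynamics too; here the
        # dynamics are deterministic, so the most common outcome is exact.
        self.counts = defaultdict(Counter)

    def observe(self, s, a, s_next):
        self.counts[(s, a)][s_next] += 1

    def predict(self, s, a):
        """Most frequently observed next state; assume no movement if unseen."""
        seen = self.counts.get((s, a))
        if not seen:
            return s
        return seen.most_common(1)[0][0]


def collect_experience(model):
    """Interact with the real environment once per (state, action) pair."""
    for s in range(N):
        for a in ACTIONS:
            model.observe(s, a, real_step(s, a))


def plan(model, start, goal, horizon=N):
    """Breadth-first search over *imagined* transitions from the learned model.

    Returns the shortest action list reaching `goal`, or None if none is found.
    """
    frontier = deque([(start, [])])
    visited = {start}
    while frontier:
        s, actions = frontier.popleft()
        if s == goal:
            return actions
        if len(actions) >= horizon:
            continue
        for a in ACTIONS:
            s_next = model.predict(s, a)  # no call to real_step here
            if s_next not in visited:
                visited.add(s_next)
                frontier.append((s_next, actions + [a]))
    return None


if __name__ == "__main__":
    model = TabularWorldModel()
    collect_experience(model)
    print(plan(model, start=0, goal=N - 1))
```

The design choice worth noting is the split between `collect_experience`, which is the only code that touches the real environment, and `plan`, which queries only the learned model. Real world models replace the lookup table with a neural network over high-dimensional observations, but the same separation is what makes them sample-efficient.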

What can HY-World 2.0 be used for?

Potential applications include rapid prototyping of 3D scenes for games and virtual reality, creating synthetic training environments for robotics and autonomous vehicle AI, architectural visualization, and as a research testbed for developing and benchmarking new algorithms in 3D reconstruction, generation, and embodied AI.

How does this compare to other open-source 3D AI models?

While several open-source models generate 3D objects from text or images (e.g., Shap-E, OpenLRM), HY-World 2.0 appears to be distinguished by its focus on complete, interactive environments (worlds) rather than single objects. Its direct competitors would be other large-scale 3D scene generators and simulators, many of which are still proprietary or research-only. The quality of its outputs, its scale, and its interactivity features will determine its standing once the model is publicly available for testing.

Is HY-World 2.0 available now?

The announcement states that the model is being open-sourced. The next step to watch for is Tencent publishing the model weights, code, and documentation on a platform such as GitHub or Hugging Face. Developers should monitor Tencent Hunyuan's official channels for the actual release repository and installation instructions.