What Happened: The Shift from AI Toys to Production Agents

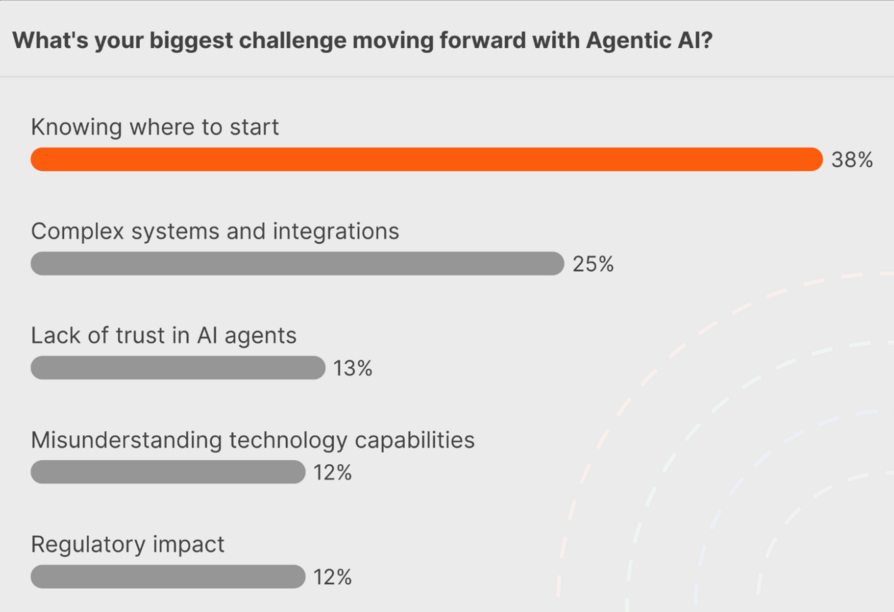

The article presents a critical perspective on the current state of enterprise AI adoption. While most executives claim they are investing enough in AI, few can point to specific workflows that AI agents have materially improved. The author argues that the gap is not technological (foundation models and vector databases are readily available) but organizational: what is missing is an operating model.

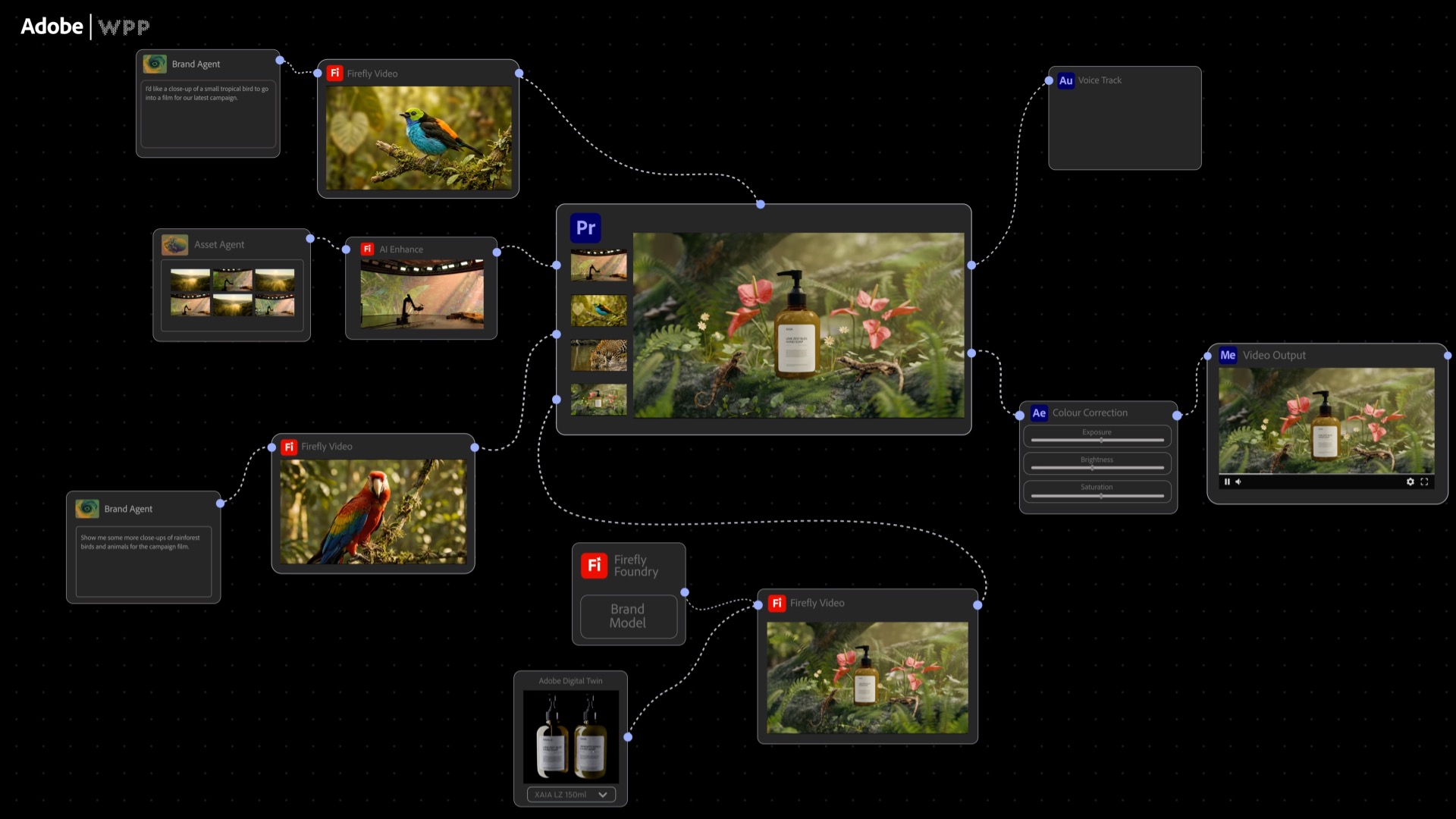

By 2026, the enterprises seeing real ROI from generative AI won't just be deploying models; they'll be deploying agents: autonomous, tool-using AI systems. When done right, agentic AI resembles a well-run team more than magic software: each agent has a clear job, a supervisor, reliable tools, and a mechanism for improvement.

The core thesis is that organizations need to stop asking "Where can we use an agent?" and instead identify workflows already structured like jobs an agent could do. This requires satisfying the Four Pillars of Agentic Readiness.

The Four Pillars of Agentic Readiness

Pillar 1: Clear Start, End, and Purpose

An agent needs an unambiguous trigger, a defined completion state, and the ability to handle reasonable edge cases without failing. The example given is an automated invoice processor: the trigger is an email arriving in an S3 bucket, the purpose is to extract data and match it to a purchase order, and the end state is either submission to an ERP system or a flag for human review. If a human team cannot articulate what "done well" looks like, the workflow isn't ready for an agent.
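One way to make this pillar concrete is to write the workflow down as data and check which elements are still undefined. The sketch below is illustrative only: `WorkflowSpec`, `readiness_gaps`, and the field names are hypothetical and do not come from the article or from any AWS service.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowSpec:
    """Hypothetical description of a candidate workflow (all names are illustrative)."""
    name: str
    trigger: str = ""
    purpose: str = ""
    end_states: list[str] = field(default_factory=list)
    done_well_criteria: list[str] = field(default_factory=list)


def readiness_gaps(spec: WorkflowSpec) -> list[str]:
    """Return the Pillar 1 elements that have not been defined yet."""
    gaps = []
    if not spec.trigger.strip():
        gaps.append("trigger")
    if not spec.purpose.strip():
        gaps.append("purpose")
    if not spec.end_states:
        gaps.append("end_states")
    if not spec.done_well_criteria:
        gaps.append("done_well_criteria")
    return gaps


# The invoice example from the text, with "done well" deliberately left undefined.
invoice_processor = WorkflowSpec(
    name="invoice-processor",
    trigger="email arrives in S3",
    purpose="extract invoice data and match it to a purchase order",
    end_states=["submitted_to_erp", "flagged_for_human_review"],
    done_well_criteria=[],
)

print(readiness_gaps(invoice_processor))  # ['done_well_criteria']
```

An empty result would suggest the workflow clears this pillar on paper; it says nothing about the other three.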

Pillar 2: Access to Reliable Tools and Data

Agents must have access to the same reliable tools and data sources that human employees use to complete the task. This includes APIs, databases, and internal systems. The infrastructure must support secure, governed access. On AWS, this involves services like Amazon Bedrock for foundation models, Knowledge Bases for enterprise data, and Lambda functions for custom logic.

Pillar 3: Defined Guardrails and Escalation Paths

Production agents require safety mechanisms. This includes:

- Input/Output Validation: Checking for harmful content, data leaks, or policy violations.

- Confidence Thresholds: Knowing when to escalate to a human (e.g., if confidence in an extracted invoice amount is below 95%).

- Supervisory Oversight: A human-in-the-loop or a supervisory agent to review critical decisions or handle exceptions.
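The confidence-threshold guardrail can be sketched as a small routing function. This is a minimal illustration under stated assumptions: the extraction step is assumed to return a per-field confidence between 0 and 1, and `ExtractedField`, `route_invoice`, and the action names are hypothetical.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.95  # the 95% figure used in the example above


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # 0.0 to 1.0, assumed to be supplied by the extraction step


def route_invoice(fields: list[ExtractedField],
                  threshold: float = CONFIDENCE_THRESHOLD) -> dict:
    """Escalate if any field falls below the threshold; otherwise submit."""
    low = [f.name for f in fields if f.confidence < threshold]
    if low:
        return {"action": "escalate_to_human",
                "reason": "low confidence: " + ", ".join(low)}
    return {"action": "submit_to_erp",
            "reason": "all fields at or above threshold"}


decision = route_invoice([
    ExtractedField("vendor", "ACME GmbH", 0.99),
    ExtractedField("amount", "1200.00", 0.91),
])
print(decision["action"])  # escalate_to_human
```

Returning a reason alongside the action gives the human reviewer, or a supervisory agent, the context for the escalation.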

Pillar 4: Measurable Outcomes and Feedback Loops

You cannot improve what you cannot measure. Each agent deployment must have defined KPIs (e.g., processing time, accuracy rate, cost per transaction) and a built-in feedback mechanism. This allows for continuous refinement of the agent's instructions, tools, and underlying models.
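As a rough sketch of what tracking these KPIs could look like, the snippet below aggregates per-run records into the three example metrics. The `RunRecord` fields and the `summarize_runs` helper are assumptions for illustration; where the records come from (logs, a database, a review queue) is left open.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunRecord:
    seconds: float   # wall-clock processing time for one transaction
    correct: bool    # whether a reviewer judged the result correct
    cost: float      # model and infrastructure cost for the run, in any one currency


def summarize_runs(runs: list[RunRecord]) -> dict:
    """Compute processing time, accuracy rate, and cost per transaction."""
    if not runs:
        raise ValueError("no runs to summarize")
    return {
        "avg_processing_seconds": mean(r.seconds for r in runs),
        "accuracy_rate": sum(r.correct for r in runs) / len(runs),
        "cost_per_transaction": sum(r.cost for r in runs) / len(runs),
    }


runs = [
    RunRecord(seconds=10.0, correct=True, cost=0.02),
    RunRecord(seconds=20.0, correct=True, cost=0.04),
    RunRecord(seconds=30.0, correct=False, cost=0.03),
    RunRecord(seconds=20.0, correct=True, cost=0.03),
]
print(summarize_runs(runs))
```

The incorrect runs are the natural input to the feedback loop: each one is a candidate for revising the agent's instructions or tools.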

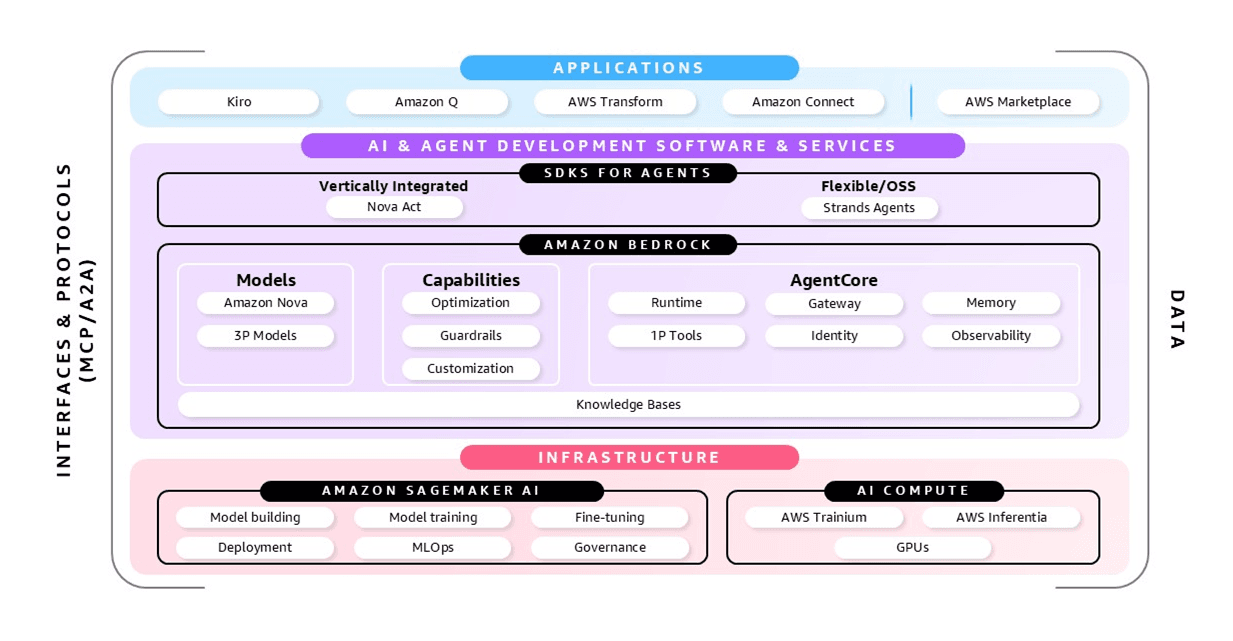

Technical Details: The AWS Stack for Agentic AI

The guide focuses on Amazon Bedrock AgentCore as the foundational service. Bedrock provides managed access to leading foundation models (like those from Anthropic, which Amazon has invested in) and the tools to build, orchestrate, and monitor agents.

Key components of the operational stack include:

- Orchestration: Using AWS Step Functions or custom orchestrators to manage multi-step agent workflows.

- Tool Integration: Connecting agents to internal systems via Lambda, API Gateway, and private APIs.

- Observability: Implementing comprehensive logging with CloudWatch, tracing with X-Ray, and monitoring agent decisions and costs.

- Security & Governance: Applying IAM roles, VPC endpoints, and data loss prevention policies tailored for autonomous AI systems.
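The tool integration, observability, and governance points can be illustrated together with a plain-Python stand-in: a registry that only runs allow-listed tools and records every call. This is not AWS code. In a real deployment the allow-list would presumably be enforced by IAM, the tools hosted in Lambda, and the log lines shipped to CloudWatch; `ToolRegistry` and the tool names are hypothetical.

```python
import logging
from typing import Any, Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent.tools")


class ToolRegistry:
    """Illustrative stand-in for governed tool access with an audit trail."""

    def __init__(self, allowed: set[str]):
        self._allowed = allowed
        self._tools: dict[str, Callable[..., Any]] = {}
        self.audit: list[dict] = []

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def call(self, name: str, **kwargs: Any) -> Any:
        # Deny anything that is not both registered and allow-listed.
        if name not in self._allowed or name not in self._tools:
            self.audit.append({"tool": name, "status": "denied"})
            log.warning("denied tool call: %s", name)
            raise PermissionError(f"tool not permitted: {name}")
        result = self._tools[name](**kwargs)
        self.audit.append({"tool": name, "status": "ok", "args": kwargs})
        log.info("tool call ok: %s", name)
        return result


registry = ToolRegistry(allowed={"lookup_purchase_order"})
registry.register("lookup_purchase_order", lambda po_id: {"po_id": po_id, "total": 1200.0})
registry.register("issue_refund", lambda amount: {"refunded": amount})

print(registry.call("lookup_purchase_order", po_id="PO-1"))
```

Calling `registry.call("issue_refund", amount=50)` here raises `PermissionError`, because the tool is registered but not on the agent's allow-list, and the denial is still written to the audit trail.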

The article's focus on operations rather than models reflects a maturing landscape. Recent events, such as Amazon mandating senior engineer sign-off for all AI-assisted production code changes after outages linked to AI coding tools, underscore the growing emphasis on operational rigor and safety in AI deployments.

Retail & Luxury Implications

The framework described is directly applicable to retail and luxury, where numerous structured, repetitive workflows exist. The shift from asking "Where can we use AI?" to identifying "agent-ready jobs" is crucial for moving beyond chatbots and image generators.

Concrete Agent-Ready Workflows in Retail:

- Automated Customer Service Escalation: An agent monitors incoming support tickets. Using clear triggers (e.g., a ticket containing the word "refund" and a value over €500), it gathers order history, policy documents, and previous communications, then either provides a resolution or prepares a comprehensive dossier for a human agent, clearly flagging the reason for escalation.

- Personalized Outreach Orchestrator: Triggered by a customer behavior (e.g., browsing a high-value item three times), an agent accesses the CRM, purchase history, and inventory data. It drafts a personalized email, checks it against brand tone guidelines, and either schedules it for sending or, for high-net-worth clients, escalates to a human relationship manager.

- Supply Chain Exception Handler: When a logistics API reports a delay for a shipment containing limited-edition products, an agent is triggered. It identifies affected customers, checks their tier (e.g., VIC status), retrieves pre-approved messaging templates, and initiates proactive communication, all while logging the incident for supply chain analysis.
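To show how a "clear trigger" from the first workflow might be expressed, here is a minimal sketch of the refund rule (the word "refund" plus a value over €500) and the dossier handed to a human agent. `Ticket`, `should_trigger`, `build_dossier`, and the dossier fields are hypothetical; real triggers would likely need more robust text matching than a substring check.

```python
from dataclasses import dataclass

REFUND_KEYWORD = "refund"
VALUE_THRESHOLD_EUR = 500.0  # the €500 threshold from the example above


@dataclass
class Ticket:
    ticket_id: str
    body: str
    order_value_eur: float


def should_trigger(ticket: Ticket) -> bool:
    """True when the ticket mentions a refund and the value exceeds the threshold."""
    mentions_refund = REFUND_KEYWORD in ticket.body.lower()
    return mentions_refund and ticket.order_value_eur > VALUE_THRESHOLD_EUR


def build_dossier(ticket: Ticket, order_history: list[str], policy_excerpt: str) -> dict:
    """Bundle the context a human agent needs, with the escalation reason stated."""
    return {
        "ticket_id": ticket.ticket_id,
        "escalation_reason": f"refund request over EUR {VALUE_THRESHOLD_EUR:.0f}",
        "order_history": order_history,
        "policy_excerpt": policy_excerpt,
    }


ticket = Ticket("T-1001", "I would like a REFUND for my handbag.", 2400.0)
if should_trigger(ticket):
    dossier = build_dossier(ticket, ["order O-77: handbag"], "Returns accepted within 30 days.")
    print(dossier["escalation_reason"])  # refund request over EUR 500
```

A ticket at exactly €500, or one that never mentions a refund, does not fire the trigger and stays in the normal queue.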

The Business Impact: Successfully operationalizing agents in these areas translates to measurable outcomes: reduced handle time for customer service, increased conversion through timely personalization, and enhanced brand trust through proactive communication. It moves AI from a cost center (experimentation) to a driver of efficiency and customer experience.

Implementation Approach: For a luxury brand, the journey begins with a process audit. Identify a single, well-defined workflow that meets the four pillars. Start with a pilot using Bedrock, focusing intensely on Pillar 3 (Guardrails) to protect brand reputation and client data. Implement robust observability from day one to measure performance against business KPIs, not just technical metrics.

Governance & Risk Assessment: The need for guardrails is paramount. A poorly configured agent making autonomous decisions about client discounts or communications can cause significant brand damage. A governance model must be established, mirroring the approval chains for marketing or pricing decisions. The recent Amazon policy on senior engineer sign-offs for AI-assisted code is a precedent; similar oversight is needed for agent actions in customer-facing or financial workflows. Privacy (especially under GDPR for EU clients), bias in personalization, and the maturity of the underlying LLMs are critical risk factors that must be addressed in the design phase.