What It Does

The substack-mcp-plus server transforms Claude Code from a pure coding assistant into a market research tool. It exposes three new tools to your Claude Code session:

research_substack: Searches across Substack for writers and publications.

research_substack_post: Fetches and analyzes the content of a specific post.

research_substack_publication: Retrieves metadata and a list of posts for an entire publication.

For example, you can use Claude to research the competitive landscape for a new developer tool, analyze the writing style of successful technical blogs, or discover which topics are trending in a specific tech niche, all without leaving your terminal.

Setup

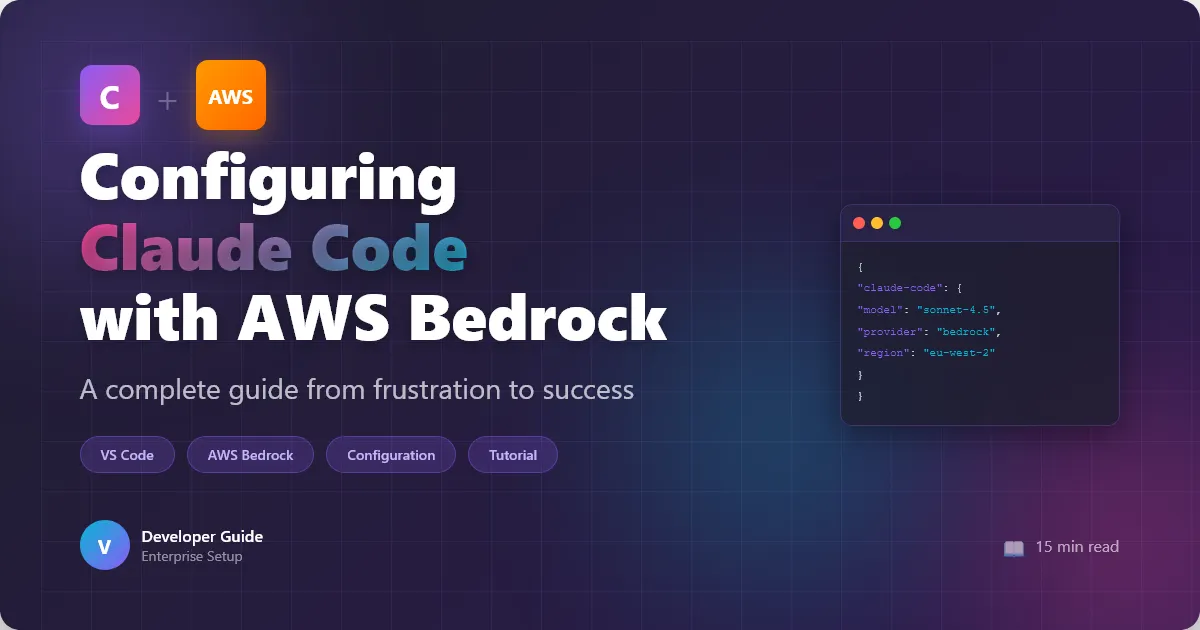

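Installation goes through the MCP configuration. Add the server under mcpServers in your claude_desktop_config.json (that file belongs to Claude Desktop; Claude Code reads the same mcpServers structure from a project-level .mcp.json):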

    {
      "mcpServers": {
        "substack": {
          "command": "npx",
          "args": ["-y", "@substack-mcp-plus/server"]
        }
      }
    }

After restarting Claude Code, the tools will be available. You can verify by starting a session and checking the attached tools list.
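If the config file already lists other MCP servers, pasting the snippet by hand can overwrite them. The following is a minimal sketch, not part of the server itself, of merging the entry programmatically. The function names `add_substack_server` and `update_config_file` are hypothetical helpers introduced for illustration, and the file path you pass in is an assumption that depends on your setup.

```python
import json
from pathlib import Path

# Server entry copied from the configuration shown above.
SUBSTACK_SERVER = {
    "command": "npx",
    "args": ["-y", "@substack-mcp-plus/server"],
}


def add_substack_server(config: dict) -> dict:
    """Return a copy of config with the substack entry added under mcpServers.

    Existing servers and unrelated top-level keys are preserved.
    """
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))
    servers["substack"] = dict(SUBSTACK_SERVER)
    merged["mcpServers"] = servers
    return merged


def update_config_file(path: Path) -> None:
    """Read the JSON config at path (if it exists), merge the entry, write it back."""
    config = json.loads(path.read_text()) if path.exists() else {}
    path.write_text(json.dumps(add_substack_server(config), indent=2) + "\n")
```

Calling `update_config_file(Path("claude_desktop_config.json"))` from the directory that holds the file would apply the merge; adjust the path to wherever your configuration actually lives.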

When To Use It

This server shines in the planning and validation phase of any content-driven or community-focused project. Here are concrete use cases for developers:

Validating a New Blog or Newsletter Idea: Before you write the first post for your new technical series, use research_substack_publication to analyze 3-5 leading publications in that space. Ask Claude: "What are the most common post structures? What topics get the most engagement (comments/likes)?"

Competitive Analysis for DevTools: Building a new CLI tool? Use research_substack to find writers discussing similar tools. Then, use research_substack_post to have Claude summarize their pain points and praised features. Prompt: "From these three posts, extract a list of the top 5 user complaints about existing deployment CLIs."

Finding Collaboration Opportunities: Looking for technical co-authors or experts to interview? Use the search tool to discover active, high-quality writers in your stack (e.g., "Go" and "performance").

Example Prompt Flow:

/claude

I'm planning a Substack on advanced Rust patterns. First, use `research_substack` to find the top 3 publications about Rust programming. Then, use `research_substack_publication` on the first result. Give me a breakdown of their most common post categories and the average post length.

This follows a broader trend of MCP servers expanding beyond pure code execution into research and data gathering, a pattern we've seen with servers for GitHub and infrastructure-as-code tools.