What Happened

On March 29, 2026, a technical guide titled "When to Prompt, RAG, or Fine-Tune" was published on Medium. It addresses a decision the author describes as basic, yet one that "most people still get wrong in practice": choosing the right method for customizing a large language model (LLM) for a specific use case. It is part of a steady stream of practical implementation guides on Medium; 10 articles referencing the platform appeared this week alone.

The core challenge the article tackles is the strategic selection between three primary approaches:

- Prompt Engineering: Modifying the input prompt to guide model behavior

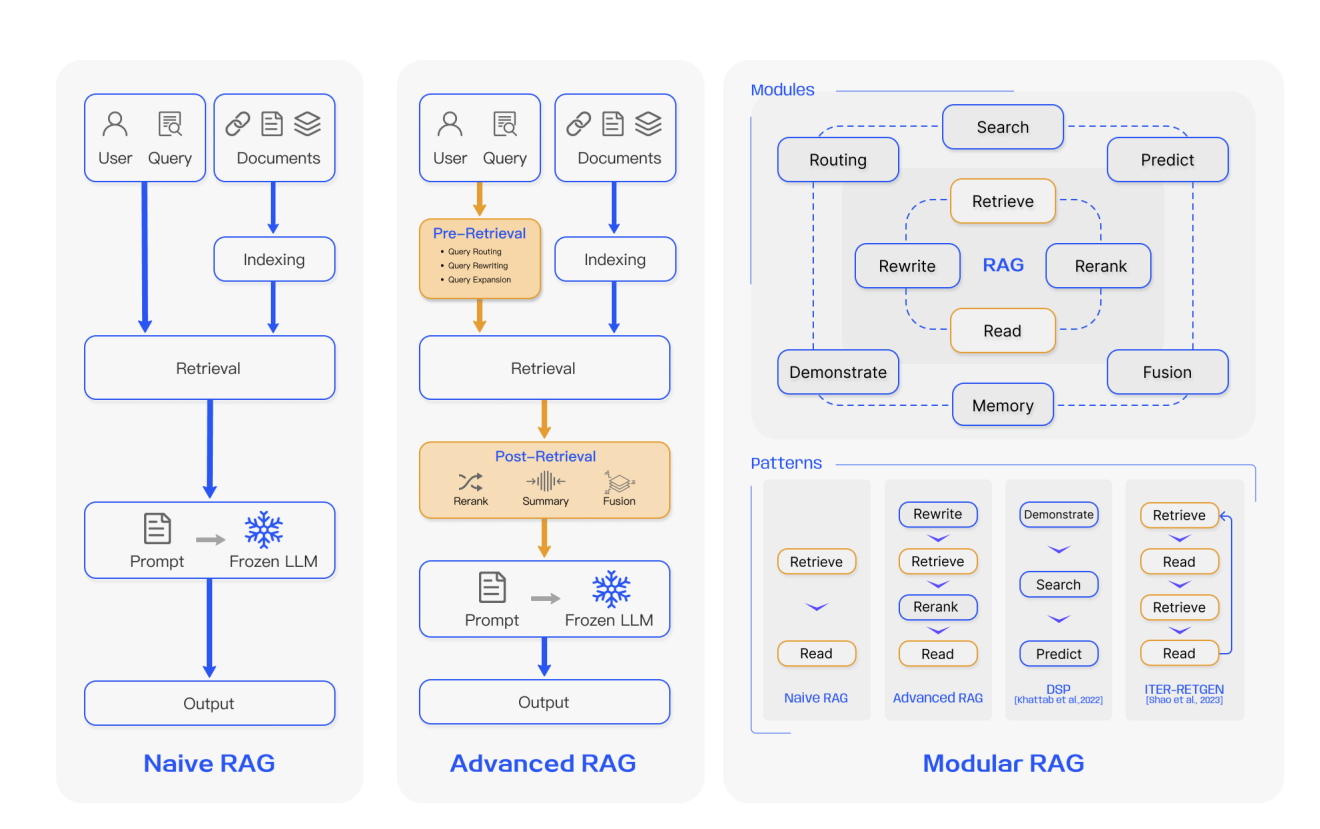

- Retrieval-Augmented Generation (RAG): Augmenting the model with external knowledge retrieval

- Fine-Tuning: Updating the model's weights on specific training data

Technical Details

Because the full article sits behind Medium's subscription paywall, the breakdown below is inferred from its title and context, which suggest a decision framework built on several key factors:

1. Knowledge Requirements

- Prompt Engineering: Suitable when the model already possesses the necessary knowledge but needs guidance on how to apply it

- RAG: Required when the model needs access to external, proprietary, or frequently updated information not present in its training data

- Fine-Tuning: Appropriate when the model needs to learn new patterns or behaviors that aren't easily elicited through prompting
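As a rough illustration, the knowledge-requirement rules above can be encoded as a small helper. This is a minimal sketch of our own, not the article's (paywalled) framework; the `UseCase` flags and the `choose_approaches` function are hypothetical, and a real decision also weighs the data, complexity, and cost factors that follow.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    # Hypothetical flags; names are illustrative, not taken from the article.
    needs_external_data: bool  # proprietary or frequently updated information
    needs_new_behavior: bool   # patterns that prompting cannot reliably elicit


def choose_approaches(case: UseCase) -> list[str]:
    """Suggest approaches from knowledge requirements alone.

    Returns a list because the approaches are often combined.
    """
    approaches = []
    if case.needs_external_data:
        approaches.append("rag")
    if case.needs_new_behavior:
        approaches.append("fine-tuning")
    if not approaches:
        # The model already has the knowledge; it only needs guidance.
        approaches.append("prompt engineering")
    return approaches


# Example: a product Q&A bot that must quote current inventory.
print(choose_approaches(UseCase(needs_external_data=True, needs_new_behavior=False)))
# ['rag']
```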

2. Data Characteristics

- Volume: RAG needs no training examples, only a corpus of documents to index, whereas fine-tuning requires a curated set of training examples

- Stability: Fine-tuning works best with stable knowledge domains, while RAG excels with dynamic information

- Specificity: Highly domain-specific tasks often benefit from fine-tuning, while broader knowledge tasks may use RAG

3. Implementation Complexity

- Prompt Engineering: Lowest complexity, immediate implementation

- RAG: Moderate complexity involving retrieval systems and vector databases

- Fine-Tuning: Highest complexity requiring training infrastructure and expertise
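To make the "retrieval systems and vector databases" part concrete, here is a minimal, dependency-free sketch of the RAG retrieval step. It assumes a toy bag-of-words vector in place of a real embedding model and a Python list in place of a vector database; the documents and function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    # Rank every document against the query and keep the top k. Vector
    # databases typically do this at scale with approximate indexes.
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    # Ground the model's answer in the retrieved passages.
    passages = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{passages}\n\nQuestion: {query}"


# Hypothetical product knowledge base.
DOCS = [
    "The spring tote is made of full-grain calfskin.",
    "Returns are accepted within 30 days with a receipt.",
    "The silk scarf is printed in Como, Italy.",
]
question = "What leather is the tote made of?"
print(build_prompt(question, retrieve(question, DOCS, k=1)))
```

In a production system, `embed` would call an embedding model and `DOCS` would live in a vector database; running, indexing, and keeping that store current is where most of RAG's extra complexity comes from.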

4. Cost Considerations

- Prompt Engineering: Primarily inference costs

- RAG: Inference costs plus retrieval system maintenance

- Fine-Tuning: Training costs plus ongoing inference costs
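The cost bullets can be turned into a rough monthly estimate. The sketch below uses a deliberately simplified model: every input is a hypothetical placeholder to replace with your provider's actual prices and your own volumes, and engineering time is ignored. Two details it does capture are that retrieved context adds input tokens to every RAG query, and that fine-tuned models are sometimes priced differently per token, which is why each scenario carries its own price.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    # Every value is a hypothetical placeholder, not a real price.
    queries_per_month: int
    price_per_1k_tokens: float      # inference price for the model used
    prompt_tokens: int              # tokens per query (instructions + question)
    retrieved_tokens: int = 0       # extra context per query added by RAG
    retrieval_monthly: float = 0.0  # vector database / retrieval upkeep
    training_cost: float = 0.0      # one-off fine-tuning cost
    amortize_months: int = 12       # months over which training is spread


def monthly_cost(c: CostInputs) -> float:
    """Inference + retrieval upkeep + amortized training, per month."""
    tokens_per_query = c.prompt_tokens + c.retrieved_tokens
    inference = c.queries_per_month * tokens_per_query / 1000 * c.price_per_1k_tokens
    training = c.training_cost / c.amortize_months
    return inference + c.retrieval_monthly + training


# Arbitrary illustrative inputs; only the structure of the comparison matters.
scenarios = {
    "prompting": CostInputs(100_000, 0.002, 500),
    "rag": CostInputs(100_000, 0.002, 500, retrieved_tokens=1_500, retrieval_monthly=200.0),
    "fine-tuning": CostInputs(100_000, 0.004, 300, training_cost=1_200.0),
}
for name, inputs in scenarios.items():
    print(f"{name}: {monthly_cost(inputs):.2f}")
```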

This publication aligns with a broader trend of practical RAG guidance appearing across technical platforms. Just yesterday (March 28), another Medium article warned about RAG deployment bottlenecks, and on March 25, a developer shared a cautionary tale about RAG system failure at production scale.

Retail & Luxury Implications

For retail and luxury AI practitioners, this decision framework has significant practical implications:

Customer Service Applications

- Prompt Engineering: Best for standardizing tone and response format across customer service agents

- RAG: Essential for providing accurate information about new product launches, inventory availability, or policy changes

- Fine-Tuning: Useful for developing specialized product recommendation systems based on historical customer interactions
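For the tone-and-format use case, prompt engineering usually amounts to one shared system prompt that wraps every conversation. A minimal sketch, assuming the role/content message-list format accepted by most chat-completion APIs; `STYLE_GUIDE` and `build_messages` are hypothetical names, and the rules are placeholders for a brand's own guidelines.

```python
# Hypothetical house-style rules; a real brand would supply its own.
STYLE_GUIDE = (
    "You are a client advisor for a luxury house. "
    "Reply in a warm, formal register, never use emojis, "
    "and keep answers under 120 words. "
    "End every reply with an offer of further assistance."
)


def build_messages(customer_message: str, channel: str = "email") -> list[dict]:
    """Wrap a customer message in the shared style guide.

    Uses the role/content message list common to chat-completion APIs.
    """
    return [
        {"role": "system", "content": f"{STYLE_GUIDE} Format the reply for {channel}."},
        {"role": "user", "content": customer_message},
    ]


messages = build_messages("How should I care for my leather bag?", channel="chat")
```

Because every conversation shares `STYLE_GUIDE`, changing the house tone is a one-line edit rather than a retraining job, which is the main appeal of prompt engineering here.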

Product Knowledge Management

- RAG: The clear choice for maintaining up-to-date knowledge about materials, craftsmanship techniques, sustainability credentials, and brand heritage that evolves over time

- Fine-Tuning: Could help models understand nuanced relationships between product attributes and customer preferences specific to luxury markets

Content Generation

- Prompt Engineering: Sufficient for generating social media captions or email subject lines with consistent brand voice

- Fine-Tuning: Potentially valuable for creating product descriptions that capture specific aesthetic qualities unique to luxury goods
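Fine-tuning a model to write product descriptions starts with a curated set of example pairs. The sketch below formats them in the chat-style JSONL layout used by several hosted fine-tuning services (OpenAI's among them); check your provider's documentation for its exact schema. The system prompt, attribute names, and sample copy are hypothetical.

```python
import json

# Hypothetical instruction; a brand would write its own.
SYSTEM_PROMPT = "Write a product description in the house style."


def to_training_record(attributes: dict, approved_copy: str) -> dict:
    """One example: product attributes in, copywriter-approved text out."""
    attr_text = "; ".join(f"{k}: {v}" for k, v in attributes.items())
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": attr_text},
            {"role": "assistant", "content": approved_copy},
        ]
    }


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as JSONL: one JSON object per line."""
    return "".join(json.dumps(r, ensure_ascii=False) + "\n" for r in records)


# Hypothetical example pair.
record = to_training_record(
    {"item": "scarf", "material": "silk twill", "finish": "hand-rolled edges"},
    "A silk twill scarf finished by hand, its rolled edges traced stitch by stitch.",
)
print(to_jsonl([record]), end="")
```

The code is trivial; the real cost of this approach lies in assembling enough approved pairs that genuinely capture the aesthetic the model should learn.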

Internal Knowledge Systems

- RAG: Ideal for employee training systems that need to reference constantly updated operational procedures, compliance requirements, or vendor information

The framework helps avoid common pitfalls, such as fine-tuning a model for a task better suited to RAG (like accessing current inventory data) or over-investing in RAG infrastructure for tasks that prompt engineering alone can handle.

Implementation Considerations for Retail

Data Governance Requirements

Luxury brands face particularly stringent requirements around data privacy and intellectual property protection. RAG systems offer advantages here because:

- Proprietary knowledge can be kept in secure retrieval systems rather than baked into model weights

- Access controls can be implemented at the retrieval layer

- Source attribution is more transparent than with fine-tuned models
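The first and third points can be sketched together: filter documents by the user's roles *before* ranking, then cite the IDs of whatever was used. This is a minimal illustration under stated assumptions: `Doc`, `retrieve_for_user`, `cite`, the role names, and the sample documents are hypothetical, and a shared-word count stands in for vector similarity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_roles: frozenset[str]  # roles permitted to see this document


def retrieve_for_user(query: str, docs: list[Doc], user_roles: set[str], k: int = 3) -> list[Doc]:
    # 1. Enforce access control before ranking, so restricted text can
    #    never reach the prompt of a user who is not allowed to see it.
    visible = [d for d in docs if d.allowed_roles & user_roles]
    # 2. Toy relevance score (shared lowercase words) standing in for
    #    vector similarity.
    q = set(query.lower().split())
    ranked = sorted(visible, key=lambda d: len(q & set(d.text.lower().split())), reverse=True)
    return ranked[:k]


def cite(docs: list[Doc]) -> str:
    # Source attribution: name the documents the answer was grounded in.
    return "Sources: " + ", ".join(d.doc_id for d in docs)


# Hypothetical documents and roles.
DOCS = [
    Doc("hr-001", "salary bands for boutique staff", frozenset({"hr"})),
    Doc("ops-007", "boutique opening hours policy", frozenset({"staff", "hr"})),
]
print(cite(retrieve_for_user("boutique hours", DOCS, {"staff"})))
```

With a fine-tuned model, by contrast, anything in the training data is baked into the weights and cannot be withheld per user in this way.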

Multi-Brand Portfolio Management

For conglomerates like LVMH or Kering managing multiple brands, a hybrid approach often makes sense:

- Fine-Tune a base model on shared luxury market principles

- Use RAG for brand-specific knowledge retrieval

- Apply Prompt Engineering for campaign-specific adjustments
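The three layers of this hybrid can be composed in a single request builder. A minimal sketch, assuming a role/content chat-message format; `SHARED_MODEL_ID`, `BRAND_KB`, `ChatRequest`, and `build_request` are all hypothetical, and for brevity the retrieval step simply includes every document for the brand instead of ranking them.

```python
from dataclasses import dataclass

# Hypothetical ID of a model fine-tuned on material shared across the group's
# brands; a real ID would come from your provider after training.
SHARED_MODEL_ID = "ft-luxury-base-v1"

# Hypothetical per-brand knowledge bases for the RAG layer.
BRAND_KB = {
    "brand_a": ["Brand A leather goods are hand-stitched."],
    "brand_b": ["Brand B jewelry uses recycled gold."],
}


@dataclass
class ChatRequest:
    model: str
    messages: list[dict]


def build_request(brand: str, campaign_note: str, question: str) -> ChatRequest:
    # Layer 1 (fine-tuning): every brand shares the same tuned base model.
    # Layer 2 (RAG): only this brand's documents enter the context.
    brand_facts = "\n".join(BRAND_KB.get(brand, []))
    # Layer 3 (prompt engineering): campaign-specific instructions.
    system = f"Campaign guidance: {campaign_note}\nBrand facts:\n{brand_facts}"
    return ChatRequest(
        model=SHARED_MODEL_ID,
        messages=[
            {"role": "system", "content": system},
            {"role": "user", "content": question},
        ],
    )


request = build_request("brand_b", "Holiday gifting", "Is the gold recycled?")
```

Keeping brand knowledge out of the shared weights means one brand's facts cannot leak into another brand's answers, and each layer can change on its own schedule.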

Seasonal Adaptation

Fashion's seasonal nature creates unique challenges:

- RAG excels at incorporating new seasonal collection information

- Fine-Tuning might help models understand seasonal trend patterns over time

- Prompt Engineering can quickly adapt to temporary promotional campaigns
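One way RAG absorbs seasonal change is to store a validity window with each knowledge-base entry and filter on it before ranking. This is an assumed pattern, not something the article specifies; the `Entry` type, its field names, and the sample entries are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Entry:
    text: str
    valid_from: date
    valid_to: Optional[date] = None  # None: no planned end date


def active_entries(entries: list[Entry], today: date) -> list[Entry]:
    # Keep only entries whose validity window covers `today`. A new collection
    # becomes retrievable on its launch date and an ended promotion drops out,
    # with no retraining involved.
    return [
        e for e in entries
        if e.valid_from <= today and (e.valid_to is None or today <= e.valid_to)
    ]


# Hypothetical knowledge-base entries.
KNOWLEDGE = [
    Entry("Autumn/Winter collection: wool capes in camel.", date(2026, 9, 1)),
    Entry("Spring/Summer collection: linen shirting.", date(2026, 3, 1), date(2026, 8, 31)),
    Entry("Private-sale weekend for selected scarves.", date(2026, 3, 27), date(2026, 3, 29)),
]
print([e.text for e in active_entries(KNOWLEDGE, date(2026, 3, 28))])
```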

Cost-Benefit Analysis

Recent enterprise trend data (from March 24) shows a strong preference for RAG over fine-tuning for production AI systems. This likely reflects:

- Lower initial investment compared to fine-tuning infrastructure

- Easier maintenance and updating of knowledge bases

- Better performance on tasks requiring current information

However, the optimal choice depends on specific use cases. A high-volume personalized recommendation system might justify fine-tuning costs, while a product Q&A system would benefit more from RAG.
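One way to reason about when volume "might justify fine-tuning costs" is a break-even calculation. If fine-tuning lowers the cost per query from c_RAG to c_FT, its one-off training cost C is recovered after C / (c_RAG - c_FT) queries. A minimal sketch with hypothetical parameter names and a deliberately simplified cost model:

```python
import math


def break_even_queries(training_cost: float, rag_cost_per_query: float, ft_cost_per_query: float) -> float:
    """Number of queries after which fine-tuning's one-off cost is recovered.

    Simplified model: it assumes fine-tuning lowers the per-query cost
    (e.g. because shorter prompts replace retrieved context) and ignores
    retraining, evaluation, and staff time. Returns math.inf if fine-tuning
    is never cheaper per query.
    """
    saving_per_query = rag_cost_per_query - ft_cost_per_query
    if saving_per_query <= 0:
        return math.inf
    return training_cost / saving_per_query


# Arbitrary illustrative inputs.
print(break_even_queries(training_cost=1_000.0, rag_cost_per_query=0.005, ft_cost_per_query=0.001))
```

A high-volume recommender may cross this threshold quickly, while a low-traffic product Q&A system may never reach it; and if the underlying knowledge changes often, each retraining resets the count.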