OpenAI has quietly deployed WebSocket Mode for its Responses API. The update looks like a technical footnote, but it could change how production AI systems are built and scaled. The new persistent-connection protocol targets a critical bottleneck in current AI agent workflows, potentially reducing end-to-end latency by up to 40% for complex, multi-turn interactions involving heavy tool usage.

The Hidden Cost of Redundant Context

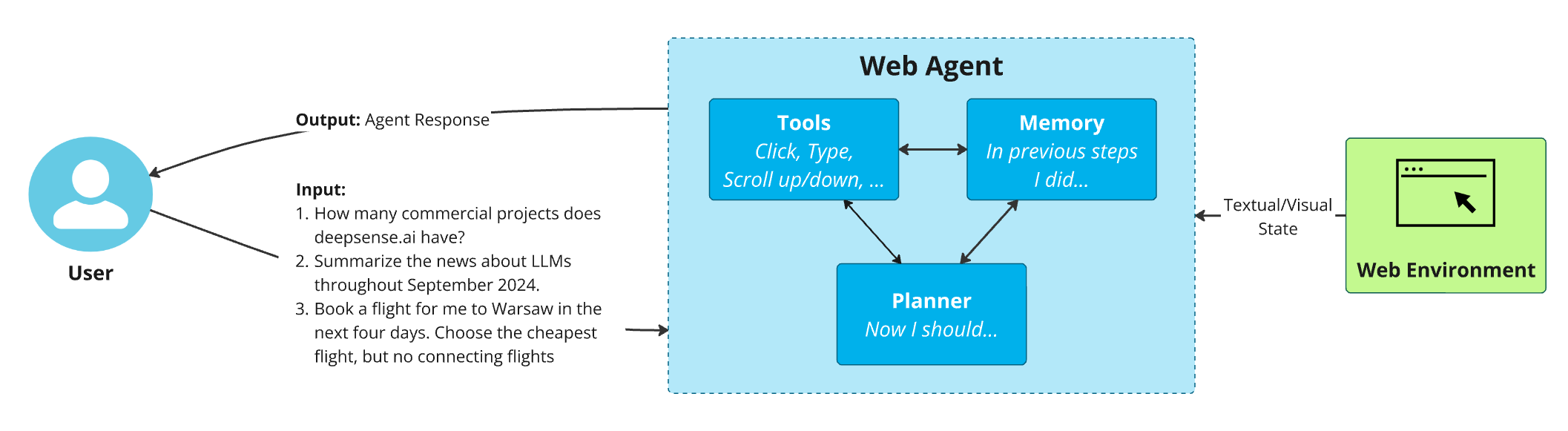

Most agentic AI workflows today run on a protocol designed for single-turn interactions. Every time an agent makes a tool call or continues a conversation, the entire conversation history, potentially thousands of tokens, is resent to the model. The model then reprocesses information it has already seen, creating what developers call "context overhead" that compounds rapidly at scale.
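To make the compounding concrete, here is a rough back-of-the-envelope sketch. It assumes every turn adds the same number of tokens, which real workloads will not do, and it counts tokens transmitted rather than tokens billed. The function names and the 500-token figure are illustrative and do not come from the announcement.

```python
def tokens_sent_full_resend(turns: int, tokens_per_turn: int) -> int:
    """Total tokens transmitted when every turn resends the whole history."""
    # Turn k carries k * tokens_per_turn tokens: all prior turns plus the new one.
    return sum(k * tokens_per_turn for k in range(1, turns + 1))


def tokens_sent_incremental(turns: int, tokens_per_turn: int) -> int:
    """Total tokens transmitted when each turn sends only its new input."""
    return turns * tokens_per_turn


if __name__ == "__main__":
    for turns in (5, 20, 50):
        full = tokens_sent_full_resend(turns, 500)
        inc = tokens_sent_incremental(turns, 500)
        print(f"{turns:>3} turns: full resend {full:>9,} | incremental {inc:>7,}")
```

Under these assumptions, a 20-turn session at 500 tokens per turn transmits 105,000 tokens with full resends versus 10,000 incrementally. The first figure grows quadratically with the number of turns, the second linearly.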

"Every agent turn, you're resending the full context. Again," notes the Product Hunt announcement. "That overhead compounds fast." This invisible tax on infrastructure has become increasingly problematic as AI agents move from experimental prototypes to production systems handling millions of interactions.

How WebSocket Mode Changes the Game

WebSocket Mode introduces persistent connections between client applications and OpenAI's API infrastructure. Instead of establishing a new HTTP connection for each request-response cycle, clients maintain an open WebSocket connection, sending only incremental inputs rather than the entire conversation history with each turn.

This architectural shift represents more than just a performance optimization—it fundamentally changes the contract between developers and AI infrastructure. By eliminating redundant data transmission, the protocol:

- Reduces bandwidth consumption significantly

- Minimizes processing overhead on both client and server sides

- Enables more responsive interactions for complex multi-step workflows

- Lowers operational costs for high-volume applications
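The difference between the two contracts can be illustrated with a toy in-memory model. This is a sketch only: it is not OpenAI's wire protocol, it opens no real connection, and `StatelessEndpoint`, `PersistentSession`, and the JSON field names are hypothetical stand-ins chosen for illustration.

```python
import json


class StatelessEndpoint:
    """Hypothetical request-response endpoint: keeps nothing between calls."""

    def handle(self, payload: str) -> int:
        history = json.loads(payload)["history"]
        return len(history)  # number of turns the server had to re-read


class PersistentSession:
    """Hypothetical long-lived connection: the server side keeps the history."""

    def __init__(self) -> None:
        self.history: list[str] = []

    def handle(self, payload: str) -> int:
        self.history.append(json.loads(payload)["input"])
        return len(self.history)  # turns known to the server so far


def run(turn_inputs: list[str]) -> tuple[int, int]:
    """Return (bytes sent statelessly, bytes sent over one persistent session)."""
    stateless, session = StatelessEndpoint(), PersistentSession()
    client_history: list[str] = []
    stateless_bytes = session_bytes = 0
    for text in turn_inputs:
        client_history.append(text)
        full = json.dumps({"history": client_history})  # whole history each turn
        delta = json.dumps({"input": text})             # only the new input
        # Both server models end up knowing the same number of turns.
        assert stateless.handle(full) == session.handle(delta)
        stateless_bytes += len(full.encode("utf-8"))
        session_bytes += len(delta.encode("utf-8"))
    return stateless_bytes, session_bytes


if __name__ == "__main__":
    sent_full, sent_delta = run([f"tool result {i}" for i in range(10)])
    print(f"stateless: {sent_full} bytes, persistent session: {sent_delta} bytes")
```

In this model both servers see the same conversation, but the persistent session receives each input only once. The trade-off is that the server must now hold per-connection state, which is the shift in contract described above.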

The Broader Context: AI Agents at an Inflection Point

This development arrives at a pivotal moment for AI agents. According to recent analysis, agents crossed a critical reliability threshold in late 2026, transforming programming capabilities and enterprise adoption patterns. As agents become more sophisticated and more deeply integrated into business workflows, infrastructure efficiency becomes paramount.

OpenAI's timing is strategic. The company recently secured a Pentagon contract to replace Anthropic's services and participated in closed-door government meetings about AI safety and regulation. These high-stakes deployments demand robust, efficient infrastructure capable of handling complex, multi-turn interactions reliably at scale.

Competitive Implications and Industry Impact

The WebSocket announcement comes amid intensifying competition in the AI infrastructure space. With OpenAI competing directly with Anthropic, Google, and other major players, infrastructure efficiency represents a new battleground beyond mere model capabilities. The company's partnerships with McKinsey & Company, Capgemini, Accenture, and Boston Consulting Group suggest enterprise deployment at scale is a primary focus.

This infrastructure optimization follows OpenAI's pattern of incremental but significant improvements to its developer platform. From Codex to GPT-4o and the rumored GPT-5.4 launch, the company has consistently balanced breakthrough model development with practical infrastructure enhancements.

Practical Implications for Developers

For developers building AI agents, WebSocket Mode offers tangible benefits:

- Reduced Complexity: Simplified connection management for long-running conversations

- Improved User Experience: Faster response times for tool-heavy workflows

- Cost Optimization: Lower token consumption for extended interactions

- Scalability: More efficient handling of concurrent agent sessions

The latency reduction of up to 40% applies specifically to "heavy tool-call workflows": precisely the type of complex, multi-step processes that characterize advanced AI agents in production environments.

Looking Forward: The Infrastructure-Agent Coevolution

As AI agents become more sophisticated, their infrastructure requirements evolve in tandem. OpenAI's WebSocket Mode acknowledges that the stateless request-response paradigm of early AI APIs is a poor fit for the stateful, persistent interactions that characterize advanced agents.

This development suggests a future where AI infrastructure increasingly resembles traditional application infrastructure—with persistent connections, state management, and optimized data flow becoming standard rather than exceptional. As rumors circulate about GPT-5.4 and potential intermediate version skips, infrastructure improvements like WebSocket Mode may prove as important as model advancements for real-world deployment.

Conclusion: More Than Just Faster Responses

OpenAI's WebSocket Mode for the Responses API represents what one observer called "quietly one of the more important shifts in how production agents get built." By addressing the fundamental inefficiency of resending full context with every turn, this update removes a significant barrier to scalable, responsive AI agent deployment.

In an industry often focused on model size and capability metrics, this infrastructure enhancement serves as a reminder that practical deployment considerations—latency, bandwidth, connection management—are equally crucial for AI's real-world impact. As AI agents move from novelty to necessity across industries, such optimizations will determine not just what's possible, but what's practical at scale.

Source: OpenAI WebSocket Mode for Responses API announcement on Product Hunt